Microservices Design Patterns on AWS: 10 Patterns That Actually Matter in 2026

Quick summary: A curated, production-tested guide to microservices patterns on AWS — what to use, what to skip, and what changed in 2026 (App Mesh EOL, VPC Lattice, Powertools idempotency, Step Functions sagas).


Most “microservices patterns” articles read like a museum tour. They list patterns that were essential in 2016 (Eureka service registries, ZooKeeper-coordinated leader elections, hand-rolled Hystrix circuit breakers), add the AWS App Mesh sidecars that came later, and stop there. The 2016 list is not wrong; it is incomplete and, in places, actively misleading for a team building on AWS in 2026.

This guide is the opposite. It curates the microservices patterns that still pull weight on AWS today, calls out the ones that have been quietly replaced, and adds two patterns most lists miss entirely (Outbox and Idempotency) without which your distributed system will silently lose or duplicate data.

If you are still deciding whether to adopt microservices at all, start with microservices vs monolith on AWS. If you have already committed, keep reading.

What Changed Between 2016 and 2026

Three shifts made the old microservices playbook stale:

- Managed services replaced custom infrastructure. You do not run a Eureka or Consul cluster anymore: AWS Cloud Map, ECS Service Connect, and VPC Lattice handle service discovery. You do not write Hystrix circuit breakers either: AWS SDK retry modes handle backoff and jitter, and most service meshes implement circuit breaking at the proxy layer.

- AWS App Mesh is deprecated. AWS has announced that App Mesh reaches end of support on September 30, 2026. New microservices systems on AWS should not adopt App Mesh. The replacements are Amazon VPC Lattice (for inter-service connectivity across VPCs and accounts) and Istio or Linkerd on EKS (for traditional service mesh features).

- Reliability moved into the application layer. Idempotency keys, the Outbox pattern, and Saga orchestration are no longer optional add-ons — they are the difference between a system that works and a system that silently corrupts data under load. AWS Lambda Powertools and Step Functions made these patterns easy enough that there is no excuse to skip them.

With that context, here are the patterns that matter.

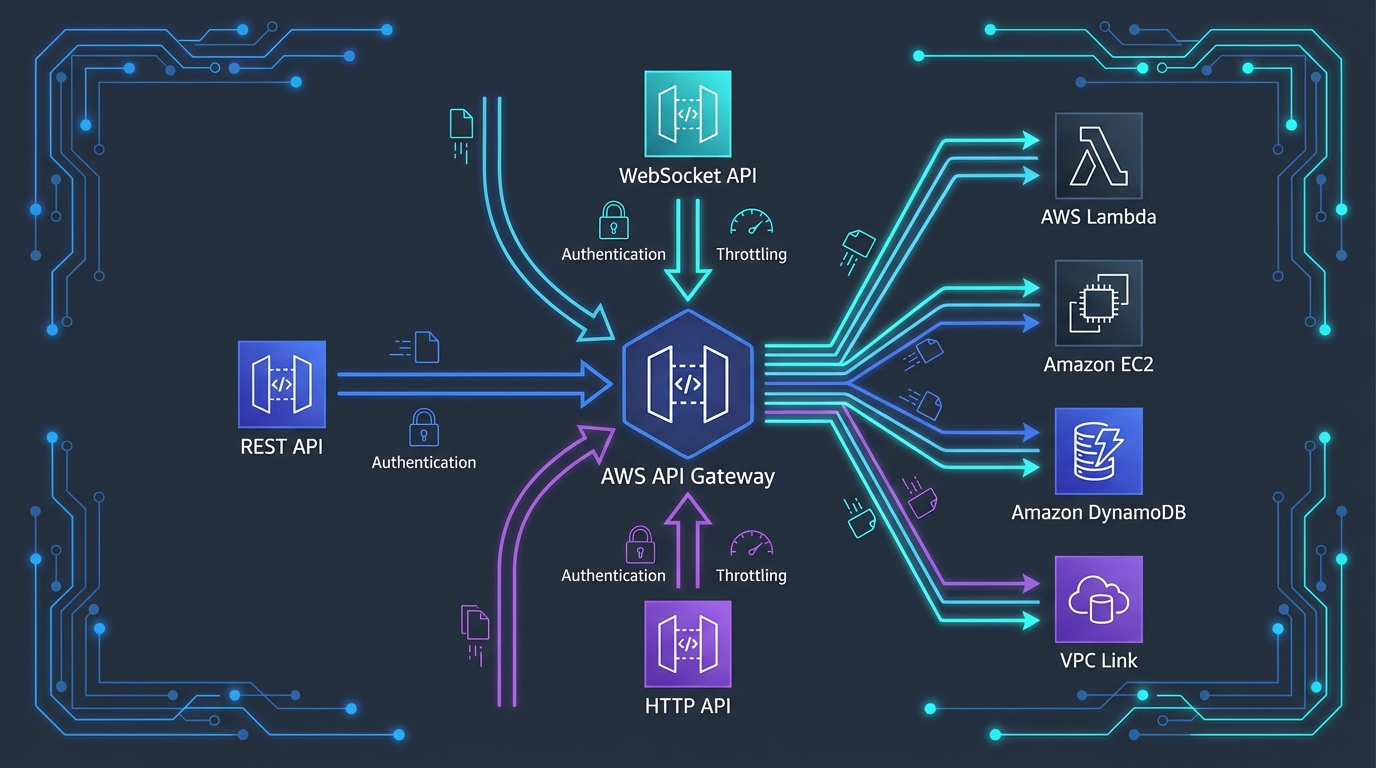

Pattern 1: API Gateway and Backend for Frontend (BFF)

A single edge entry point that routes external traffic to internal services and handles authentication, throttling, and request transformation.

Why it still matters: Without an API gateway, every microservice has to reimplement auth, rate limiting, CORS, and request validation. With one, those concerns live in one place and your services stay focused on business logic.

On AWS: Use Amazon API Gateway (HTTP APIs preferred over REST APIs for most cases) for public APIs, or Application Load Balancer for internal HTTP traffic. CloudFront sits in front for caching and DDoS protection.

The BFF evolution: A single shared API gateway becomes a bottleneck once you have multiple frontend types (web, mobile, partner integrations). Each frontend has different aggregation needs — mobile wants compact payloads, web wants full data, partners want batch endpoints. The Backend-for-Frontend pattern gives each consumer its own thin gateway:

- Web App → Web BFF (Lambda) → User, Order, and Payment services
- Mobile App → Mobile BFF (Lambda) → User, Order, and Payment services
- Partner API → Partner BFF (Lambda) → User, Order, and Payment services

Each BFF aggregates and shapes data for its specific client. You can change the mobile BFF without affecting the web BFF, and the underlying services stay stable.
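To make the split concrete, here is a minimal Python sketch of a web BFF and a mobile BFF that call the same downstream services but shape different payloads. `fetch_user`, `fetch_orders`, and every field name are hypothetical stand-ins for real HTTP or SDK calls to the User and Order services; in a Lambda-based BFF these functions would sit behind the handler.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Order:
    order_id: str
    total_cents: int
    status: str
    line_items: List[dict]


# Hypothetical stand-ins for calls to the User and Order services.
def fetch_user(user_id: str) -> dict:
    return {"id": user_id, "name": "Ada", "email": "ada@example.com", "tier": "gold"}


def fetch_orders(user_id: str) -> List[Order]:
    return [
        Order("o-1", 4200, "SHIPPED", [{"sku": "A", "qty": 1}]),
        Order("o-2", 1500, "PENDING", [{"sku": "B", "qty": 3}]),
    ]


def web_bff_dashboard(user_id: str) -> dict:
    """Web BFF: full profile plus every order with its line items."""
    user = fetch_user(user_id)
    orders = fetch_orders(user_id)
    return {
        "user": user,
        "orders": [
            {"id": o.order_id, "total_cents": o.total_cents,
             "status": o.status, "line_items": o.line_items}
            for o in orders
        ],
    }


def mobile_bff_dashboard(user_id: str) -> dict:
    """Mobile BFF: compact payload with only what the home screen renders."""
    user = fetch_user(user_id)
    orders = fetch_orders(user_id)
    return {
        "name": user["name"],
        "open_orders": sum(1 for o in orders if o.status != "SHIPPED"),
    }
```

Because each BFF owns its response shape, trimming the mobile payload is a change to `mobile_bff_dashboard` alone; the services behind it do not move.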

Avoid: Putting business logic in the API Gateway. The gateway should route, authenticate, and rate-limit. The moment it starts joining data for specific clients, you have moved into BFF territory and should split; domain logic belongs in the services behind it.

Pattern 2: Service Discovery (the 2026 Way)

How services find each other without hardcoded IPs or DNS that goes stale during deployments.

What changed: Eureka, Consul, and ZooKeeper service registries are no longer the default on AWS. The platform now provides this:

| Use case | AWS service |
|---|---|
| Service-to-service inside one ECS cluster | ECS Service Connect (managed Envoy, automatic DNS) |
| Service discovery across ECS, EKS, Lambda | AWS Cloud Map (DNS-based or API-based) |
| Cross-VPC, cross-account, cross-cluster | Amazon VPC Lattice |
| EKS-internal | Kubernetes DNS (CoreDNS) plus headless services or Istio |

On AWS: For most teams running ECS or EKS, ECS Service Connect or Kubernetes DNS handles intra-cluster discovery. The moment you cross VPC or account boundaries — common in multi-team environments — VPC Lattice is the lowest-friction option.

Avoid: Running your own service registry. Operating Consul or ZooKeeper for service discovery in 2026 is undifferentiated work.

Pattern 3: Database per Service (with the part most lists skip)

Each service owns its own data store. No service reaches into another service’s database — communication is through APIs or events.

Why it still matters: Shared databases are one of the most common reasons “microservices” projects become distributed monoliths. Two services that share a table are coupled at the schema level: neither can evolve independently, and a slow query in one service degrades the other.

On AWS: Mix data stores by service needs. A common pattern:

- User service → Aurora PostgreSQL (relational, transactional)

- Catalog service → DynamoDB with single-table design (read-heavy, predictable access patterns)

- Search service → OpenSearch (full-text, faceted)

- Analytics service → S3 + Athena (cheap, columnar)

The part most lists skip — dual-write is dangerous. If your service updates a database and publishes an event, you have a dual-write problem: the database commit might succeed and the event publish might fail (or vice versa). Without a fix, your downstream services will silently drift out of sync. The fix is the Outbox pattern, covered next.

Pattern 4: The Outbox Pattern (the missing essential)

Reliably publishes events when a service changes its data, even if the message broker is down.

Why it matters: Without the Outbox pattern, every “microservices” system that publishes events is one network blip away from data inconsistency.

How it works:

- In the same database transaction that updates your business data, write the event to an `outbox` table.

- A separate process reads new outbox rows and publishes them to your message bus (EventBridge, SNS, Kafka).

- Once published, the row is marked sent or deleted.

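Because the business write and the outbox write are in the same local transaction, they cannot diverge. The publishing step retries until it succeeds. If the publisher crashes after publishing but before marking the row sent, the event goes out again, so delivery is at-least-once and consumers must be idempotent (Pattern 6).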
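The mechanics fit in a short sketch. This Python example uses SQLite as a stand-in for Aurora or RDS, and `publish` is a hypothetical callback standing in for an EventBridge, SNS, or Kafka client; table and column names are assumptions for illustration.

```python
import json
import sqlite3
import uuid


def init_db(conn: sqlite3.Connection) -> None:
    conn.executescript(
        """
        CREATE TABLE IF NOT EXISTS orders (
            id TEXT PRIMARY KEY, customer_id TEXT NOT NULL, total_cents INTEGER NOT NULL
        );
        CREATE TABLE IF NOT EXISTS outbox (
            id TEXT PRIMARY KEY, event_type TEXT NOT NULL, payload TEXT NOT NULL,
            sent INTEGER NOT NULL DEFAULT 0
        );
        """
    )


def place_order(conn: sqlite3.Connection, customer_id: str, total_cents: int) -> str:
    order_id = str(uuid.uuid4())
    event = {"order_id": order_id, "customer_id": customer_id, "total_cents": total_cents}
    # One local transaction: both rows commit together or neither does.
    with conn:
        conn.execute("INSERT INTO orders VALUES (?, ?, ?)",
                     (order_id, customer_id, total_cents))
        conn.execute(
            "INSERT INTO outbox (id, event_type, payload) VALUES (?, ?, ?)",
            (str(uuid.uuid4()), "OrderPlaced", json.dumps(event)),
        )
    return order_id


def relay_outbox(conn: sqlite3.Connection, publish) -> int:
    """Publish unsent rows in insertion order; return how many were published."""
    rows = conn.execute(
        "SELECT id, event_type, payload FROM outbox WHERE sent = 0 ORDER BY rowid"
    ).fetchall()
    published = 0
    for row_id, event_type, payload in rows:
        try:
            publish(event_type, json.loads(payload))
        except Exception:
            break  # broker unavailable: leave the row unsent and retry on the next run
        # Marked sent only after a successful publish.
        with conn:
            conn.execute("UPDATE outbox SET sent = 1 WHERE id = ?", (row_id,))
        published += 1
    return published
```

Note the order inside `relay_outbox`: the row is marked sent only after `publish` returns, so a crash between the two produces a duplicate publish rather than a lost event.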

On AWS, three implementations:

| Database | Outbox mechanism |
|---|---|
| DynamoDB | DynamoDB Streams → Lambda → EventBridge (no separate outbox table; the stream is the outbox) |
| Aurora | Outbox table → AWS DMS change data capture → Kinesis Data Streams → Lambda → EventBridge |
| RDS | Outbox table polled by Lambda on a schedule, or Debezium on MSK Connect for change data capture |

The DynamoDB Streams variant is the cleanest — your write to DynamoDB is the durable event record, and the stream guarantees at-least-once delivery to the consumer Lambda.

Avoid: Publishing events directly from application code without an outbox. The pattern looks like `db.commit(); eventBus.publish(event)`, and the gap between those two calls is where data corruption lives.

Pattern 5: Saga Pattern (use Step Functions, not handwritten state machines)

Coordinates a distributed transaction across multiple services, with compensating actions if a step fails.

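Why it matters: There is no practical two-phase commit across microservices on AWS. Each service owns a different data store, and stores like DynamoDB, event buses, and third-party APIs cannot join a cross-service distributed transaction. The Saga pattern is the practical replacement: instead of locking everything until the transaction commits, you run a sequence of local transactions, and if any step fails, you run compensating actions to undo the previous steps.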

Classic example — order placement:

- Reserve inventory (compensation: release inventory)

- Charge payment (compensation: refund payment)

- Schedule shipment (compensation: cancel shipment)

- Send confirmation email (no compensation — best-effort)

If step 3 fails, the saga runs compensations for steps 2 and 1.
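The compensation order is the whole trick, so here is an in-memory Python sketch of an orchestrated saga for the order example. It is illustrative only: the step implementations are hypothetical stubs that record what they did, state lives in a local dict, and nothing survives a crash, which is exactly the gap a durable orchestrator closes.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class SagaStep:
    name: str
    action: Callable[[dict], None]
    # None marks a best-effort step with nothing to undo.
    compensate: Optional[Callable[[dict], None]] = None


def run_saga(steps: List[SagaStep], ctx: dict) -> bool:
    """Run steps in order; on the first failure, undo completed steps in reverse."""
    completed: List[SagaStep] = []
    for step in steps:
        try:
            step.action(ctx)
        except Exception as exc:
            ctx.setdefault("log", []).append(f"{step.name} failed: {exc}")
            for done in reversed(completed):
                if done.compensate is not None:
                    done.compensate(ctx)
            return False
        completed.append(step)
    return True


# Hypothetical step implementations that only record what they did.
def record(message: str) -> Callable[[dict], None]:
    return lambda ctx: ctx.setdefault("log", []).append(message)


def schedule_shipment(ctx: dict) -> None:
    raise RuntimeError("carrier API unavailable")


order_saga = [
    SagaStep("reserve_inventory", record("reserved inventory"), record("released inventory")),
    SagaStep("charge_payment", record("charged payment"), record("refunded payment")),
    SagaStep("schedule_shipment", schedule_shipment, record("cancelled shipment")),
    SagaStep("send_confirmation", record("sent confirmation")),  # best-effort
]
```

Running `run_saga(order_saga, {})` returns `False` after logging the shipment failure, then the refund, then the inventory release, matching the step 3 example above.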

Choreography vs orchestration:

- Choreography — Each service listens to events and decides what to do. No central coordinator. Uses EventBridge or SNS.

- Orchestration — A central coordinator drives the saga and tracks state. Uses AWS Step Functions.

The 2026 default: orchestration via Step Functions. Choreography sounds elegant but becomes a debugging nightmare past three or four steps — there is no single place to see “what is the state of order 12345?”. Step Functions gives you the state machine, the visual debugger, the built-in retries and catch handlers, and an audit trail. Use choreography only for simple fire-and-forget fan-out (a new order triggers analytics + recommendations + audit logging in parallel — none of them depend on each other).

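On AWS: Step Functions Standard workflows for sagas. Express workflows cap executions at five minutes and use at-least-once (asynchronous) or at-most-once (synchronous) execution semantics, while Standard workflows run for up to a year with exactly-once workflow execution, which is the durability compensations need.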
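As a sketch of what that Standard workflow might look like, this Python snippet builds an Amazon States Language definition for the same order saga: each forward step has a `Retry` block for transient Lambda errors and a `Catch` that routes to the compensations. The Lambda ARNs, account ID, and function names are hypothetical; `JitterStrategy` is the ASL retrier field for full jitter.

```python
import json

# Hypothetical account, Region, and function names.
ARN = "arn:aws:lambda:us-east-1:123456789012:function:{}"

RETRY_TRANSIENT = [{
    "ErrorEquals": ["Lambda.ServiceException", "Lambda.TooManyRequestsException"],
    "IntervalSeconds": 1,
    "MaxAttempts": 3,
    "BackoffRate": 2.0,
    "JitterStrategy": "FULL",
}]


def task(function_name: str, next_state: str, on_error: str = "") -> dict:
    """A Lambda Task state; on_error names the state to run if retries are exhausted."""
    state = {
        "Type": "Task",
        "Resource": ARN.format(function_name),
        "Retry": RETRY_TRANSIENT,
        "Next": next_state,
    }
    if on_error:
        # Keep the original input and attach the error under $.error.
        state["Catch"] = [{"ErrorEquals": ["States.ALL"],
                           "ResultPath": "$.error", "Next": on_error}]
    return state


definition = {
    "Comment": "Order saga with compensations (sketch)",
    "StartAt": "ReserveInventory",
    "States": {
        "ReserveInventory": task("reserve-inventory", "ChargePayment", on_error="OrderFailed"),
        "ChargePayment": task("charge-payment", "ScheduleShipment", on_error="ReleaseInventory"),
        "ScheduleShipment": task("schedule-shipment", "SendConfirmation", on_error="RefundPayment"),
        # Best-effort: a failed email still ends the saga successfully.
        "SendConfirmation": task("send-confirmation", "OrderPlaced", on_error="OrderPlaced"),
        # Compensations run in reverse order of the forward steps.
        "RefundPayment": task("refund-payment", "ReleaseInventory"),
        "ReleaseInventory": task("release-inventory", "OrderFailed"),
        "OrderPlaced": {"Type": "Succeed"},
        "OrderFailed": {"Type": "Fail", "Error": "OrderFailed", "Cause": "Saga compensated"},
    },
}

asl_json = json.dumps(definition, indent=2)
```

You would pass `asl_json` as the definition when creating the state machine. A production version would likely give the compensation tasks more retry attempts and alert when they still fail, since a failed refund is worse than a failed charge.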

Pattern 6: Idempotency (the second missing essential)

Makes operations safe to retry without causing duplicate side effects.

Why it matters: Distributed systems retry. Networks drop packets. Lambda retries failed asynchronous invocations and stream batches. SQS redelivers. EventBridge can deliver the same event twice. Without idempotency, every retry is a potential duplicate charge, duplicate email, or duplicate inventory deduction.

The pattern:

- The caller generates an idempotency key (a UUID, or a deterministic hash of the request).

- The service stores the key with the result of the first execution.

- On any retry with the same key, the service returns the stored result without re-executing.
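A minimal Python sketch of those three steps, assuming an in-memory store in place of DynamoDB (single process, no TTL). It claims the key with an in-progress marker before running the operation, similar in spirit to how the Powertools utility uses a conditional write, so a concurrent duplicate is rejected instead of executed twice. `charge_card` is a hypothetical side effect.

```python
import hashlib
import json
import threading
from typing import Any, Callable, Dict, Tuple


class IdempotencyInProgressError(Exception):
    """A concurrent request with the same key has not finished yet."""


class IdempotencyStore:
    """In-memory stand-in for an idempotency table (single process, no TTL)."""

    def __init__(self) -> None:
        self._records: Dict[str, Tuple[str, Any]] = {}  # key -> (status, result)
        self._lock = threading.Lock()

    def run_once(self, key: str, fn: Callable[[], Any]) -> Any:
        # Claim the key atomically, like a conditional put with attribute_not_exists.
        with self._lock:
            record = self._records.get(key)
            if record is not None:
                status, result = record
                if status == "COMPLETED":
                    return result  # retry: replay the stored result, no side effects
                raise IdempotencyInProgressError(key)
            self._records[key] = ("IN_PROGRESS", None)
        try:
            result = fn()
        except Exception:
            with self._lock:
                del self._records[key]  # failed attempts may be retried
            raise
        with self._lock:
            self._records[key] = ("COMPLETED", result)
        return result


def request_key(payload: dict) -> str:
    """Deterministic key: SHA-256 of the request serialized as canonical JSON."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


charges = []


def charge_card() -> dict:
    """Hypothetical side effect: would call a payment provider."""
    charges.append(4200)
    return {"charge_id": f"ch-{len(charges)}"}


store = IdempotencyStore()
key = request_key({"order_id": "o-1", "amount_cents": 4200})
first = store.run_once(key, charge_card)
retry = store.run_once(key, charge_card)  # same key: stored result, no second charge
```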

On AWS:

- Lambda: Use the AWS Lambda Powertools idempotency utility (Python, TypeScript, Java, .NET). It handles the key extraction, DynamoDB persistence, and TTL automatically.

- Step Functions: Pass an idempotency key into each task and let the downstream Lambda handle de-duplication.

- DynamoDB: Conditional writes with `attribute_not_exists(idempotency_key)` give you idempotency cheaply for direct table operations.

Where to apply it (in priority order):

- Anything that takes money (payments, charges, refunds)

- Anything that emails or notifies users

- Anything that mutates external state (creates a customer in Stripe, posts to a third-party API)

- Any Lambda triggered by SQS, EventBridge, or DynamoDB Streams (all of which deliver at-least-once)

Avoid: Treating idempotency as an “if we have time” task. The retries are happening whether you handle them or not.

Pattern 7: Circuit Breaker and Smart Retries

Stops a failing dependency from taking down its callers, and retries transient failures intelligently.

What changed in 2026: You should not be writing circuit breakers from scratch. Three layers handle it:

- AWS SDK retry modes: the standard mode retries transient errors with exponential backoff and jitter, and adaptive mode adds client-side rate limiting that slows your calls further when AWS throttles them. Set the SDK retry mode to `adaptive` and you get this on every AWS API call without writing retry code.

- Service mesh / VPC Lattice: Envoy-based meshes such as Istio implement circuit breaking (outlier detection) at the proxy level for east-west service traffic, and VPC Lattice stops routing to targets that fail health checks. No application code changes.

- Step Functions `Retry` blocks: declarative retry configuration with exponential backoff and jitter.

The retry math that matters:

- Always use jitter. Synchronized retries from many clients cause thundering herds. AWS recommends full jitter: `sleep = random(0, min(cap, base * 2^attempt))`.

- Set a retry budget. Don’t retry forever. 3 attempts with exponential backoff is a sane default for synchronous calls.

- Don’t retry non-idempotent operations unless you have an idempotency key (Pattern 6).
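A sketch of those three rules in Python: full-jitter backoff with a cap, a fixed retry budget, and retries only for errors marked transient. `TransientError`, the 0.1 s base, and the 5 s cap are assumptions for illustration. The AWS SDK retry modes already do this for AWS API calls, so a helper like this only makes sense for your own downstream calls.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures (throttling, timeouts, 5xx)."""


def full_jitter_delay(attempt: int, base: float = 0.1, cap: float = 5.0,
                      rng: Callable[[float, float], float] = random.uniform) -> float:
    """Full jitter: sleep = random(0, min(cap, base * 2**attempt)) seconds."""
    return rng(0.0, min(cap, base * 2 ** attempt))


def call_with_retries(fn: Callable[[], T], max_attempts: int = 3,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn up to max_attempts times in total, retrying only TransientError."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # retry budget exhausted: surface the error
            sleep(full_jitter_delay(attempt))
    raise RuntimeError("unreachable")
```

Anything that is not a `TransientError`, including validation errors from non-idempotent operations, propagates on the first attempt.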

Circuit breaker rule of thumb: When 50%+ of requests to a dependency fail in a 30-second window, open the circuit (fail fast for 30 seconds), then half-open and probe. The exact thresholds matter less than having any breaker at all — the failure mode you are preventing is “every thread is blocked waiting on a dead service.”
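To show what that rule of thumb means as states, here is a small Python model of a breaker with closed, open, and half-open states. It illustrates the logic a mesh or library applies for you; it is not a recommendation to ship your own. Defaults follow the numbers above; `min_calls` is an added assumption so one failure out of one call does not trip the breaker, and half-open lets any probe through without limiting concurrency.

```python
import time
from typing import Any, Callable, List, Optional, Tuple


class CircuitOpenError(Exception):
    """Raised instead of calling the dependency while the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_ratio: float = 0.5, window_s: float = 30.0,
                 open_s: float = 30.0, min_calls: int = 10,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_ratio = failure_ratio
        self.window_s = window_s
        self.open_s = open_s
        self.min_calls = min_calls
        self.clock = clock
        self.calls: List[Tuple[float, bool]] = []  # (timestamp, succeeded)
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.open_s:
            return "half-open"  # open period elapsed: let a probe through
        return "open"

    def call(self, fn: Callable[[], Any]) -> Any:
        state = self.state
        if state == "open":
            raise CircuitOpenError("dependency marked unhealthy; failing fast")
        try:
            result = fn()
        except Exception:
            self._record(False)
            if state == "half-open":
                self.opened_at = self.clock()  # probe failed: open again
            else:
                self._maybe_open()
            raise
        self._record(True)
        if state == "half-open":
            self.opened_at = None  # probe succeeded: close and reset history
            self.calls.clear()
        return result

    def _record(self, succeeded: bool) -> None:
        now = self.clock()
        self.calls.append((now, succeeded))
        # Keep only calls inside the sliding window.
        self.calls = [(t, ok) for t, ok in self.calls if now - t <= self.window_s]

    def _maybe_open(self) -> None:
        failures = sum(1 for _, ok in self.calls if not ok)
        if (len(self.calls) >= self.min_calls
                and failures / len(self.calls) >= self.failure_ratio):
            self.opened_at = self.clock()
```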

Avoid: Hand-rolled circuit breakers in your service code in 2026. They are fragile, hard to test, and duplicate work the platform now does.

Pattern 8: Bulkhead Isolation

Isolates failure domains so a problem in one part of the system cannot consume resources used by another.

Why it still matters: Resource pools are the silent killer of microservices. If service A and service B both call dependency C, and C slows down, both services’ threads pile up waiting on C. Eventually they exhaust the connection pool — and now A and B are down too, even though only C had a problem.

On AWS:

| Resource | Bulkhead implementation |
|---|---|
| Lambda concurrency | Reserved concurrency per function, so one runaway function cannot starve others |
| ECS task placement | Per-service capacity providers and task-level resource limits |
| Database connections | A separate RDS Proxy per service with a capped `MaxConnectionsPercent`, or per-service connection pools |
| SQS queues | Per-consumer queues, separate dead-letter queues |
| Thread pools (in app) | Separate thread pools per dependency (e.g., one for the database, one for HTTP) |

The Lambda example: Without reserved concurrency, a misbehaving function can consume your account's entire concurrency quota in a Region (1,000 by default), starving every other Lambda. Reserve concurrency on critical functions (payment-processor: 200) so the rest of the account cannot starve them, and cap risky functions (bulk-importer: 50) so they cannot starve the rest.
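The in-app row of the table above (separate pools per dependency) can be sketched in Python with one bounded semaphore per dependency. Dependency names and limits are hypothetical. When a bulkhead is full, the caller fails fast instead of queueing, so a slow recommendations API cannot tie up the capacity the payments path needs.

```python
import threading
from contextlib import contextmanager
from typing import Dict, Iterator


class BulkheadFullError(Exception):
    """All slots for this dependency are busy; fail fast instead of queueing."""


class Bulkhead:
    def __init__(self, name: str, max_concurrent: int) -> None:
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    @contextmanager
    def slot(self) -> Iterator[None]:
        # Non-blocking acquire: a slow dependency cannot pile up waiting callers.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError(f"{self.name}: no free slots")
        try:
            yield
        finally:
            self._slots.release()


# One bulkhead per dependency, sized independently (hypothetical limits).
BULKHEADS: Dict[str, Bulkhead] = {
    "payments-db": Bulkhead("payments-db", max_concurrent=20),
    "recommendations-api": Bulkhead("recommendations-api", max_concurrent=1),
}
```

A call site wraps each dependency call in `with BULKHEADS["payments-db"].slot(): ...`, so exhaustion in one pool never borrows capacity from another.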

Pattern 9: Strangler Fig (the safe path off a monolith)

Gradually replaces a monolith by routing specific functionality to new microservices, leaving the monolith running until every piece is migrated.

Why it still matters: Big-bang rewrites fail far more often than they succeed. The strangler fig pattern is the migration approach with the most consistent track record on real systems.

The mechanics:

- Put a router (API Gateway, ALB, or proxy) in front of the monolith.

- For each new feature or extracted module, deploy a new microservice and update the router to send matching traffic to it instead of the monolith.

- Over time, the monolith shrinks. When it is small enough, retire it.

On AWS, the typical setup:

Client → Route 53 → CloudFront → ALB, with path-based routing:

- /users/* → Monolith (ECS)

- /orders/* → New Order Service (ECS)

- /payments/* → New Payment Service (Lambda)

We have a step-by-step walkthrough of this approach in how to migrate a monolith to ECS Fargate with zero downtime.
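The router's job reduces to ordered prefix rules with the monolith as the default target. This Python sketch mirrors how ALB listener rules are evaluated in priority order with a default action; the target names are hypothetical.

```python
from typing import List, Tuple

# Ordered rules: each extracted module adds one entry (hypothetical targets).
ROUTES: List[Tuple[str, str]] = [
    ("/orders/", "order-service"),
    ("/payments/", "payment-service"),
]
DEFAULT_TARGET = "monolith"  # everything not yet extracted


def route(path: str) -> str:
    """Return the target for a request path; unmatched paths stay on the monolith."""
    for prefix, target in ROUTES:
        if path.startswith(prefix):
            return target
    return DEFAULT_TARGET
```

Extracting a module means adding one rule, and rolling back means deleting it; unmatched paths such as `/users/42` keep flowing to the monolith.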

The key trap: Data migration. Splitting a route is easy; splitting a database is hard. Plan your data migration before your traffic migration. Often the right sequence is: extract the service to its own database with two-way sync, run both for a while, then cut over reads, then writes, then turn off the sync.

Pattern 10: Sidecar / Service Mesh (post-App-Mesh)

A helper container deployed alongside each service to handle cross-cutting concerns — observability, mTLS, traffic shaping, retries, circuit breaking.

What changed in 2026: AWS App Mesh reaches end-of-support on September 30, 2026. Do not start new projects on App Mesh. The current options:

| Option | Best for |
|---|---|
| Amazon VPC Lattice | Cross-VPC, cross-account, cross-compute (ECS + Lambda + EC2) connectivity |
| Istio on EKS | Full-featured service mesh with mTLS, fine-grained traffic policy, broad ecosystem |
| Linkerd on EKS | Lightweight alternative to Istio with simpler operations |
| ECS Service Connect | Inside one ECS cluster, simpler than a full mesh |
| Application code (no mesh) | Small systems where the operational cost of a mesh exceeds the value |

The honest take: Most teams under 30 engineers do not need a service mesh. The mesh adds operational complexity (more sidecars to monitor, more failure modes) that pays off only at scale. Start with VPC Lattice or ECS Service Connect for inter-service connectivity, and reach for Istio when you actually need fine-grained traffic policy or zero-trust mTLS at scale.

The sidecar pattern still matters even without a mesh — observability agents (CloudWatch agent, OpenTelemetry collector), log shippers, and secrets-rotation agents all run as sidecars. The pattern is alive; the App Mesh implementation is not.

Patterns to Use Selectively, Not by Default

Three patterns from the classic list are real but are also the most overused. They are powerful when the use case fits and a footgun otherwise.

Event Sourcing

Stores every change to state as a sequence of immutable events. The current state is derived by replaying events.

Use it when: You need a perfect audit trail (financial systems, regulatory compliance), or when the same domain events drive multiple read models (analytics, search, notifications) and you want one source of truth.

Don’t use it when: You just want “log all changes.” A regular audit log table or DynamoDB Streams gives you that without forcing your entire data model to be event-shaped. Event sourcing is a commitment to a different programming model — make it deliberately, not by accident.
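The core idea, that current state is a fold over an append-only log, fits in a few lines of Python. The bank-account domain and event names are hypothetical; a real event store would typically add per-aggregate versioning, snapshots, and durable storage.

```python
from dataclasses import dataclass
from functools import reduce
from typing import Iterable, List


@dataclass(frozen=True)
class Event:
    kind: str  # "Deposited" or "Withdrawn" in this hypothetical account domain
    amount_cents: int


def apply_event(balance: int, event: Event) -> int:
    """Pure transition: old state + one event -> new state."""
    if event.kind == "Deposited":
        return balance + event.amount_cents
    if event.kind == "Withdrawn":
        return balance - event.amount_cents
    raise ValueError(f"unknown event kind: {event.kind}")


def replay(events: Iterable[Event]) -> int:
    """Current state is a left fold over the event log, starting from zero."""
    return reduce(apply_event, events, 0)


# Append-only log: events are added, never updated or deleted.
log: List[Event] = [
    Event("Deposited", 10_000),
    Event("Withdrawn", 2_500),
    Event("Deposited", 500),
]
```

Replaying a prefix of the log reconstructs the state at any earlier point (`replay(log[:2])` is the balance before the last deposit), which is where the audit-trail benefit comes from.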

CQRS (Command Query Responsibility Segregation)

Splits write models from read models. Writes go through one path optimized for transactional consistency; reads come from a separate path optimized for query performance.

Use it when: Read load dwarfs write load by 10x or more, or read patterns differ wildly from write patterns (writes are simple updates; reads are complex aggregations).

Don’t use it when: Your reads and writes have similar patterns and similar load. CQRS doubles the moving parts in exchange for read scalability — that trade is good only when you actually have a read scalability problem.
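A minimal Python sketch of the split, with hypothetical order and customer names: commands go through a write model that validates and emits events, and a separate read model projects those events into the shape one query needs. Here the projection updates synchronously in-process; on AWS it would more likely be an asynchronous consumer (for example, a Lambda on DynamoDB Streams), which makes the read side eventually consistent.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class OrderPlaced:
    order_id: str
    customer_id: str
    total_cents: int


class OrderCommands:
    """Write side: validates commands, stores orders, emits events."""

    def __init__(self) -> None:
        self._orders: Dict[str, OrderPlaced] = {}
        self.subscribers: List[Callable[[OrderPlaced], None]] = []

    def place_order(self, order_id: str, customer_id: str, total_cents: int) -> None:
        if total_cents <= 0:
            raise ValueError("total must be positive")
        if order_id in self._orders:
            raise ValueError(f"duplicate order {order_id}")
        event = OrderPlaced(order_id, customer_id, total_cents)
        self._orders[order_id] = event
        for handler in self.subscribers:
            handler(event)


class CustomerSpendView:
    """Read side: a denormalized projection shaped for one query."""

    def __init__(self) -> None:
        self._rows: Dict[str, Dict[str, int]] = {}

    def on_order_placed(self, event: OrderPlaced) -> None:
        row = self._rows.setdefault(event.customer_id, {"orders": 0, "spent_cents": 0})
        row["orders"] += 1
        row["spent_cents"] += event.total_cents

    def summary(self, customer_id: str) -> Dict[str, int]:
        return dict(self._rows.get(customer_id, {"orders": 0, "spent_cents": 0}))
```

The read side answers "how much has this customer spent?" with a single lookup, without the write side ever running an aggregation.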

API Composition

A separate service aggregates data from multiple microservices into a single response.

Why it is mostly subsumed by BFF (Pattern 1): API composition was popular before BFFs were common. In 2026, the same problem (“we need data from three services in one response”) is usually solved with a BFF — a thin per-client gateway that does the composition. If you find yourself building a generic composition service used by many clients, consider whether it is actually a BFF in disguise.

Patterns We Removed from the Old List (and Why)

| Old pattern | Why it’s gone in 2026 |
|---|---|
| Two-phase commit | Doesn’t work across microservices on AWS. Sagas (Pattern 5) replace it. |
| Eureka / Consul / ZooKeeper service discovery | Replaced by AWS Cloud Map, ECS Service Connect, VPC Lattice (Pattern 2). |
| Hystrix-style hand-rolled circuit breakers | Replaced by SDK adaptive retries and mesh-level breakers (Pattern 7). |
| Shared database with table-level locks | The thing we are trying to escape: shared databases are how microservices become distributed monoliths. |
| Synchronous chains of more than 2-3 services | Bad design at any scale. Use events or orchestration instead. |
| AWS App Mesh | End-of-support 2026-09-30. Migrate to VPC Lattice, ECS Service Connect, or Istio on EKS. |

How to Choose Patterns for Your System

Patterns are tools, not laws. Use this rough decision order:

- Are you doing microservices at all? Re-read microservices vs monolith on AWS. For teams of fewer than five engineers, the answer is usually no.

- Start with the table stakes. API Gateway (Pattern 1), Database per Service (Pattern 3), Idempotency (Pattern 6), and Smart Retries (Pattern 7) — these are not optional in production.

- Add Outbox the moment you publish an event. Otherwise you have a dual-write bug waiting to fire.

- Add Saga the moment you have a multi-step transaction across services. Use Step Functions, not handwritten state machines.

- Add Bulkhead before you need it. Reserved Lambda concurrency and per-service connection pools cost almost nothing and prevent the worst class of cascading failures.

- Reach for Service Mesh, Event Sourcing, or CQRS only with a specific reason. They are powerful and expensive — you should be able to name the problem they solve in your system, not adopt them because they are on a list.

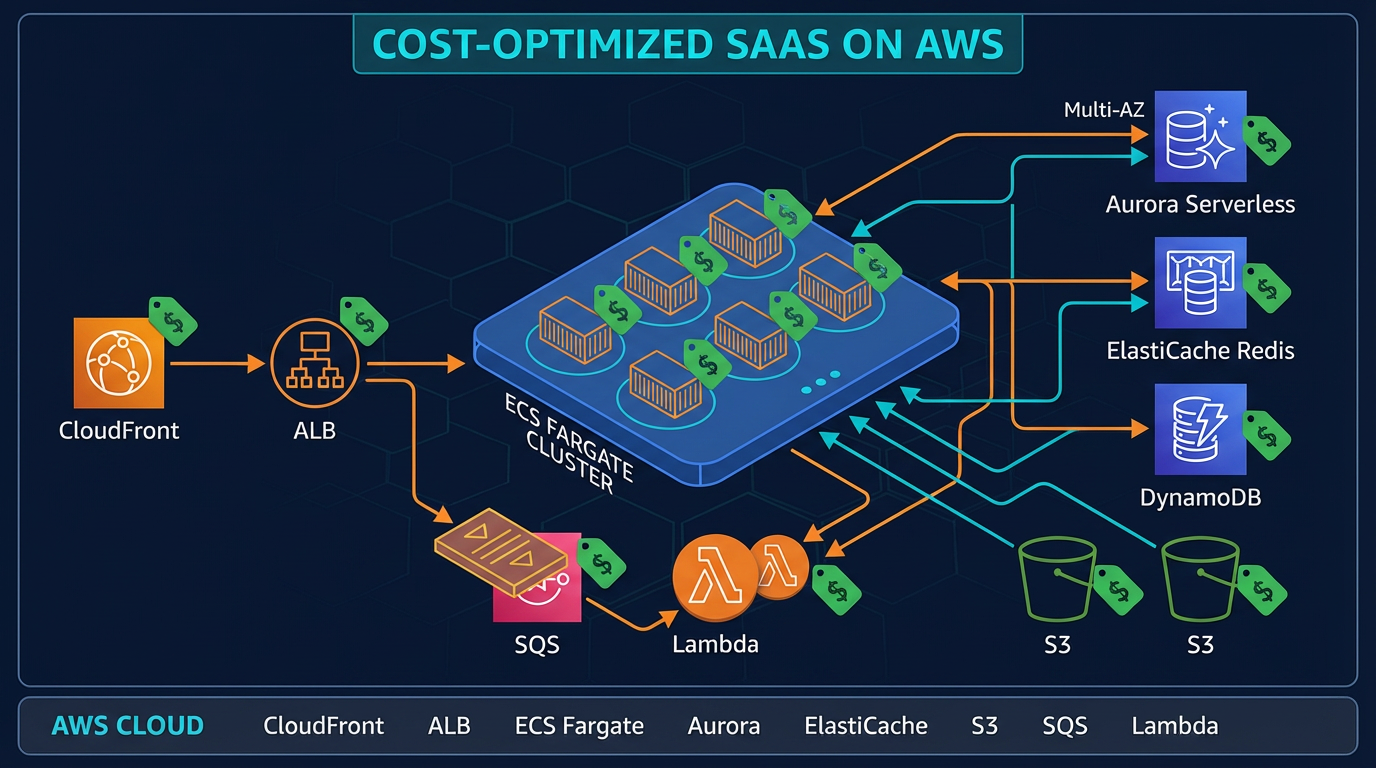

A Realistic Reference Architecture

Pulling it together, a modern AWS microservices system in 2026 looks roughly like this:

- Edge: CloudFront → WAF → API Gateway / ALB

- Backends for frontends: Web BFF and Mobile BFF (Pattern 1)

- Services, each with its own store: User Service (Aurora PostgreSQL), Order Service (DynamoDB), Payment Service (Aurora PostgreSQL), Notification Service (stateless)

- Outbox: DynamoDB Streams on the Order Service table (Pattern 4)

- Events: User, Order, and Payment services publish to EventBridge, with choreography for independent fan-out

- Orchestration: EventBridge events start a Step Functions saga (Pattern 5), whose Lambda tasks run with reserved concurrency (Pattern 8) and Powertools idempotency (Pattern 6)

- Discovery: ECS Service Connect / VPC Lattice (Pattern 2)

- Mesh: Istio on EKS, only if needed (Pattern 10)

- Migration: Strangler Fig at the ALB (Pattern 9)

This labels eight of the ten patterns. Database per Service (Pattern 3) is implicit in the per-service data stores, and Circuit Breaker and Smart Retries (Pattern 7) live in the SDK and proxy layers rather than in a component of their own. CQRS and Event Sourcing are left out on purpose: they belong in specific services that need them, not in the platform default.

Closing Thought

The microservices patterns that survived to 2026 are the ones that solve a specific failure mode: dual writes (Outbox), retries causing duplicates (Idempotency), distributed transactions without 2PC (Saga), cascading failures (Bulkhead, Circuit Breaker), and migrations off monoliths (Strangler Fig). The patterns that fell away — Eureka registries, Hystrix breakers, App Mesh sidecars — fell away because AWS-managed services or the SDK now handle them better.

If you adopt the ten patterns in this guide on a system that is genuinely large enough to need microservices, you will spend most of your time on business logic instead of plumbing. That is what microservices were always supposed to enable.

For organizations modernizing legacy applications into microservices on AWS, see our AWS Application Modernization services. For architecture review of an in-progress microservices system, see AWS Architecture Review. For serverless microservices implementation guidance, see AWS Serverless Architecture Services.


AWS Cloud Architect & AI Expert

AWS-certified cloud architect and AI expert with deep expertise in cloud migrations, cost optimization, and generative AI on AWS.