Amazon Bedrock Now Offers OpenAI Models, Codex, and Managed Agents: What It Means for Enterprise AI

Quick summary: AWS just made OpenAI's frontier models, Codex, and production-ready Managed Agents available inside Amazon Bedrock — wrapped in IAM, PrivateLink, Guardrails, and CloudTrail. Here is what changes for CTOs evaluating OpenAI direct vs. AWS.

On April 28, 2026 AWS announced that Amazon Bedrock now offers OpenAI’s latest models, the Codex coding agent, and a new class of Bedrock Managed Agents powered by OpenAI — all in Limited Preview. The accompanying partnership announcement frames it as bringing “frontier intelligence to the infrastructure you already trust.”

For CTOs and platform teams that have been running parallel workloads on OpenAI’s API and Bedrock, the procurement story, the security story, and the agent-runtime story all collapse into a single AWS-governed surface. This post breaks down what was announced, what genuinely changes, and what to do this quarter — written from the perspective of an AWS Select Tier Consulting Partner deploying production GenAI for healthcare, SaaS, and ecommerce clients.

Note on Limited Preview: Specific OpenAI model SKUs, region availability, on-demand pricing, and GA timing are not yet publicly disclosed. Treat any architecture you commit to as preview-tier until AWS publishes a stable model catalog. Box CTO Ben Kus is the only customer named in the announcement.

TL;DR for busy CTOs

- OpenAI’s frontier models are now first-class citizens inside Amazon Bedrock, alongside Claude, Llama, Mistral, and Nova.

- Codex — the coding agent used weekly by 4M+ developers — runs on Bedrock through the same CLI, desktop app, and VS Code extension developers already know, but authenticates with AWS credentials instead of personal OpenAI keys.

- Bedrock Managed Agents, powered by OpenAI, are production-grade agents with persistent memory, skills, an IAM identity, and AgentCore compute; AWS handles the runtime.

- Enterprise controls are baked in: IAM, PrivateLink, Guardrails, encryption, CloudTrail — and each agent runs with its own identity and audit log.

- OpenAI usage counts toward existing AWS cloud commitments, so OpenAI spend rolls into the AWS bill rather than a parallel procurement.

- The “Bedrock vs. OpenAI API” decision matrix — including our existing comparison — has shifted. For most regulated enterprises, Bedrock is now the default path to OpenAI, not an alternative to OpenAI.

What AWS actually announced (April 28, 2026)

The announcement bundles three offerings that arrive together but solve different problems:

1. OpenAI frontier models on the Bedrock API

OpenAI’s latest models are accessible through the Bedrock InvokeModel and Converse APIs alongside Anthropic Claude 4.6, Meta Llama 4, Amazon Nova, Mistral, DeepSeek, Qwen, and the rest of the ~100-model Bedrock catalog. For application teams, this means you can swap providers behind the same SDK call — and Bedrock-native features like Knowledge Bases for RAG, Guardrails, and Flows for orchestration compose with OpenAI models the same way they compose with Claude.
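As a minimal sketch of what "same SDK call, different provider" could look like, the helper below builds a Converse request and reads the reply. The request and response shapes follow the boto3 `bedrock-runtime` `converse` call; both model IDs are placeholders we made up, because the preview OpenAI SKUs are not yet published.

```python
from typing import Any

# Placeholder model IDs (assumptions): replace them with the IDs shown in
# your Bedrock console once preview access is granted.
OPENAI_MODEL_ID = "openai.example-model-v1:0"      # hypothetical
CLAUDE_MODEL_ID = "anthropic.example-claude-v1:0"  # hypothetical


def build_converse_request(model_id: str, prompt: str, max_tokens: int = 512) -> dict[str, Any]:
    """Build the keyword arguments for a bedrock-runtime `converse` call."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(client: Any, model_id: str, prompt: str) -> str:
    """Send a single user turn and return the reply's text blocks, joined."""
    response = client.converse(**build_converse_request(model_id, prompt))
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)
```

With a real client, for example `boto3.client("bedrock-runtime", region_name="us-east-1")`, switching providers would then be a matter of passing `CLAUDE_MODEL_ID` instead of `OPENAI_MODEL_ID` to `ask`, assuming both models are enabled in that region.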

2. Codex on AWS credentials

Codex — OpenAI’s coding agent — is available through the Bedrock API via three surfaces:

- Codex CLI for terminal-based agentic coding sessions

- Codex Desktop App for a longer-running workspace

- Codex VS Code extension for inline integration

The headline change is authentication. Developers sign in with AWS credentials (typically through IAM Identity Center / SSO), so each Codex session is attributable to a named human, scoped by IAM permissions, networked through your VPC, and logged to CloudTrail. The shadow-IT problem of personal OPENAI_API_KEY values in dotfiles goes away.

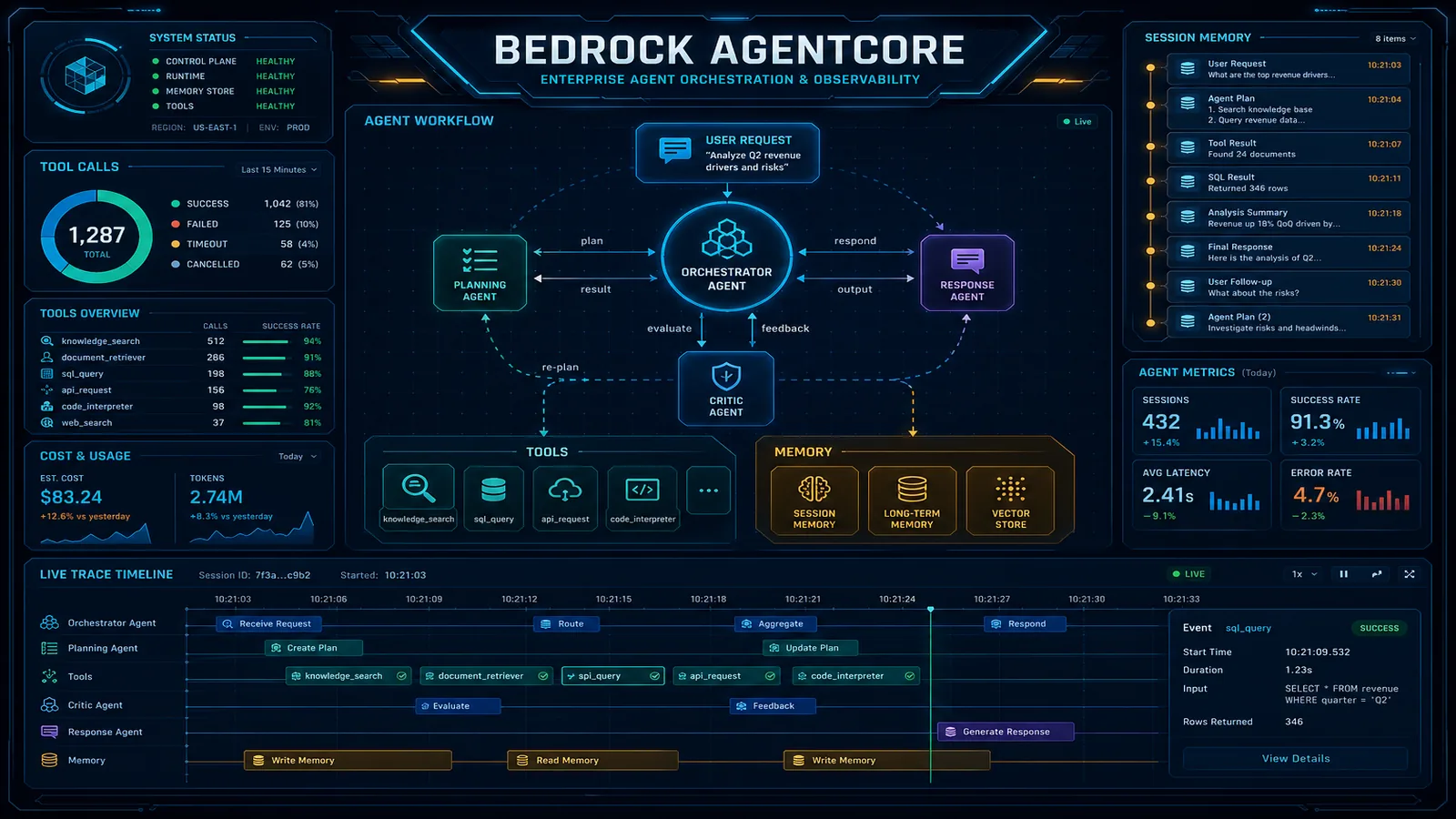

3. Bedrock Managed Agents, powered by OpenAI

This is the most strategic of the three. AWS now offers a fully managed agent runtime where the agent itself — model + framework + memory + tool routing — is operated by AWS on top of Amazon Bedrock AgentCore. According to AWS, Managed Agents include:

- Persistent memory across sessions

- Skills that encode procedures

- An identity that enforces permissions (so the agent inherits and respects IAM)

- Compute options suited to the task (default: AgentCore)

Box CTO Ben Kus, the only named partner in the announcement, describes the value as:

“With Amazon Bedrock Managed Agents, powered by OpenAI, developers can build optimized, production-scale AI applications that bring together the strengths and capabilities of OpenAI’s latest models with the scale, security, and infrastructure of AWS.”

For teams that have already shipped a Bedrock Agent using Claude, the comparison is direct: Managed Agents take you one rung higher in abstraction. You give up some control over the agent loop in exchange for AWS owning the runtime.

OpenAI frontier models on Bedrock: what’s different from the direct API

If your team already calls OpenAI’s API in production, here is what genuinely changes when the same call goes through Bedrock instead:

| Concern | OpenAI direct | OpenAI on Bedrock |
|---|---|---|
| Authentication | Per-developer or per-app API key | AWS IAM, IAM Identity Center, IAM Roles |
| Network path | Public internet to api.openai.com | VPC + AWS PrivateLink option |
| Compliance posture | OpenAI’s own attestations | Inherits Bedrock’s HIPAA-eligible, SOC 2, FedRAMP-in-process posture |
| Audit logging | OpenAI dashboard / your own logging | CloudTrail, native |
| Content safety | OpenAI moderation API (separate) | Bedrock Guardrails (composable) |
| Billing | Separate OpenAI invoice | AWS bill, applies to commitments |
| Model lifecycle | OpenAI deprecation calendar | OpenAI deprecation surfaced through AWS region/SKU lifecycle |
| Region/data residency | OpenAI’s regions | AWS regions (subject to preview availability) |

The compliance and billing rows are typically what tip the decision. A healthcare customer running a HIPAA-eligible workload could not put OpenAI’s direct API in the architecture without a separate BAA conversation. The same model, accessed through Bedrock, sits inside the AWS BAA they already signed.

Codex on AWS credentials: what dev teams gain

Codex through Bedrock is the same Codex developers have been using — same CLI, same VS Code extension, same agentic coding behavior — wired to a different identity provider. Three concrete improvements show up immediately:

1. Identity and offboarding actually work. When a developer leaves, you remove their IAM Identity Center user and their Codex access goes with it — no hunting for stranded API keys in ~/.config. New hires get Codex through the same SSO group membership that already grants them access to AWS Console, S3, and the rest of the toolchain.

2. Per-developer audit trail. Every Codex invocation can be attributed to a human via CloudTrail. For regulated environments — especially in financial services — this is the difference between “we use AI coding assistants” and “we use AI coding assistants and can prove who did what.”

3. Network and data path control. Codex traffic can flow over AWS PrivateLink. For customers with policies against sending source code over the public internet to model providers, this materially changes what is allowed.

The trade-off: you lose the personal-key escape hatch developers have been using to ignore corporate restrictions. That is the point.
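To make the per-developer audit trail concrete, here is a small sketch that counts Bedrock events per identity. It assumes event records in the shape returned by CloudTrail's `lookup_events` (with `EventSource` and `Username` fields). Which event names Codex sessions emit during the preview is not documented, so treat the filter as an assumption to verify against your own trail.

```python
from collections import Counter
from typing import Iterable, Mapping

# Event source that CloudTrail records for Amazon Bedrock API calls.
BEDROCK_EVENT_SOURCE = "bedrock.amazonaws.com"


def invocations_by_user(events: Iterable[Mapping[str, object]]) -> Counter:
    """Count Bedrock events per CloudTrail `Username`, ignoring other services."""
    counts: Counter = Counter()
    for event in events:
        if event.get("EventSource") != BEDROCK_EVENT_SOURCE:
            continue
        counts[str(event.get("Username", "unknown"))] += 1
    return counts
```

The input could come from `boto3.client("cloudtrail").lookup_events(LookupAttributes=[{"AttributeKey": "EventSource", "AttributeValue": "bedrock.amazonaws.com"}])["Events"]`, paginated as needed.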

Bedrock Managed Agents, powered by OpenAI

Managed Agents sit one level above the DIY Bedrock Agent pattern we documented earlier this month. The DIY pattern gives you control: you define action groups, write Lambda tool implementations, set instructions, and own the agent loop. Managed Agents, by contrast, deliver the agent runtime as a service — AWS hosts and scales the loop on AgentCore, and you focus on tools, skills, and memory configuration.

In practice:

- AgentCore provides the default compute environment and is positioned as the open platform for “constructing, linking, and scaling agents across any model and framework.”

- Persistent memory survives session boundaries — the agent remembers context across days, not just inside a single chat.

- Skills encode procedures the agent can invoke, similar to action groups but framework-managed.

- Identity is enforced through IAM, so an agent’s blast radius is bounded by the IAM role it runs under, not by trust in the model.
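To illustrate how an IAM role bounds an agent's blast radius, the sketch below generates a policy document that allows invoking exactly one foundation model. It uses the generic Bedrock pattern (`bedrock:InvokeModel` on a foundation-model ARN). How Managed Agents attach roles in the preview is not yet documented, and the model ID in the usage note is a placeholder.

```python
def agent_invoke_policy(region: str, model_id: str) -> dict:
    """Return an IAM policy document scoped to a single Bedrock foundation model."""
    # Foundation-model ARNs carry no account ID, hence the empty field.
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeSingleModel",
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": model_arn,
            }
        ],
    }
```

Calling `agent_invoke_policy("us-east-1", "openai.example-model-v1:0")` and serializing the result with `json.dumps` gives a document you could attach to the agent's role. Anything the role does not allow, the agent cannot do, regardless of what the model decides.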

When does this beat building it yourself with Bedrock Agents + Claude? Three patterns we expect to dominate:

- Coding assistants beyond Codex. Internal copilots that go past inline completion — agents that take a Jira ticket, branch the repo, draft a PR, and request review.

- Long-running enterprise workflows. Multi-day processes (procurement, onboarding, claims triage) where session-spanning memory matters more than per-call latency.

- Cross-system orchestration. Agents that coordinate across Salesforce, ServiceNow, and S3 where AWS owning the runtime simplifies the operational story.

For agents that need tight control over the loop (deterministic guardrails, custom routing logic, very specific tool sequencing), the DIY Bedrock Agents path and Bedrock Flows remain the right tools.

Enterprise controls baked in

The same controls that have made Bedrock the default GenAI platform for regulated industries apply to OpenAI models, Codex, and Managed Agents the moment they enter the platform:

- IAM and IAM Identity Center for least-privilege access — including for individual developers using Codex and individual agents acting on behalf of services

- AWS PrivateLink for VPC-private connectivity, so model traffic never traverses the public internet

- Bedrock Guardrails for topic denial, PII detection and redaction, harmful content filters, and grounding checks — composable in front of OpenAI calls the same way they compose with Claude

- Encryption at rest (KMS) and in transit (TLS)

- CloudTrail logging for every API call, every Codex action, and every Managed Agent step

- Per-agent identities and per-action logs, which AWS calls out specifically for Managed Agents — so an agent doing autonomous work has the same observability as a human user

For teams that have already invested in Bedrock Guardrails and a VPC-private architecture, OpenAI workloads slot into the same controls without re-architecting.

How this reshapes the “Bedrock vs. OpenAI API” decision

We published AWS Bedrock vs. OpenAI API: Enterprise Decision Guide 2026 earlier this month. The framing was: pick OpenAI direct for raw capability and OpenAI familiarity, pick Bedrock for compliance, model choice, and AWS-native integration. That trade-off just narrowed.

Today, the more accurate framing for a regulated enterprise is:

| If you want… | Pre-announcement | Post-announcement |
|---|---|---|
| OpenAI’s frontier models | OpenAI direct API | Bedrock (preview) |
| HIPAA-eligible OpenAI usage | Not viable | Bedrock |
| OpenAI spend on AWS commitments | Not possible | Bedrock |
| Codex with corporate SSO | Personal keys + workarounds | Bedrock |
| Managed agent runtime on OpenAI | DIY on EC2 / ECS | Bedrock Managed Agents |
| Lowest-latency raw OpenAI calls outside AWS | OpenAI direct API | OpenAI direct API |
| Earliest access to brand-new OpenAI features | OpenAI direct API | OpenAI direct API (then Bedrock) |

OpenAI direct still wins for two profiles: teams whose primary workload is outside AWS (Azure-first, GCP-first, or on-prem), and teams that need bleeding-edge model features the day they ship. For everyone else with a meaningful AWS footprint, Bedrock is now the default path to OpenAI rather than a substitute for OpenAI.

Cost and commitment math

The economics deserve their own section because this is where most boardroom decisions get made.

- Spend consolidation. OpenAI usage on Bedrock is consumed under your AWS account and applied toward your existing AWS cloud commitments. If you are already on an Enterprise Discount Program or a private pricing agreement, OpenAI spend now stretches that commitment instead of competing with it.

- One invoice, one procurement. Vendor management drops from “OpenAI + AWS” to “AWS.” Legal, finance, and security review cycles compress accordingly.

- Savings Plan adjacency. While AWS has not announced a Savings Plan specifically for OpenAI tokens, Bedrock has historically introduced provisioned throughput / committed-use options for high-volume models. We expect a similar mechanism over time.

- Hidden cost: Limited Preview risk. Pricing is not yet published. Build pilots assuming on-demand rates that may move, and avoid committing to a spend forecast until AWS publishes the rate card.
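Because the rate card is unpublished, any forecast has to take rates as inputs. The sketch below shows the arithmetic for a pilot budget. Every rate and volume used with it is a made-up placeholder, and it assumes usage burns down the commitment at list price, which AWS has not confirmed.

```python
def monthly_token_cost(
    input_tokens: int,
    output_tokens: int,
    usd_per_1k_input: float,
    usd_per_1k_output: float,
) -> float:
    """On-demand cost in USD for one month of token volume."""
    return (
        input_tokens / 1000 * usd_per_1k_input
        + output_tokens / 1000 * usd_per_1k_output
    )


def commitment_share(monthly_cost_usd: float, annual_commitment_usd: float) -> float:
    """Fraction of an annual commitment consumed by 12 months at this run rate."""
    if annual_commitment_usd <= 0:
        raise ValueError("annual_commitment_usd must be positive")
    return monthly_cost_usd * 12 / annual_commitment_usd
```

For example, 200M input tokens and 50M output tokens per month at hypothetical rates of 0.005 and 0.015 USD per 1K tokens comes to about 1,750 USD per month, or roughly 2.1% of a 1M USD annual commitment. Rerun the numbers when AWS publishes real pricing.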

For broader cost discipline on Bedrock workloads, see our token budgets and model selection playbook.

What to do this quarter

Three concrete tracks, in priority order:

1. Register for the Limited Preview — properly. AWS gates preview access by account standing, region, and workload fit. Submit a request with a real workload description (token volume, region, security posture) rather than a generic “we want access.” This is exactly the kind of preparation FactualMinds runs for clients in week one.

2. Audit your existing OpenAI usage. Inventory every place an OPENAI_API_KEY lives in your code, your CI, your developer laptops, and your SaaS integrations. You will need this map the moment Bedrock OpenAI access is granted, and you will need it sooner regardless — because the moment your CFO sees that Bedrock OpenAI counts toward AWS commitments, the consolidation conversation starts.

3. Plan an agent pilot on AgentCore. Pick a single high-value, well-bounded workflow (a coding agent on a specific repo, a procurement agent on a specific catalog, a triage agent on a specific queue). Scope a 4–6 week pilot once preview access lands. Treat it as the test fixture for whether Managed Agents replace your DIY agent code, augment it, or coexist.
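For the usage audit in step 2, a simple starting point is to scan a checkout or home directory for references to `OPENAI_API_KEY`. The sketch below reports file paths and line numbers only, never the key value. It covers files on disk, not CI secret stores or SaaS integrations, which need their own inventory. The skip list is our own assumption.

```python
import os
import re
from pathlib import Path

KEY_PATTERN = re.compile(r"OPENAI_API_KEY")
# Directories skipped to keep the scan fast (an assumption; adjust as needed).
SKIP_DIRS = {".git", "node_modules", ".venv", "__pycache__"}


def find_key_references(root: str) -> list[tuple[str, int]]:
    """Return sorted (path, line number) pairs for lines mentioning OPENAI_API_KEY."""
    hits: list[tuple[str, int]] = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune skipped directories in place so os.walk does not descend into them.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            path = Path(dirpath) / name
            try:
                with open(path, "r", encoding="utf-8", errors="ignore") as handle:
                    for lineno, line in enumerate(handle, start=1):
                        if KEY_PATTERN.search(line):
                            hits.append((str(path), lineno))
            except OSError:
                continue  # unreadable file: skip rather than abort the scan
    return sorted(hits)
```

Running `find_key_references(".")` at the root of each repository gives the map you will need when planning the move to AWS credentials.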

When to wait, when to pilot

Pilot now if: you are an AWS-first enterprise, you have a regulated workload that has been waiting for an OpenAI option, your developers are already running Codex with personal keys, or you have a long-running agent workload where AWS owning the runtime would meaningfully reduce ops burden.

Wait if: your primary cloud is Azure or GCP, your workload requires the absolute latest OpenAI feature on day one, you have no AWS commitment to amortize against, or you have not yet adopted Bedrock at all (start with Knowledge Bases and Guardrails before reaching for managed agents).

A few things are still genuinely unknown as of late April 2026: which exact OpenAI model SKUs will be exposed, which AWS regions will host them at GA, the published on-demand token pricing, the SLAs around Managed Agents, and the AgentCore roadmap for authorization policies and agent discovery. Architect with abstractions (model routing layers, IAM-bounded agent identities) so you can absorb those answers as they land.
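One way to read "model routing layer" is a thin lookup between logical task names and model IDs, so that a SKU change touches configuration rather than application code. This is a minimal sketch of that idea, not a prescribed design; the class and the task names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRouter:
    """Maps logical task names to model IDs so callers never hard-code a SKU."""

    default_model_id: str
    routes: dict[str, str] = field(default_factory=dict)

    def register(self, task: str, model_id: str) -> None:
        """Point a task at a specific model ID, replacing any earlier mapping."""
        self.routes[task] = model_id

    def resolve(self, task: str) -> str:
        """Return the model ID for a task, falling back to the default."""
        return self.routes.get(task, self.default_model_id)
```

Application code would call `router.resolve("code-review")` and pass the result as the model ID. When AWS publishes the stable catalog, only the `register` calls (or the config that drives them) need to change.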

How FactualMinds helps

We are an AWS Select Tier Consulting Partner running production Bedrock deployments for healthcare, SaaS, and ecommerce clients. For the OpenAI-on-Bedrock launch specifically, we help teams with three things:

- Preview access strategy — preparing the request, the regions, the workload narrative, and the security posture AWS wants to see

- Migration from OpenAI direct — auditing existing OpenAI usage, mapping it to Bedrock equivalents, redirecting traffic without breaking applications

- Managed Agent pilots — scoping the first AgentCore-hosted agent, wiring IAM and Guardrails, instrumenting CloudTrail, and shipping in 4–6 weeks

If you are evaluating this announcement for your stack, see our AWS Bedrock consulting services or talk to our team.

Frequently asked questions

These are the most common questions we are hearing this week:

- Which OpenAI models are available on Amazon Bedrock?

- How is Codex on Bedrock different from using Codex directly?

- What are Bedrock Managed Agents powered by OpenAI?

- Does OpenAI usage on Bedrock count toward AWS commitments?

- How do Bedrock Managed Agents handle security and auditability?

- How can my team get access to the Limited Preview?

For deeper architecture questions — model selection, agent topology, cost modeling, or migration sequencing — reach out and we will scope a working session with the FactualMinds Bedrock team.

AWS Cloud Architect & AI Expert

AWS-certified cloud architect and AI expert with deep expertise in cloud migrations, cost optimization, and generative AI on AWS.