From One FIS Experiment to a Resilience Program (2026): AWS Fault Injection Service, Stop Conditions, and GameDays That Actually Change Behavior

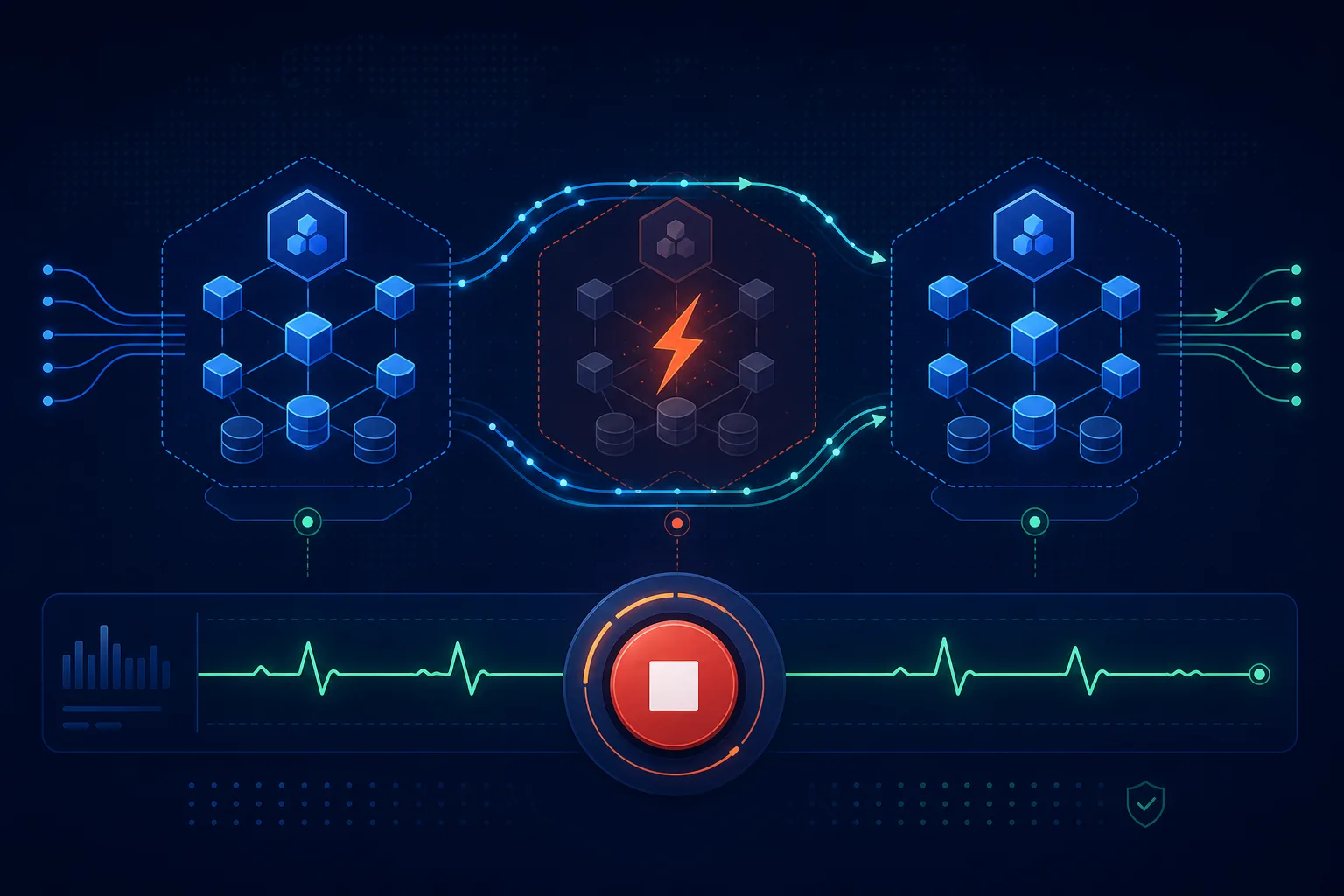

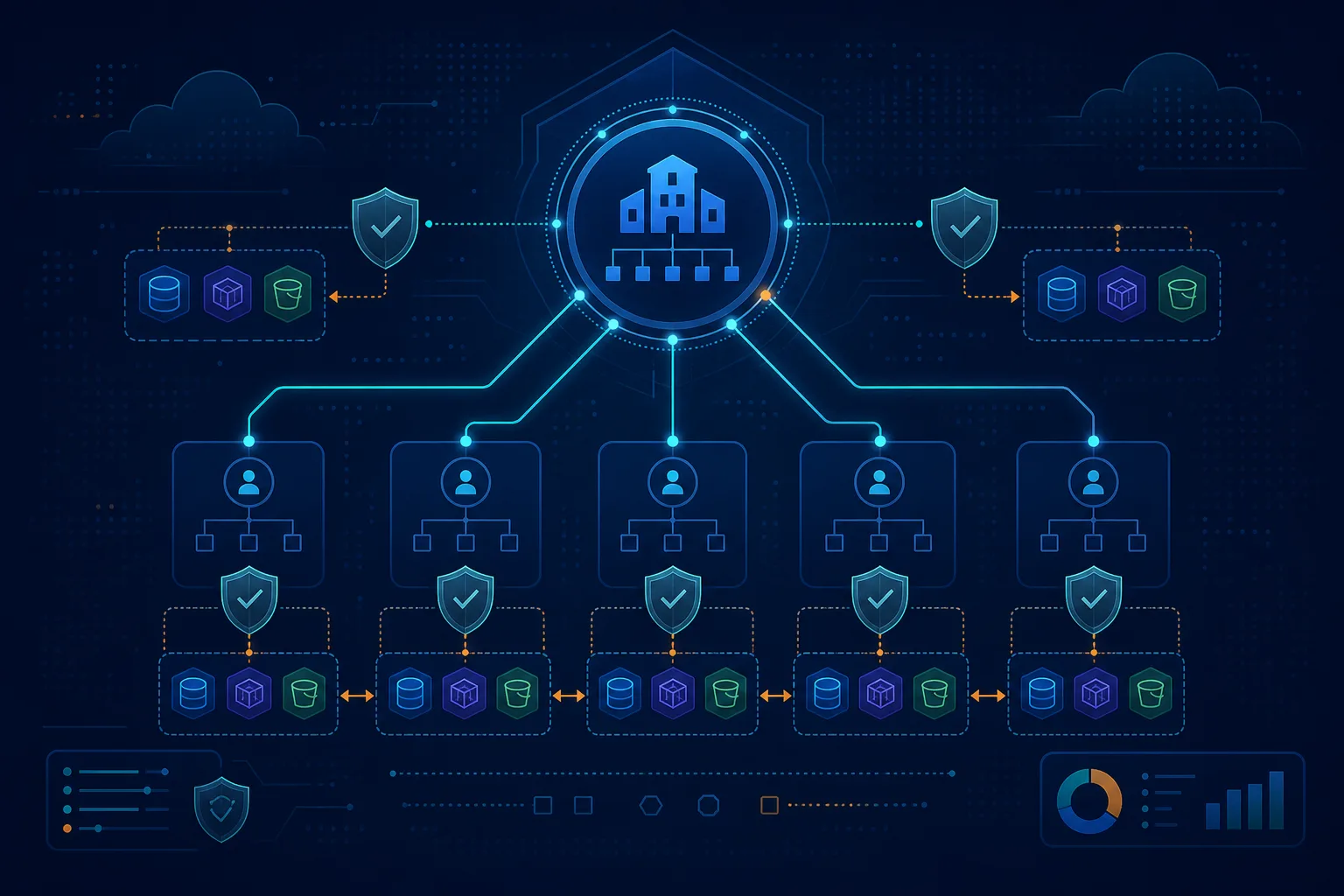

Running one AWS FIS experiment in a demo account is not chaos engineering; it is a screenshot. A program ties each experiment to an SLO, scopes blast radius with resource tags, halts automatically on CloudWatch alarm stop conditions, schedules recurring runs via EventBridge, and closes the loop by re-testing the fix. The FIS Scenario Library now includes the "AZ Availability: Power Interruption" and "Cross-Region: Connectivity" scenarios. Here is the L0→L3 maturity matrix, a GameDay runbook, and a stop-condition-wired experiment skeleton.
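As a rough illustration of what "tag-scoped and stop-condition-wired" means in practice, the sketch below builds a request body shaped for the FIS `CreateExperimentTemplate` API. It is a minimal example under stated assumptions, not a production template: the helper name `build_experiment_template`, the `chaos-ready=true` tag, the `program` tag, and both ARNs are hypothetical placeholders, and the single `aws:ec2:stop-instances` action stands in for whatever fault your hypothesis calls for.

```python
import json
import uuid


def build_experiment_template(alarm_arn: str, role_arn: str,
                              tag_key: str, tag_value: str) -> dict:
    """Return a dict shaped for FIS CreateExperimentTemplate (hypothetical helper)."""
    return {
        "clientToken": str(uuid.uuid4()),  # idempotency token
        "description": "Stop one tagged EC2 instance; halt if the SLO alarm fires",
        "roleArn": role_arn,
        # Stop condition: FIS stops the experiment when this alarm is in ALARM state.
        "stopConditions": [
            {"source": "aws:cloudwatch:alarm", "value": alarm_arn},
        ],
        # Blast radius: only instances carrying the tag, and only one of them.
        "targets": {
            "tagged-instances": {
                "resourceType": "aws:ec2:instance",
                "resourceTags": {tag_key: tag_value},
                "selectionMode": "COUNT(1)",
            }
        },
        "actions": {
            "stop-instance": {
                "actionId": "aws:ec2:stop-instances",
                # Restart the instance after five minutes (ISO 8601 duration).
                "parameters": {"startInstancesAfterDuration": "PT5M"},
                "targets": {"Instances": "tagged-instances"},
            }
        },
        "tags": {"program": "resilience"},  # assumed tag, for illustration only
    }


if __name__ == "__main__":
    # Both ARNs are placeholders; substitute your own alarm and IAM role.
    template = build_experiment_template(
        alarm_arn="arn:aws:cloudwatch:us-east-1:111122223333:alarm:checkout-slo-burn",
        role_arn="arn:aws:iam::111122223333:role/fis-experiment-role",
        tag_key="chaos-ready",
        tag_value="true",
    )
    print(json.dumps(template, indent=2))
    # With boto3 installed and credentials configured, the same dict could be
    # passed as: boto3.client("fis").create_experiment_template(**template)
```

Building the template as plain data keeps it reviewable in a pull request before anything touches an account. The alarm in the stop condition should be the same one that tracks the SLO the experiment is meant to probe.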