Event-Driven Boundaries on AWS: Async vs Sync, Amazon MSK vs Amazon MQ (RabbitMQ), and When SQS Wins

Quick summary: Standard SQS queues support a nearly unlimited number of transactions per second per API action (AWS-documented), while FIFO queues without high-throughput mode cap at 300 transactions per second per API action, or 3,000 messages per second when every call batches 10 messages. Your May 2026 architecture review should start from those numbers, not from Kafka slogans.

Key Takeaways

- On May 8, 2026, the cheapest mental model for AWS buyers is still: sync paths spend latency budget for certainty; async paths spend operational complexity for resilience

- This post maps “Kafka vs RabbitMQ” debates to Amazon MSK and Amazon MQ (RabbitMQ engine)—and shows where neither belongs because SQS or EventBridge already matches the coupling you need

- Reproduce this: inspect redrive policies and visibility timeouts with examples/architecture-blog-2026/event-driven/inspect-sqs-redrive.sh (AWS CLI v2.25+, jq installed)

- Sync vs async decision sharp edges: stay synchronous when the caller must branch UX on the outcome now (form validation, or payment authorization that must complete synchronously under PCI constraints)


On May 8, 2026, the cheapest mental model for AWS buyers is still: sync paths spend latency budget for certainty; async paths spend operational complexity for resilience. AWS documents that standard SQS queues support a nearly unlimited number of transactions per second per API action (the service scales horizontally behind the queue), while FIFO queues without high-throughput mode support 300 transactions per second per API action (SendMessage, ReceiveMessage, DeleteMessage), which batching up to 10 messages per call stretches to 3,000 messages per second. If your backlog math ignores that ceiling, capacity reviews fail in the boring way.
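To make the backlog math concrete, here is a minimal sketch of FIFO drain time. It assumes the non-high-throughput quotas quoted above (300 calls per second per action, 10 messages per batch) as planning constants; verify current quotas for your Region before relying on the numbers.

```python
import math

# Planning assumptions: FIFO queue without high-throughput mode.
# Verify against current SQS quotas for your Region.
FIFO_CALLS_PER_SECOND = 300
MAX_BATCH_SIZE = 10


def fifo_drain_seconds(backlog: int, arrival_per_second: float,
                       batch_size: int = MAX_BATCH_SIZE,
                       calls_per_second: int = FIFO_CALLS_PER_SECOND) -> float:
    """Seconds to drain a FIFO backlog while new messages keep arriving.

    Returns math.inf when arrivals meet or exceed the consume ceiling,
    meaning the backlog never shrinks.
    """
    if not 1 <= batch_size <= MAX_BATCH_SIZE:
        raise ValueError("SQS batch calls carry 1 to 10 messages")
    ceiling = calls_per_second * batch_size  # messages per second
    headroom = ceiling - arrival_per_second
    if headroom <= 0:
        return math.inf
    return backlog / headroom


# 1M-message backlog, 1,000 msg/s still arriving, full 10-message batches.
print(fifo_drain_seconds(1_000_000, 1_000))  # 500.0
# Same backlog without batching: 300 msg/s never catches up.
print(fifo_drain_seconds(1_000_000, 1_000, batch_size=1))  # inf
```

Real drain rates are also bounded by consumer concurrency and by how many message groups you have, because FIFO delivers each group's messages in order; treat this as an upper bound.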

This post maps “Kafka vs RabbitMQ” debates to Amazon MSK and Amazon MQ (RabbitMQ engine)—and shows where neither belongs because SQS or EventBridge already matches the coupling you need.

Reproduce this: inspect redrive policies and visibility timeouts with examples/architecture-blog-2026/event-driven/inspect-sqs-redrive.sh (AWS CLI v2.25+, jq installed).

Sync vs async: decision sharp edges

Stay synchronous when:

- The caller must branch UX on the outcome now (form validation, or payment authorization that must complete synchronously under PCI constraints).

- The total latency of the synchronous fan-out fits inside your API Gateway or ALB timeout with margin (API Gateway REST APIs default to a 29-second integration timeout).
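A quick way to test the "fits the timeout envelope" condition is sketched below. It assumes you have measured p99 latencies per downstream call (the numbers here are hypothetical), that calls within a stage run in parallel, and that stages run one after another. Summing p99s and taking the max per stage is a rough envelope check, not a statistical latency model.

```python
# Each stage is a list of downstream calls issued in parallel; stages run
# sequentially. Values are p99 latencies in milliseconds (hypothetical).
def fanout_p99_ms(stages: list[list[float]]) -> float:
    # A parallel stage costs its slowest call; sequential stages add up.
    return sum(max(stage) for stage in stages if stage)


def fits_sync_budget(stages: list[list[float]], timeout_ms: float = 29_000,
                     margin: float = 0.25) -> bool:
    # Reserve `margin` of the timeout for retries, cold starts, and jitter.
    return fanout_p99_ms(stages) <= timeout_ms * (1 - margin)


print(fits_sync_budget([[120], [800, 4_000], [300]]))  # True
print(fits_sync_budget([[9_000], [14_000]]))           # False
```

If the second shape describes your request path, that is the signal to push the slow leg behind a queue rather than raise the timeout.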

Go asynchronous when:

- Downstream variance is high (ML inference, partner batch APIs).

- You need absorption (spiky producers, throttled consumers).

- You already proved idempotency—duplicate delivery is a when, not an if.
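Proving idempotency usually means keying every side effect. The sketch below shows the shape of an idempotent consumer; the `idempotency_key` field is a hypothetical producer-assigned attribute, and the in-memory set stands in for a durable store such as a DynamoDB table written with a conditional `attribute_not_exists` check.

```python
from typing import Callable


class IdempotentHandler:
    """Apply each message's side effect at most once per idempotency key.

    The in-memory set is a stand-in for a durable store; in production the
    key record and the side effect should commit in the same unit of work.
    """

    def __init__(self, apply: Callable[[dict], None]):
        self._apply = apply
        self._seen: set[str] = set()

    def handle(self, message: dict) -> bool:
        key = message["idempotency_key"]  # hypothetical field name
        if key in self._seen:
            return False  # duplicate delivery: acknowledge, do nothing
        self._apply(message)
        self._seen.add(key)  # record only after the side effect succeeds
        return True
```

Usage: a redelivered copy of the same adjustment is acknowledged but not applied twice.

```python
ledger = []
handler = IdempotentHandler(lambda m: ledger.append(m["amount"]))
msg = {"idempotency_key": "adj-001", "amount": 25}
handler.handle(msg)        # True
handler.handle(dict(msg))  # False
```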

Opinionated take — If your “event-driven architecture” is still “Lambda calling Lambda synchronously through SDK invocations,” you built a distributed monolith with extra latency. Promote boundaries to queues or buses; keep the synchronous graph shallow.

Kafka (MSK) vs RabbitMQ (Amazon MQ) vs SQS

We already compared Kinesis Data Streams vs MSK in depth (streaming platform guide). Use that when bytes-per-second and stream retention dominate.

Choose Amazon MSK when:

- You need Kafka protocol compatibility (existing clients, Kafka Connect, transactions on brokers where supported).

- Stream log retention and consumer group mechanics are first-class requirements.

Choose Amazon MQ for RabbitMQ when:

- Teams standardize on AMQP, priority queues, or routing semantics (exchanges and bindings) that map naturally to RabbitMQ.

- You are migrating an on-prem broker with minimal client rewrites.
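"Routing semantics that map naturally to RabbitMQ" often means topic exchanges. As an illustration, here is a local re-implementation of the documented topic-binding rules (dot-separated words, `*` matches exactly one word, `#` matches zero or more); it is not the broker's code, just a way to check binding patterns during a migration review.

```python
from functools import lru_cache


def topic_matches(binding_pattern: str, routing_key: str) -> bool:
    """RabbitMQ-style topic matching: '*' is one word, '#' is zero or more."""
    pattern = binding_pattern.split(".")
    key = routing_key.split(".")

    @lru_cache(maxsize=None)
    def match(i: int, j: int) -> bool:
        if i == len(pattern):
            return j == len(key)
        if pattern[i] == "#":
            # Let '#' swallow 0..n of the remaining words.
            return any(match(i + 1, k) for k in range(j, len(key) + 1))
        if j == len(key):
            return False
        if pattern[i] in ("*", key[j]):
            return match(i + 1, j + 1)
        return False

    return match(0, 0)


print(topic_matches("orders.*.created", "orders.eu.created"))       # True
print(topic_matches("orders.*.created", "orders.eu.west.created"))  # False
```

If your existing bindings lean heavily on patterns like these, SNS filter policies or EventBridge rules will need a deliberate translation rather than a lift-and-shift.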

Choose SQS (+ SNS/EventBridge) when:

- You need managed fan-out without caring about broker protocol details.

- A dead-letter queue with redrive (per-message receive counts checked against the queue's maxReceiveCount) solves most failure handling (SQS production patterns).
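The redrive behavior behind that last bullet can be modeled in a few lines. This is a simplified, single-message simulation, not SQS itself: a failed attempt leaves the message undeleted, it reappears after the visibility timeout, and once its receive count would exceed `maxReceiveCount`, SQS moves it to the dead-letter queue.

```python
from typing import Callable


def deliver_until_settled(handler: Callable[[str], None], body: str,
                          max_receive_count: int) -> tuple[int, str]:
    """Model one message under an SQS redrive policy.

    Returns (deliveries, outcome). Each failure leaves the message undeleted,
    so it becomes visible again and its receive count grows; after
    max_receive_count deliveries the next receive would exceed the limit,
    so the message is moved to the DLQ instead.
    """
    receive_count = 0
    while receive_count < max_receive_count:
        receive_count += 1
        try:
            handler(body)
        except Exception:
            continue  # not deleted: visible again after the visibility timeout
        return receive_count, "deleted"
    return receive_count, "moved-to-dlq"
```

A poison message burns exactly `max_receive_count` deliveries before parking in the DLQ, which is why a low value there trades retry tolerance for faster isolation.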

What broke: a team moved a Kafka-shaped "commands" topic onto SQS standard queues, which make no ordering guarantees, assuming partition keys magically carried over. Side effects executed out of order; financial adjustments diverged. Fix: move to FIFO queues with a message group ID per ordering key (plus deduplication IDs), or stay on MSK, where the broker enforces ordering within a partition.
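A minimal sketch of the FIFO side of that fix, assuming the ordering key is an account ID and each command carries a unique command ID (both hypothetical fields). It only builds keyword arguments for boto3's `send_message`, so it runs without AWS credentials.

```python
import hashlib
import json


def build_fifo_send_kwargs(queue_url: str, account_id: str,
                           command_id: str, payload: dict) -> dict:
    """Keyword arguments for boto3 SQS send_message on a FIFO queue.

    account_id and command_id are hypothetical: the account is the ordering
    key, the command ID is the business-level duplicate key.
    """
    if not queue_url.endswith(".fifo"):
        raise ValueError("FIFO queue names end in .fifo")
    return {
        "QueueUrl": queue_url,
        "MessageBody": json.dumps(payload, sort_keys=True),
        # Same group ID: delivered in send order. Different groups can be
        # consumed in parallel.
        "MessageGroupId": account_id,
        # A repeat send with the same ID is accepted but not delivered during
        # the 5-minute deduplication interval.
        "MessageDeduplicationId": hashlib.sha256(command_id.encode()).hexdigest(),
    }
```

Usage: `boto3.client("sqs").send_message(**build_fifo_send_kwargs(url, "acct-42", "cmd-7", {"amount": 10}))`. Grouping by account keeps each account's adjustments ordered without serializing the whole queue; the deduplication window does not replace consumer-side idempotency for retries that arrive later.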

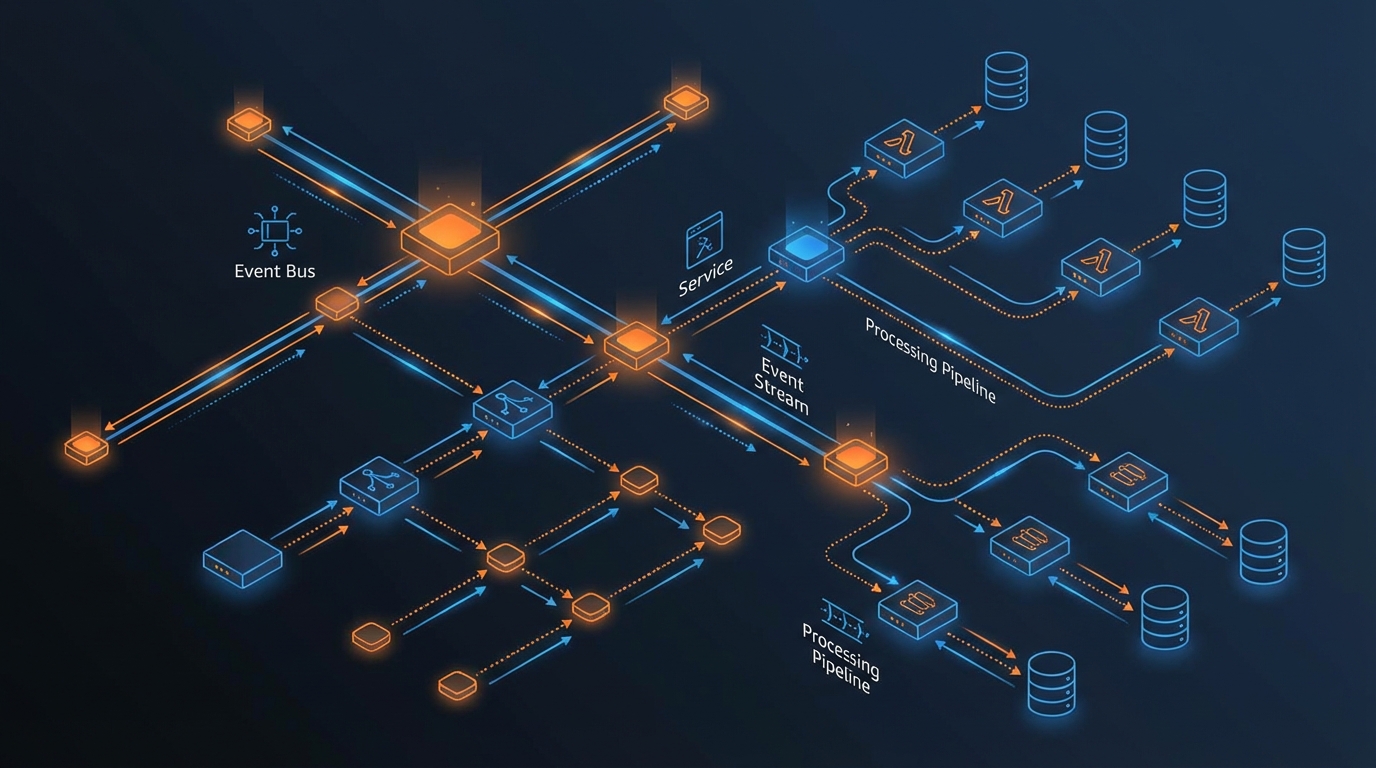

EventBridge and “domain buses”

EventBridge excels at discovery-friendly routing (rules, archival, replay with appropriate controls). Pair it with our EventBridge architecture patterns before inventing a bespoke bus topology.

Failure mode: wildcard rules that turn a bus into an accidental fan-out grenade—cap concurrency at targets and measure blast radius with cloud cost alarms.
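Measuring blast radius starts with knowing how many rules a single event triggers. The sketch below re-implements a small subset of EventBridge pattern matching (exact values and `{"prefix": ...}` filters on nested objects; no array-valued event fields, numeric, `anything-but`, `exists`, or `wildcard` filters) so you can count fan-out offline. The rule names are hypothetical; for authoritative answers, use the EventBridge `TestEventPattern` API.

```python
def _value_matches(condition, value) -> bool:
    # Supported subset: exact values and {"prefix": "..."} string filters.
    if isinstance(condition, dict) and "prefix" in condition:
        return isinstance(value, str) and value.startswith(condition["prefix"])
    return condition == value


def pattern_matches(pattern: dict, event: dict) -> bool:
    for field, expected in pattern.items():
        if field not in event:
            return False
        value = event[field]
        if isinstance(expected, dict):  # nested object such as "detail"
            if not isinstance(value, dict) or not pattern_matches(expected, value):
                return False
        elif not any(_value_matches(cond, value) for cond in expected):
            return False
    return True


def matching_rules(rules: dict[str, dict], event: dict) -> list[str]:
    """Names of the rules an event would trigger: its fan-out on this bus."""
    return sorted(name for name, pattern in rules.items()
                  if pattern_matches(pattern, event))


rules = {
    "billing-audit": {"source": ["com.example.billing"]},
    "catch-all-example": {"source": [{"prefix": "com.example"}]},
    "order-failures": {"source": ["com.example.orders"],
                       "detail": {"status": ["FAILED"]}},
}
event = {"source": "com.example.orders", "detail-type": "OrderUpdated",
         "detail": {"status": "FAILED"}}
print(matching_rules(rules, event))  # ['catch-all-example', 'order-failures']
```

The prefix rule is the grenade: it matches every event from every `com.example` producer, so its target's concurrency limit is the one to cap first.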

Real-time pipelines: Kinesis + Lambda mental hook

When you need stream processing with Lambda consumers, our Kinesis + Lambda + DynamoDB pipeline illustrates throughput thinking end-to-end; use it as the bridge between messaging and near-real-time analytics.
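The core of that throughput thinking fits in two functions. This sketch assumes provisioned-mode Kinesis Data Streams write limits of 1 MiB/s and 1,000 records/s per shard, and a Lambda event source mapping whose `ParallelizationFactor` (1 to 10) sets concurrent batches per shard; on-demand streams and enhanced fan-out change the picture, so verify current quotas.

```python
import math

# Provisioned-mode write limits per shard (verify current quotas).
WRITE_MIB_PER_SHARD = 1.0
WRITE_RECORDS_PER_SHARD = 1_000


def shards_for_writes(mib_per_second: float, records_per_second: float) -> int:
    # Whichever limit binds first sets the shard count.
    return max(1,
               math.ceil(mib_per_second / WRITE_MIB_PER_SHARD),
               math.ceil(records_per_second / WRITE_RECORDS_PER_SHARD))


def max_lambda_concurrency(shards: int, parallelization_factor: int = 1) -> int:
    # The event source mapping processes up to `parallelization_factor`
    # batches from each shard at the same time.
    if not 1 <= parallelization_factor <= 10:
        raise ValueError("ParallelizationFactor must be between 1 and 10")
    return shards * parallelization_factor


shards = shards_for_writes(5.5, 3_000)
print(shards, max_lambda_concurrency(shards, 4))  # 6 24
```

The second number is what your downstream table or API has to absorb, which is where the DynamoDB capacity discussion in that pipeline post picks up.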

Orchestration: Step Functions as the adult in the room

Long-running sagas with explicit compensation should not live in “while loops around SQS.” Read Step Functions workflow patterns and model human approvals or external waits as first-class states.
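The control flow a saga needs is small; the point of Step Functions is making it durable and visible. Here is a plain-Python illustration of compensation order, not Step Functions code: each step pairs an action with a compensation, and a failure undoes completed steps in reverse. In a state machine you would express each step as a Task state with a `Catch` routing to compensation states. Step names are hypothetical.

```python
from typing import Callable

Step = tuple[str, Callable[[], None], Callable[[], None]]  # (name, action, compensate)


def run_saga(steps: list[Step]) -> tuple[str, list[str]]:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: list[tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception:
            undone = []
            for done_name, done_compensate in reversed(completed):
                done_compensate()
                undone.append(done_name)
            return "compensated", undone
        completed.append((name, compensate))
    return "committed", []
```

A production workflow also needs retries on the compensations themselves and a durable record of progress, which is exactly what a "while loop around SQS" tends to lose.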

What This Post Doesn’t Cover

- MQTT workloads → IoT Core diverges from MSK/MQ mental models; our IoT MQTT scaling patterns are the better rabbit hole.

- Exactly-once semantics fantasies—AWS services expose at-least-once defaults; design idempotency keys everywhere.

If You Only Do One Thing

Write the failure-mode paragraph on every async handoff: duplicate delivery, poison messages, and visibility timeout mishaps—before you paste architecture diagrams into slide decks.

What to Do This Week

- Export SQS attributes (visibility, DLQ, retention) for top 10 queues; fix any missing redrive policies on production workloads processing money.

- Re-read the MSK vs Kinesis decision guide with your actual RPS + retention + consumer list—not the vendor you prefer.

- Add EventBridge rule cardinality review to your change template (producer team + consumer service + max Lambda concurrency).
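For the first item, a minimal audit sketch. It assumes the input is the `Attributes` map from `aws sqs get-queue-attributes --attribute-names All` (SQS returns every value as a string, and `RedrivePolicy` as a JSON string), and it applies AWS's Lambda guidance that a source queue's visibility timeout be at least six times the function timeout. The function timeout is a hypothetical input you look up per consumer.

```python
import json
from typing import Optional

# Lambda guidance for SQS event sources: visibility >= 6x function timeout.
VISIBILITY_TO_FUNCTION_TIMEOUT_RATIO = 6


def audit_queue(attributes: dict[str, str],
                function_timeout_s: Optional[int] = None) -> list[str]:
    """Flag redrive and visibility gaps in one queue's attribute map."""
    findings = []
    raw_policy = attributes.get("RedrivePolicy")
    if not raw_policy:
        findings.append("no RedrivePolicy: failures never reach a DLQ")
    elif "deadLetterTargetArn" not in json.loads(raw_policy):
        findings.append("RedrivePolicy lacks deadLetterTargetArn")
    visibility = int(attributes.get("VisibilityTimeout", "30"))  # SQS default: 30 s
    if (function_timeout_s is not None
            and visibility < VISIBILITY_TO_FUNCTION_TIMEOUT_RATIO * function_timeout_s):
        findings.append(f"VisibilityTimeout {visibility}s is under 6x the "
                        f"{function_timeout_s}s function timeout")
    return findings
```

Run it over the saved CLI output for each of the ten queues; any queue that touches money and returns a non-empty list goes on this week's fix list.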

For ingress scale interactions (ALB + Lambda), tie back to our guide on ingress, load balancing, and elastic scale.

AWS Cloud Architect & AI Expert

AWS-certified cloud architect and AI expert with deep expertise in cloud migrations, cost optimization, and generative AI on AWS.