Production Resilience on AWS: Timeouts, Retries With Jitter, Circuit Limits, and Graceful Shutdown

Quick summary: API Gateway REST integrations time out at 29 seconds by default (Regional and private REST APIs can request a higher limit). If your Lambda keeps retrying a 35-second partner HTTP call without a bounded circuit, you burn capacity and duplicate side effects instead of failing fast.

Key Takeaways

- Anchor with two hard AWS numbers every review should paste into runbooks:

- API Gateway REST integration timeout: 29 seconds (design partner calls accordingly; see HTTP vs WebSocket field notes)

- SQS visibility timeout must exceed handler p99 or you amplify duplicates; our SQS production patterns cover DLQs and redrive math

- Reproduce this: run the Node 22 jitter reference (examples/architecture-blog-2026/resilience/backoff-jitter.mjs)

As of May 8, 2026, the dominant failure mode in resilient systems is still unbounded optimism: retries without jitter, timeouts longer than upstream gateways permit, and Lambdas that treat SQS at-least-once delivery as if it were an exactly-once SQL transaction.

Anchor with two hard AWS numbers every review should paste into runbooks:

- API Gateway REST integration timeout: 29 seconds (design partner calls accordingly—see HTTP vs WebSocket field notes).

- SQS visibility timeout must exceed handler p99 or you amplify duplicates—our SQS production patterns cover DLQs and redrive math.

Reproduce this: run the Node 22 jitter reference at examples/architecture-blog-2026/resilience/backoff-jitter.mjs (node backoff-jitter.mjs).

Timeouts: orchestrate end-to-end

Timeouts should nest: outer customer-facing deadline > inner dependency budget. If the inner budgets sum to nearly the outer limit, one slow dependency pushes the whole request past its deadline, and all you end up measuring is cascading cancelation storms.
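The nesting rule can be sketched as a deadline budget that every inner call derives from the outer deadline. This is a minimal sketch, not code from the referenced repository: the names innerBudgetMs and callWithBudget, the 500 ms reserve, and the 5-second per-call cap are all illustrative assumptions. It relies on the global fetch and AbortSignal.timeout available in Node 22.

```javascript
// Hypothetical helper: time an inner call may still use, given the outer deadline.
// reserveMs keeps headroom for serialization, logging, and the response trip.
function innerBudgetMs(deadlineEpochMs, nowMs, reserveMs = 500) {
  return Math.max(0, deadlineEpochMs - nowMs - reserveMs);
}

// Wraps fetch with an abort signal so the dependency cannot outlive its budget.
// capMs is an assumed per-dependency ceiling, independent of the outer deadline.
async function callWithBudget(url, deadlineEpochMs, { capMs = 5000, reserveMs = 500 } = {}) {
  const budget = Math.min(capMs, innerBudgetMs(deadlineEpochMs, Date.now(), reserveMs));
  if (budget <= 0) {
    // Fail fast instead of starting work the caller can no longer wait for.
    throw new Error('deadline budget exhausted');
  }
  return fetch(url, { signal: AbortSignal.timeout(budget) });
}
```

For a route behind API Gateway, the outer deadline would typically be set a few seconds under the 29-second integration timeout, for example Date.now() + 25000, and passed down to every outbound call in the handler.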

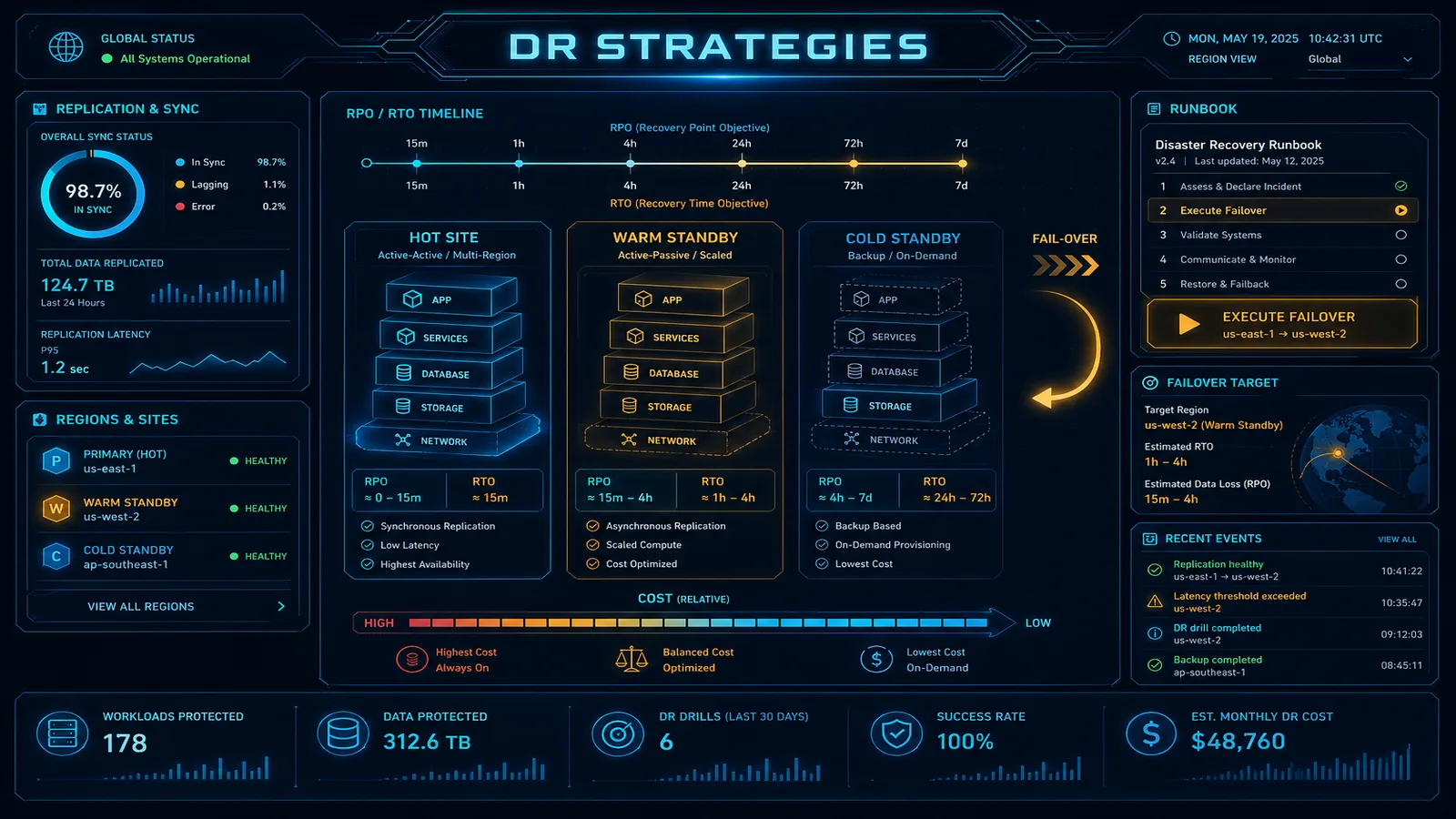

ECS / ALB: align target group deregistration delay with connection drain so new tasks accept traffic before old tasks lose membership. Failure to align produces 502 spikes during deploys—the same class of incident we discuss across disaster recovery thinking when rehearsing failover choreography.

Retries: exponential backoff + full jitter

AWS SDKs expose retry modes: AWS SDK for JavaScript v3 supports standard and adaptive, while some other SDKs (boto3, for example) also keep a legacy mode. Know your defaults per runtime. Supplement with application-level idempotency keys for mutating routes.

Opinionated take — Prefer full jitter for thundering herd mitigation on user-visible retries; reserve deterministic backoff only when replays must be auditable bit-for-bit (rare).
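A minimal sketch of exponential backoff with full jitter follows. It is not the repository's backoff-jitter.mjs; the function names, the 100 ms base, the 5-second cap, and the four-attempt limit are assumptions chosen for illustration. Each delay is drawn uniformly between zero and the capped exponential ceiling, which is what spreads retrying clients apart.

```javascript
// Full jitter: delay is uniform in [0, min(capMs, baseMs * 2^attempt)).
// `random` is injectable so the function can be tested deterministically.
function fullJitterDelayMs(attempt, { baseMs = 100, capMs = 5000, random = Math.random } = {}) {
  const ceiling = Math.min(capMs, baseMs * 2 ** attempt);
  return Math.floor(random() * ceiling);
}

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Calls fn up to maxAttempts times, sleeping a jittered delay between failures.
// The last error is rethrown once the attempts are used up.
async function retryWithFullJitter(fn, { maxAttempts = 4, ...backoff } = {}) {
  let lastError;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await fn(attempt);
    } catch (err) {
      lastError = err;
      if (attempt < maxAttempts - 1) {
        await sleep(fullJitterDelayMs(attempt, backoff));
      }
    }
  }
  throw lastError;
}
```

Only wrap calls that are safe to repeat: a mutating request needs an idempotency key before it goes through retryWithFullJitter, and the total of all delays still has to fit inside the caller's deadline.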

Circuit breaking without lying to dashboards

Implement breakers at egress clients (HTTP to partners, cross-region calls) with:

- Open state duration tied to dependency recovery SLAs.

- Half-open probes limited to canary traffic or synthetic checks, not full user blast.

Without half-open discipline, the breaker flip-flops: it reopens to full traffic, overloads the still-recovering dependency, and trips again.
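The states described above can be sketched as a small breaker that admits exactly one probe while half-open. This is an illustrative sketch under assumptions: the class name, the consecutive-failure threshold, and the 30-second open duration are hypothetical, and a production breaker would more likely track an error rate over a window than a simple counter.

```javascript
class CircuitBreaker {
  constructor({ failureThreshold = 5, openMs = 30000, now = Date.now } = {}) {
    this.failureThreshold = failureThreshold;
    this.openMs = openMs; // tie this to the dependency's recovery SLA
    this.now = now;       // injectable clock for tests
    this.state = 'closed';
    this.failures = 0;
    this.openedAt = 0;
    this.probeInFlight = false;
  }

  // Returns true if the caller may send a request right now.
  allowRequest() {
    if (this.state === 'open' && this.now() - this.openedAt >= this.openMs) {
      this.state = 'half-open';
      this.probeInFlight = false;
    }
    if (this.state === 'closed') return true;
    if (this.state === 'half-open' && !this.probeInFlight) {
      this.probeInFlight = true; // a single probe, not the full user blast
      return true;
    }
    return false;
  }

  recordSuccess() {
    this.state = 'closed';
    this.failures = 0;
    this.probeInFlight = false;
  }

  recordFailure() {
    this.failures += 1;
    // A failed probe reopens immediately; otherwise wait for the threshold.
    if (this.state === 'half-open' || this.failures >= this.failureThreshold) {
      this.state = 'open';
      this.openedAt = this.now();
      this.probeInFlight = false;
    }
  }
}
```

Callers check allowRequest() before each outbound call and report the outcome with recordSuccess() or recordFailure(). Exposing the state field to metrics keeps dashboards honest about when traffic was being shed rather than served.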

Graceful shutdown: Lambda vs containers

Lambda: long CPU work should checkpoint externally; assume invocations can end between batches.

ECS/Fargate: respect stopTimeout in task definitions; ensure your process traps SIGTERM, stops accepting new connections, and drains in-flight requests before exit.

What broke: a Node service had no graceful SIGTERM handler and exited immediately, while the ALB kept forwarding requests to the draining tasks for 20s. Clients saw bursty 502s exactly during happy-hour deploys. Fix: HTTP server close() + health endpoint flip + deregistration delay aligned to measured drain time.
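The fix can be sketched as a shutdown handler that flips health first, then closes the server, with a timer as a safety net. This is a sketch under assumptions, not the service's actual code: createShutdownHandler, the status object, and the 20-second drain budget are hypothetical names and values, and the server argument is assumed to behave like a Node http.Server (close(callback) fires once in-flight requests finish).

```javascript
// Hypothetical wiring: `server` is any object with close(callback),
// for example the result of http.createServer(...).
function createShutdownHandler(server, { drainTimeoutMs = 20000, exit = (code) => process.exit(code) } = {}) {
  const status = { healthy: true, shuttingDown: false };

  function shutdown() {
    if (status.shuttingDown) return; // ignore repeated signals
    status.shuttingDown = true;
    status.healthy = false; // the health endpoint should now answer 503

    // Safety net: exit non-zero if draining exceeds the budget.
    const timer = setTimeout(() => exit(1), drainTimeoutMs);
    if (typeof timer.unref === 'function') timer.unref();

    // Stop accepting new connections; the callback runs after in-flight requests end.
    server.close(() => {
      clearTimeout(timer);
      exit(0);
    });
  }

  return { status, shutdown };
}

// Usage (assumes an existing http.Server named `server`):
//   const { status, shutdown } = createShutdownHandler(server);
//   process.on('SIGTERM', shutdown);
//   // in the health route: res.writeHead(status.healthy ? 200 : 503).end();
```

The drain budget should sit below the task definition's stopTimeout, since ECS sends SIGKILL once that expires, and the deregistration delay should be sized from the drain time you actually measure.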

Pair with orchestration

If retries span multiple services with compensations, model the saga in Step Functions instead of embedding three nested retry policies in Lambda.

What This Post Doesn’t Cover

- Chaos engineering catalog execution—pair with observability drills separately.

- Network partitions inside VPC peers—requires topology-specific MTU and path diagnostics.

If You Only Do One Thing

Log retry attempt count, breaker state, and deadline budget remaining per outbound call—without those fields, postmortems stay astrology.
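As a rough illustration, one structured record per outbound attempt might look like the sketch below. The function name and field names are assumptions, not an established schema; adapt them to whatever your log pipeline already indexes.

```javascript
// Builds one structured log record per outbound call attempt.
// All field names here are illustrative.
function outboundCallLog({ target, attempt, breakerState, deadlineEpochMs, nowMs = Date.now() }) {
  return {
    event: 'outbound_call',
    target,
    retry_attempt: attempt,
    breaker_state: breakerState,
    deadline_remaining_ms: Math.max(0, deadlineEpochMs - nowMs),
  };
}

// Example: second attempt against a partner API with the breaker closed.
console.log(JSON.stringify(outboundCallLog({
  target: 'partner-api',
  attempt: 2,
  breakerState: 'closed',
  deadlineEpochMs: Date.now() + 12000,
})));
```

Emitting the record before the call, and again with the outcome after it, lets a postmortem reconstruct how much budget each retry consumed.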

What to Do This Week

- Grep code for maxAttempts / retry blocks; ensure each mutating call has idempotency keys in DynamoDB or a token table.

- Validate ECS stopTimeout ≥ measured graceful shutdown + ALB deregistration padding.

- Add dashboards for AWS SDK throttle counters (ThrottlingException) next to downstream p95.
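For the idempotency-key item in the list above, the core behavior can be sketched with an in-memory stand-in for the token table. The IdempotencyStore class and its runOnce method are hypothetical; in DynamoDB the claim step would be a conditional write that succeeds only when the key does not exist yet, and the Map here does not survive restarts or span processes.

```javascript
// In-memory stand-in for a token table, for illustration only.
class IdempotencyStore {
  constructor() {
    this.results = new Map();
  }

  // Runs `operation` at most once per key; replays get the stored result.
  async runOnce(key, operation) {
    if (this.results.has(key)) return this.results.get(key);
    const pending = Promise.resolve().then(operation);
    // Claim the key before awaiting so concurrent retries share one run.
    this.results.set(key, pending);
    try {
      return await pending;
    } catch (err) {
      // A failed attempt releases the key so a later retry can run.
      this.results.delete(key);
      throw err;
    }
  }
}
```

A retried request that carries the same key then returns the first result instead of repeating the side effect, which is what makes a mutating call safe to put behind maxAttempts.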

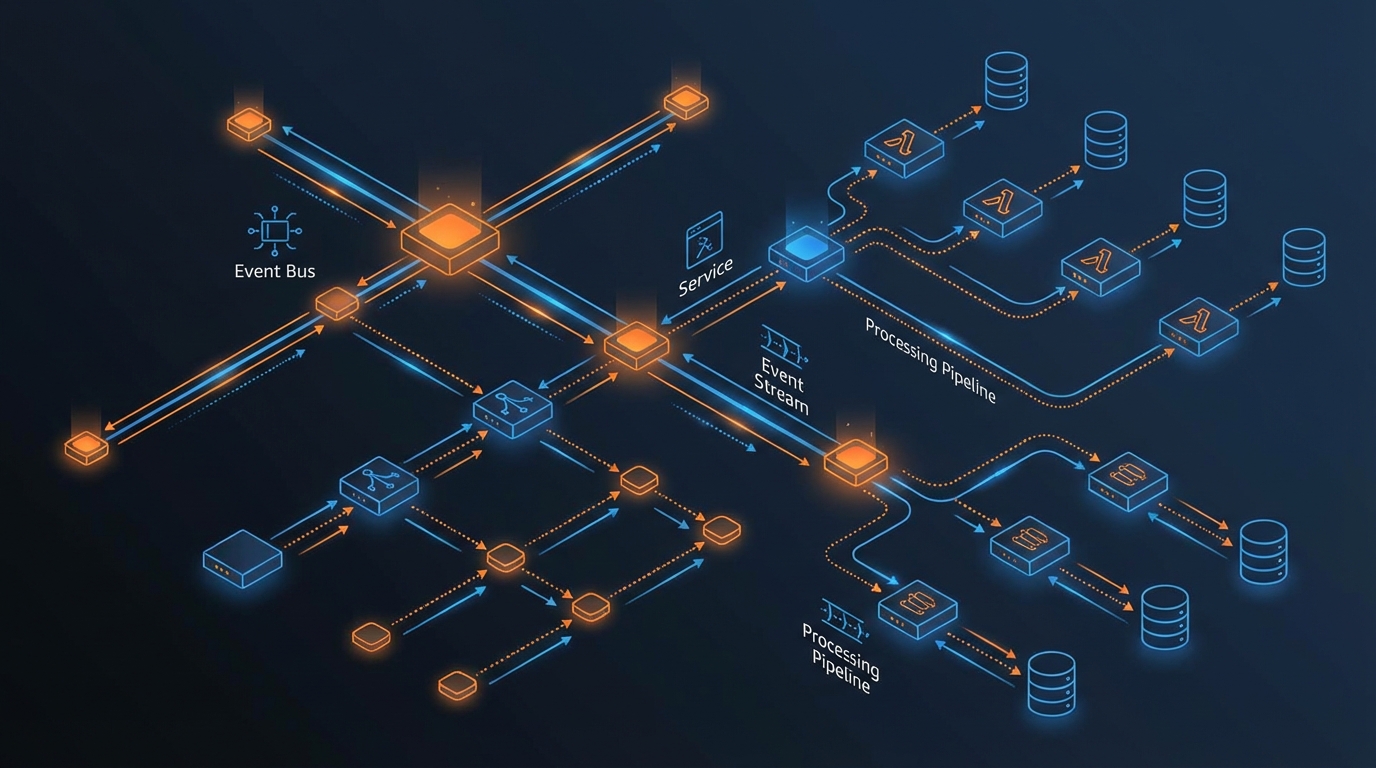

Cross-link: async absorption patterns in event-driven boundaries.
