Distributed Data on AWS: Transactions, Aurora Failover Behavior, DynamoDB Partitions, and Shard-Like Aurora Limitless

Quick summary: Aurora storage replication is cross-AZ by design, and writer failover typically completes in tens of seconds. Plan application timeouts above that window, or every failover drill ships a self-inflicted outage amplifier.

Key Takeaways

- This note ties OLTP transactions on RDS/Aurora to partitioned access patterns on DynamoDB and shard-like scaling with Aurora Limitless—without pretending you can SD-WAN CAP theorem away

- Failure mode: ORM chatter opens transactions across multiple user requests, pinning connections and bloating undo volume; p95 query time climbs while CPU looks “fine.”

- What broke — A service using a 10s global HTTP client timeout talked to RDS through a proxy that surfaced failover as connection reset

- DynamoDB partitions and hot keys: DynamoDB scales partitions, but your access patterns still dictate heat

- Single-key fan-in (global counters, one tenantId) becomes a physics problem; see design guidance in single-table DynamoDB patterns

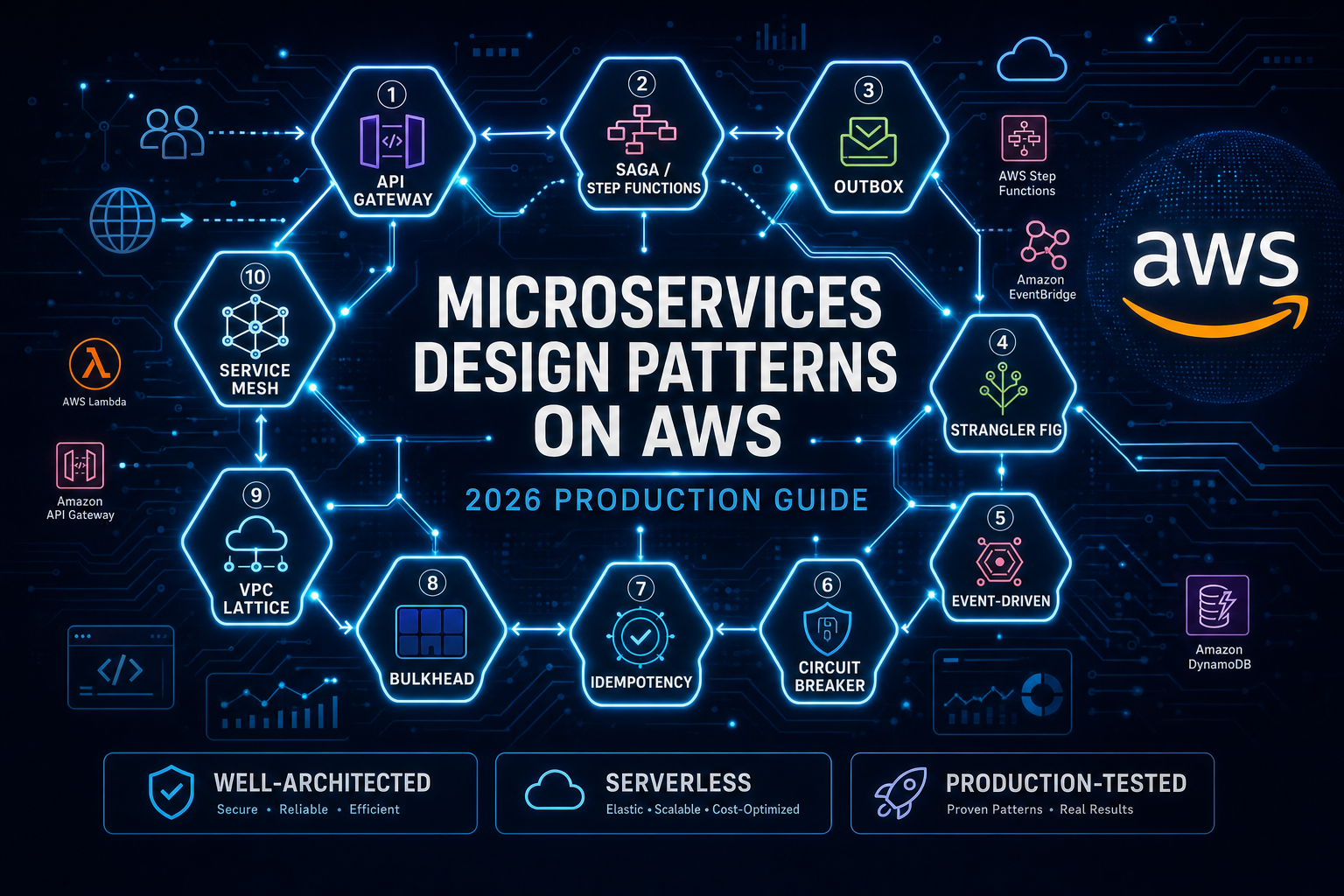

On May 8, 2026, “distributed systems on AWS” still collapses into three buyer problems: partial failure visibility, data plane timeout alignment, and write skew when you pretend relational transactions stretch infinitely across managed services.

This note ties OLTP transactions on RDS/Aurora to partitioned access patterns on DynamoDB and shard-like scaling with Aurora Limitless—without pretending you can SD-WAN CAP theorem away.

Reproduce this — Partition back-of-envelope worksheet:

examples/architecture-blog-2026/data-at-scale/partition-math.md

Transactions: keep them short and honest

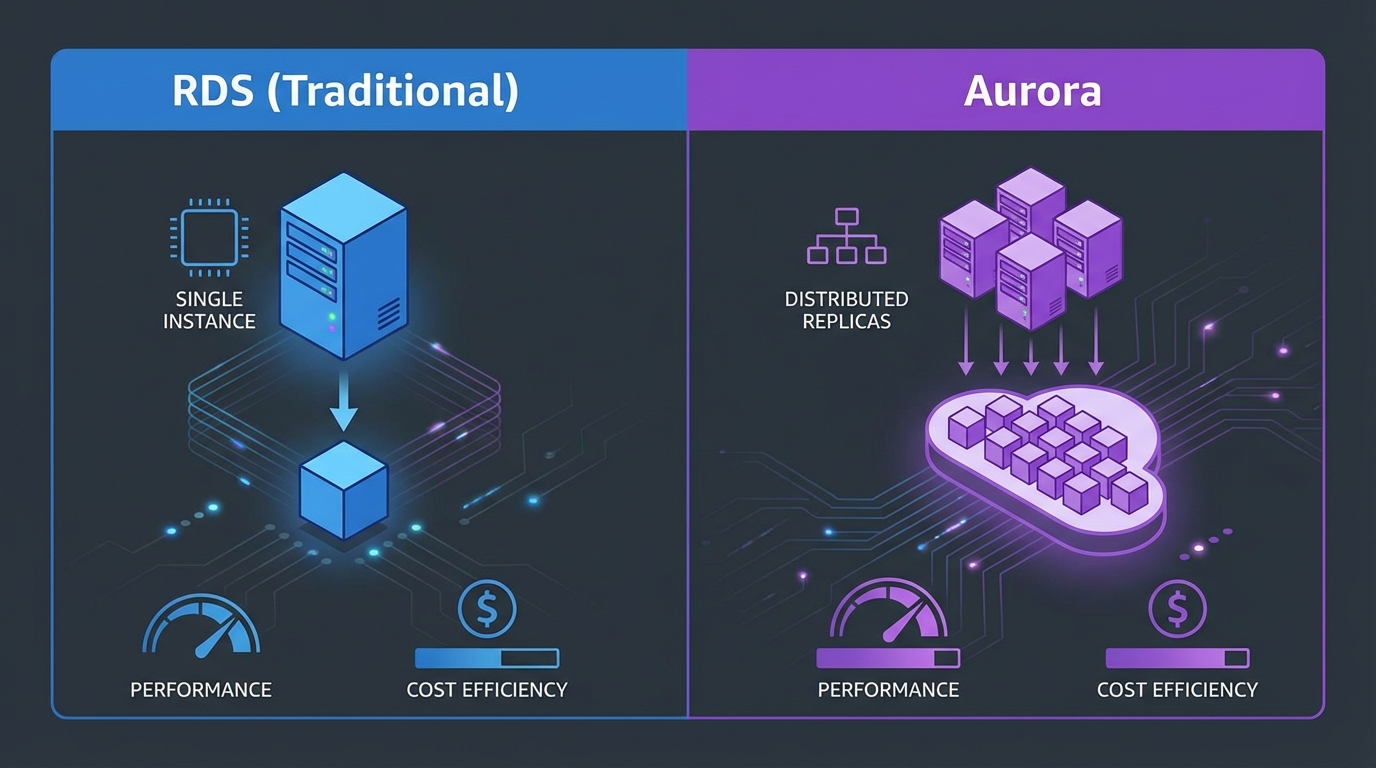

RDS/Aurora transactions buy ACID within the instance (with the isolation level you actually configured—not the default you forgot).

Failure mode: ORM chatter opens transactions across multiple user requests, pinning connections and bloating undo volume—p95 query time climbs while CPU looks “fine.”

Pair operational tuning with RDS performance best practices.
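To make the failure mode concrete, here is a minimal sketch of a short transaction boundary. It uses Python's built-in sqlite3 as a stand-in for RDS/Aurora purely so it runs anywhere; the `orders` table, `fetch_price`, and `place_order` are hypothetical names, not part of any real service. The slow call happens before the transaction opens, so the transaction covers only the write.

```python
import sqlite3
import time


def fetch_price(sku: str) -> int:
    """Hypothetical stand-in for a slow remote call (pricing service, HTTP API)."""
    time.sleep(0.01)  # simulated network latency
    return 1999


def place_order(conn: sqlite3.Connection, sku: str, qty: int) -> int:
    # Do the network I/O first, outside any transaction, so no locks or
    # pooled connections are pinned while waiting on another service.
    price_cents = fetch_price(sku)

    # "with conn" commits on success and rolls back on an exception;
    # the transaction begins at the INSERT and ends when the block exits.
    with conn:
        cur = conn.execute(
            "INSERT INTO orders (sku, qty, total_cents) VALUES (?, ?, ?)",
            (sku, qty, qty * price_cents),
        )
        return cur.lastrowid


conn = sqlite3.connect(":memory:")  # assumption: in-memory DB for the demo
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, sku TEXT, qty INTEGER, total_cents INTEGER)"
)
order_id = place_order(conn, "sku-1", 3)
```

The same shape applies to ORM-managed transactions: gather remote inputs first, then open the transaction, write, and commit in one short step.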

Aurora failover is not a zero-downtime checkbox

Aurora promotes a new writer after failures or planned failovers. Application stacks must:

- Retry DNS / endpoint resolution with backoff (drivers differ).

- Set TCP and JDBC timeouts above expected failover windows (historically often on the order of tens of seconds; validate with your version and observe in drills, do not cargo-cult).

What broke — A service using a 10s global HTTP client timeout talked to RDS through a proxy that surfaced failover as connection reset. Half the pods black-holed requests; Kubernetes restarted them, amplifying reconnect storms. Fix: aligned timeouts, proxy pool caps, and exponential backoff on connection acquisition.
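The fix described above (exponential backoff on connection acquisition, with a retry window longer than the failover window) can be sketched roughly as follows. This is an illustration under stated assumptions, not a driver-specific recipe: `connect` is any callable that raises `ConnectionError` on failure, the 30-second failover window is a placeholder to replace with what you measure in drills, and all function names are hypothetical.

```python
import random
import time
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")

ASSUMED_FAILOVER_WINDOW_S = 30.0  # assumption: measure this in your own drills


def backoff_delays(base: float, cap: float, attempts: int) -> Iterator[float]:
    """Yield capped exponential delays: base, 2*base, 4*base, ... up to cap."""
    for attempt in range(attempts):
        yield min(cap, base * (2 ** attempt))


def min_retry_window(base: float, cap: float, attempts: int) -> float:
    """Lower bound on total sleep time, since each sleep is at least delay/2."""
    return sum(backoff_delays(base, cap, attempts)) / 2


def connect_with_backoff(
    connect: Callable[[], T],
    base: float = 1.0,
    cap: float = 10.0,
    attempts: int = 10,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    last_error = None
    for delay in backoff_delays(base, cap, attempts):
        try:
            return connect()
        except ConnectionError as exc:
            last_error = exc
            # Jitter between delay/2 and delay spreads reconnects out so
            # pods do not stampede the new writer at the same instant.
            sleep(random.uniform(delay / 2, delay))
    raise ConnectionError(f"gave up after {attempts} attempts") from last_error


# Sanity check: the retry window should outlast the assumed failover window.
window_ok = min_retry_window(1.0, 10.0, 10) > ASSUMED_FAILOVER_WINDOW_S
```

With these defaults the minimum retry window is 37.5 seconds, which clears the assumed 30-second failover window. A 10-second global client timeout sitting in front of this loop would still cut it short, which is the misalignment the incident describes.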

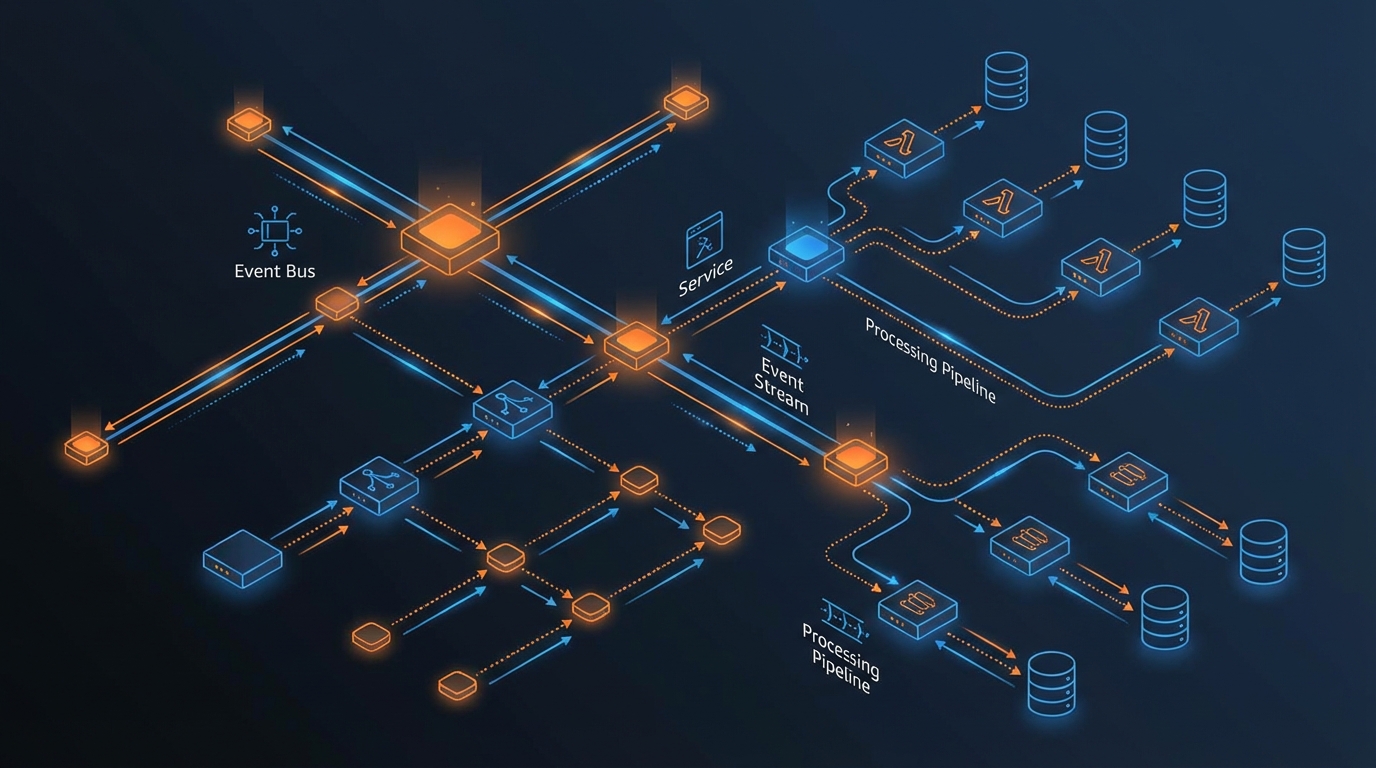

DynamoDB partitions and hot keys

DynamoDB scales partitions, but your access patterns still dictate heat. Single-key fan-in (global counters, one tenantId) becomes a physics problem—see design guidance in single-table DynamoDB patterns.

Opinionated take: If your partition key equals DATE only, you did not design a database; you designed a bottleneck with a calendar aesthetic.
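One common mitigation for single-key fan-in such as a global counter is write sharding: spread writes across several suffixed keys and sum them on read. The sketch below uses an in-memory dict as a stand-in for a table so it runs without AWS access. The shard count of 10, the `#` separator, and all function names are assumptions for illustration only.

```python
import random
from typing import Dict, List

SHARD_COUNT = 10  # assumption: tune to your write rate

# In-memory stand-in for a DynamoDB table keyed by partition key.
table: Dict[str, int] = {}


def sharded_key(base_key: str, shard_count: int = SHARD_COUNT) -> str:
    """Pick a random shard suffix so writes spread across partition keys."""
    return f"{base_key}#{random.randrange(shard_count)}"


def all_shard_keys(base_key: str, shard_count: int = SHARD_COUNT) -> List[str]:
    return [f"{base_key}#{i}" for i in range(shard_count)]


def increment(base_key: str) -> None:
    key = sharded_key(base_key)
    table[key] = table.get(key, 0) + 1


def read_total(base_key: str) -> int:
    # Reads pay for the spread: one lookup per shard, summed client-side.
    return sum(table.get(key, 0) for key in all_shard_keys(base_key))


for _ in range(1000):
    increment("page-views")
total = read_total("page-views")
```

The trade-off is explicit: writes get cheaper to scale while reads become a fan-out, so this suits write-heavy counters more than read-heavy ones.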

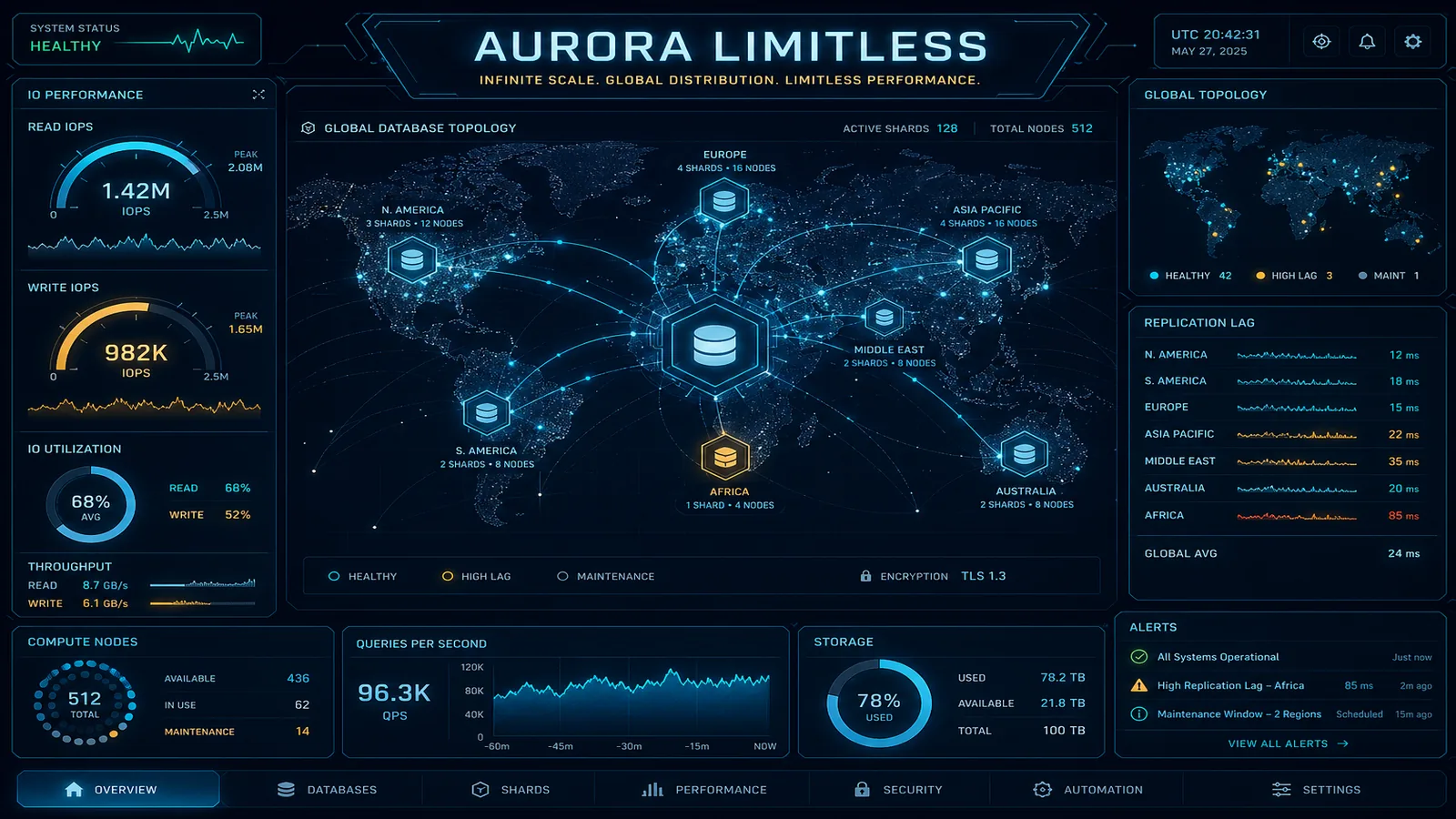

Aurora Limitless: shard-like without hand-rolled Vitess

For the AWS-native horizontal OLTP story, read Amazon Aurora Limitless Database before hiring a sharding committee—then decide whether your workload truly demands that operational surface.

MongoDB document stores when relational models fight you

Some domains map cleanly to documents and aggregation pipelines—evaluate costs and ops on AWS in MongoDB scalable cost guidance.

Streaming partitions as analogy

Kinesis and MSK partition keys behave like DynamoDB hot keys—ordering and throughput guarantees anchor on the key you pick. If you ingest ordered events, our Kinesis vs MSK guide helps pick the right streaming plane before mirroring partition mistakes into two systems.
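The analogy can be sketched in a few lines. Kinesis Data Streams documents that it MD5-hashes the partition key into a 128-bit integer and routes the record to the shard owning that hash range. The even split of the hash space across shards below is a simplifying assumption, and `shard_for` is a hypothetical helper, not an AWS API.

```python
import hashlib
from collections import Counter

HASH_SPACE = 2 ** 128  # MD5 digests are 128 bits


def shard_for(partition_key: str, shard_count: int) -> int:
    """Map a key to a shard index, assuming equal-sized hash ranges."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_value = int.from_bytes(digest, "big")
    return hash_value * shard_count // HASH_SPACE


# Many distinct keys spread across shards...
spread = Counter(shard_for(f"device-{i}", 4) for i in range(1000))

# ...while one shared key (for example a date) pins every record to one shard.
pinned = Counter(shard_for("2026-05-08", 4) for _ in range(1000))
```

Ordering is only guaranteed within a key's shard, so the key that gives you ordering is also the key that caps your throughput.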

What This Post Doesn’t Cover

- Analytics warehouses (Redshift, Iceberg lakehouses)—different latency and consistency contracts entirely.

- Multi-region active-active data planes—start from multi-region cost design before promising caps.

If You Only Do One Thing

Plot p99 transaction duration and connection pool utilization on one chart—if they diverge, you are hiding lock contention behind average CPU.
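As a rough illustration of why the tail matters more than the average here, the sketch below computes a nearest-rank p99 next to the mean for a made-up set of transaction durations. The sample numbers and the function names are hypothetical; in practice both series would come from your metrics system.

```python
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = (len(ordered) * pct + 99) // 100  # ceil(n * pct / 100), integer math
    return ordered[rank - 1]


def pool_utilization(in_use: int, pool_size: int) -> float:
    """Fraction of pooled connections currently checked out."""
    return in_use / pool_size


# Hypothetical sample: 98 fast transactions and 2 stuck behind a lock.
durations_ms = [10.0] * 98 + [2000.0] * 2
mean_ms = sum(durations_ms) / len(durations_ms)
p99_ms = percentile(durations_ms, 99)
utilization = pool_utilization(in_use=18, pool_size=20)
```

In this made-up sample the mean stays under 50 ms while p99 sits at 2000 ms, which is the kind of gap an average-only dashboard hides.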

What to Do This Week

- Run a documented failover drill in staging; capture DNS/TTL and client retry behavior—not just database health.

- List top 20 DynamoDB access patterns; underline any key with >20% of consumed capacity.

- Revisit ORM transaction boundaries; grep for @Transactional (or equivalents) crossing network I/O.
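For the DynamoDB item in the list above, the 20% check is simple arithmetic once you have consumed capacity per key. A minimal sketch, assuming you can export per-key consumed capacity from your own metrics; the key names and numbers are invented for illustration, and `hot_keys` is a hypothetical helper.

```python
from typing import Dict, List, Tuple


def hot_keys(
    consumed: Dict[str, float], threshold: float = 0.20
) -> List[Tuple[str, float]]:
    """Return (key, share) pairs whose share of total capacity exceeds threshold."""
    total = sum(consumed.values())
    if total == 0:
        return []
    shares = [(key, units / total) for key, units in consumed.items()]
    hot = [pair for pair in shares if pair[1] > threshold]
    return sorted(hot, key=lambda pair: pair[1], reverse=True)


# Hypothetical consumed capacity units per partition key.
consumed_units = {
    "tenant#42": 600.0,
    "tenant#7": 250.0,
    "tenant#9": 100.0,
    "tenant#3": 50.0,
}
flagged = hot_keys(consumed_units)
```

Here two keys cross the threshold, and the first one alone takes 60% of capacity, which is the kind of entry to underline.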

If messaging absorbs overloaded writes, link to event-driven boundaries.
