Amazon S3 Vectors: Native Vector Storage Without a Separate Vector Database

Quick summary: Amazon S3 Vectors eliminates the dedicated vector database for many RAG workloads. We compare it to OpenSearch Serverless and MemoryDB and show when each wins.

Key Takeaways

- Amazon S3 Vectors eliminates the dedicated vector database for many RAG workloads

- We compare it to OpenSearch Serverless and MemoryDB and show when each wins

- Amazon S3 Vectors eliminates the dedicated vector database for many RAG workloads

- We compare it to OpenSearch Serverless and MemoryDB and show when each wins

Table of Contents

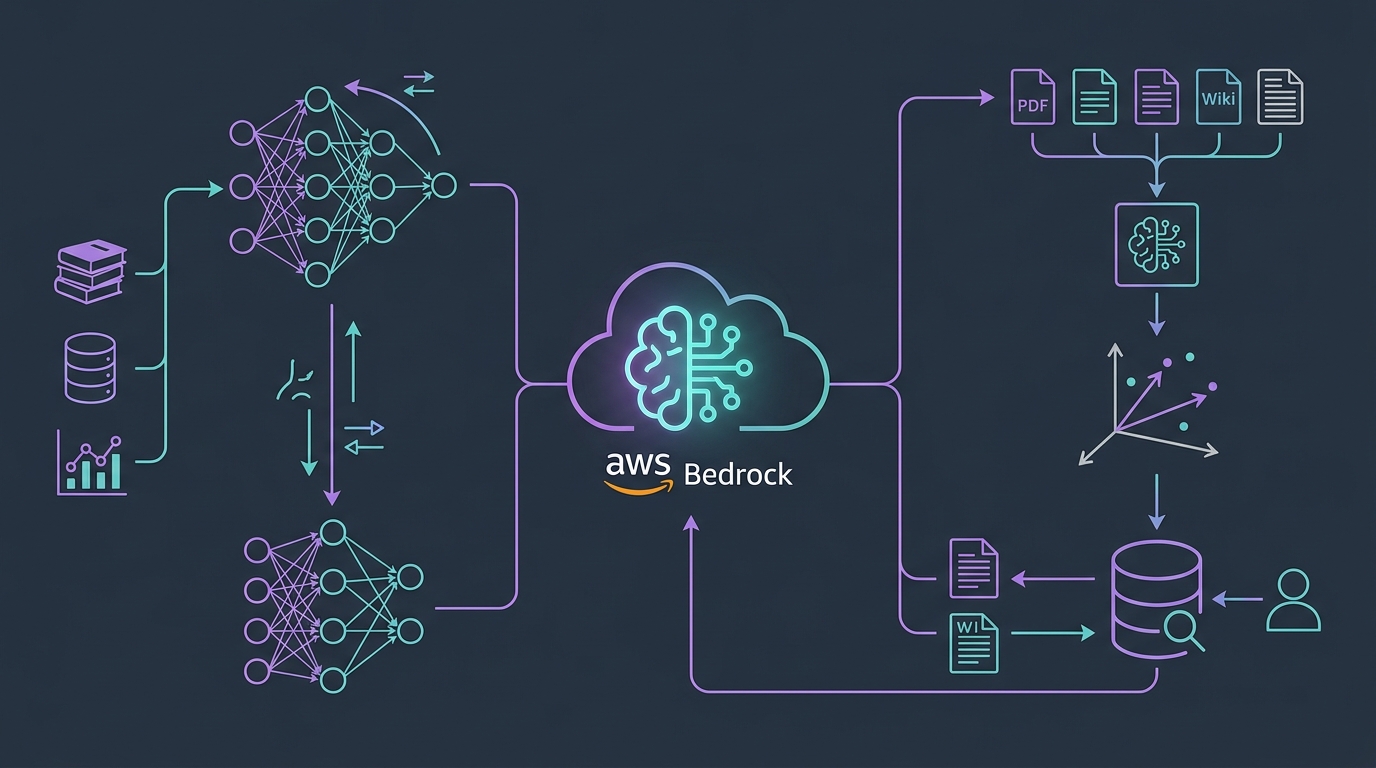

Every RAG architecture needs a place to store vectors. Until 2025, that meant provisioning a dedicated vector database: OpenSearch Serverless, Pinecone, Weaviate, Qdrant, or pgvector on Aurora PostgreSQL. Each option adds a separately managed infrastructure component with its own scaling model, cost structure, access control configuration, and operational runbook.

For many enterprise RAG workloads — document Q&A, knowledge base search, contract analysis, internal search over company data — a dedicated vector database is infrastructure overhead that the workload cannot justify. The query volume is moderate, the latency requirement is seconds (not milliseconds), and the corpus is relatively stable.

Amazon S3 Vectors eliminates the dedicated vector database for those workloads. It adds a native vector storage primitive to S3 — a vector bucket — with approximate nearest-neighbor query support. No separate cluster to provision. No separate service to monitor. Pricing is per GB stored and per query, not per compute unit.

This guide covers the S3 Vectors architecture, the precise trade-offs versus OpenSearch Serverless and MemoryDB, a complete RAG pipeline implementation, and a cost comparison at three scales.

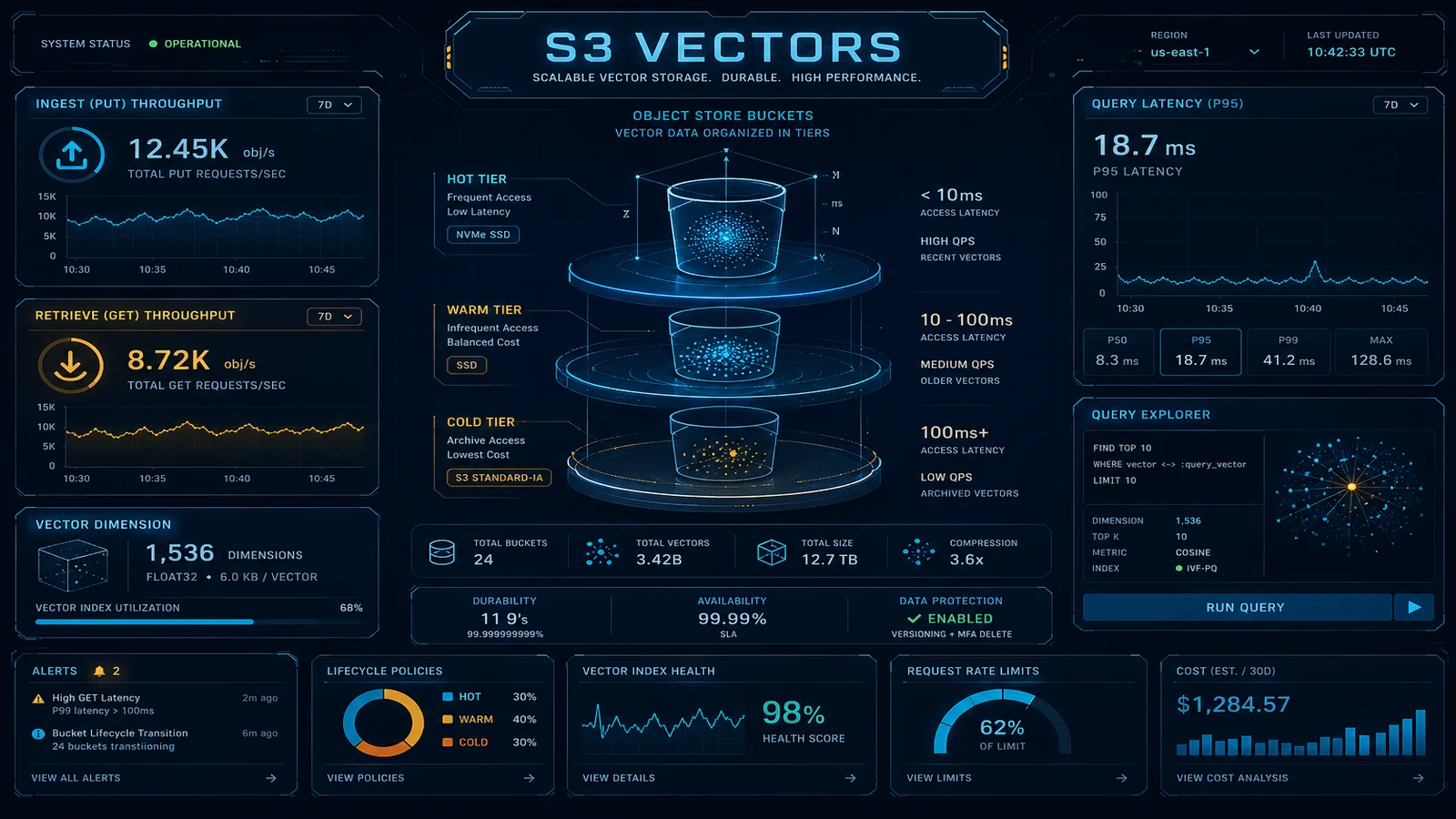

S3 Vectors Architecture

S3 Vectors introduces two new S3 constructs: the vector bucket and the vector object.

A vector bucket is a dedicated S3 bucket type for vector storage. You create it like a standard S3 bucket, but it is a distinct resource type with a separate API. Vector buckets support a defined embedding dimension (you specify this at creation time and it applies to all vectors in the bucket), a distance metric (cosine similarity, dot product, or Euclidean L2), and an index type.

A vector object is a single stored vector with associated metadata:

- Vector ID: a string key, analogous to an S3 object key

- Embedding: a float32 array with the dimension matching the bucket’s configured dimension

- Metadata: a JSON object with arbitrary key-value pairs for filtering

- Payload: optional binary data (raw document text, source URL, or any application-specific bytes)

The index types available:

- HNSW (Hierarchical Navigable Small World): approximate nearest-neighbor with configurable

ef_constructionandmparameters. This is the default and is appropriate for almost all production use cases. - FLAT: exact k-NN search. Accurate but scales O(n) with corpus size — only appropriate for very small corpora (under 100K vectors) where exact results are required.

Creating a vector bucket and inserting vectors:

import boto3

import json

s3vectors = boto3.client('s3vectors')

# Create a vector bucket configured for 1024-dimension embeddings

# with cosine similarity (standard for text embeddings)

s3vectors.create_vector_bucket(

vectorBucketName='enterprise-knowledge-vectors',

vectorBucketConfiguration={

'indexConfig': {

'hnsw': {

'dimensions': 1024,

'distanceMetric': 'cosine',

'efConstruction': 512,

'm': 16

}

}

}

)

# Store vectors in batch (up to 500 per call)

s3vectors.put_vectors(

vectorBucketName='enterprise-knowledge-vectors',

vectors=[

{

'key': 'doc_001_chunk_0',

'data': {

'float32': [0.0234, -0.1872, 0.4451, ...] # 1024 floats

},

'metadata': {

'document_id': 'doc_001',

'source': 's3://company-docs/policies/expense-policy.pdf',

'chunk_index': 0,

'document_type': 'policy',

'department': 'finance',

'last_updated': '2026-03-15'

}

},

# ... up to 499 more vectors per batch call

]

)Querying vectors:

# Query with metadata pre-filter

response = s3vectors.query_vectors(

vectorBucketName='enterprise-knowledge-vectors',

queryVector={

'float32': [0.0891, -0.2341, 0.3892, ...] # Query embedding

},

topK=10,

filter={

'metadata': {

'department': {'$eq': 'finance'},

'document_type': {'$in': ['policy', 'guideline']}

}

},

returnMetadata=True,

returnData=False # Only retrieve metadata, not the raw payload bytes

)

for result in response['vectors']:

print(f"Key: {result['key']}")

print(f"Score: {result['score']}")

print(f"Source: {result['metadata']['source']}")The metadata filter runs before the ANN search, not after. This is important for performance: filtering first reduces the search space, which improves both result quality and query latency.

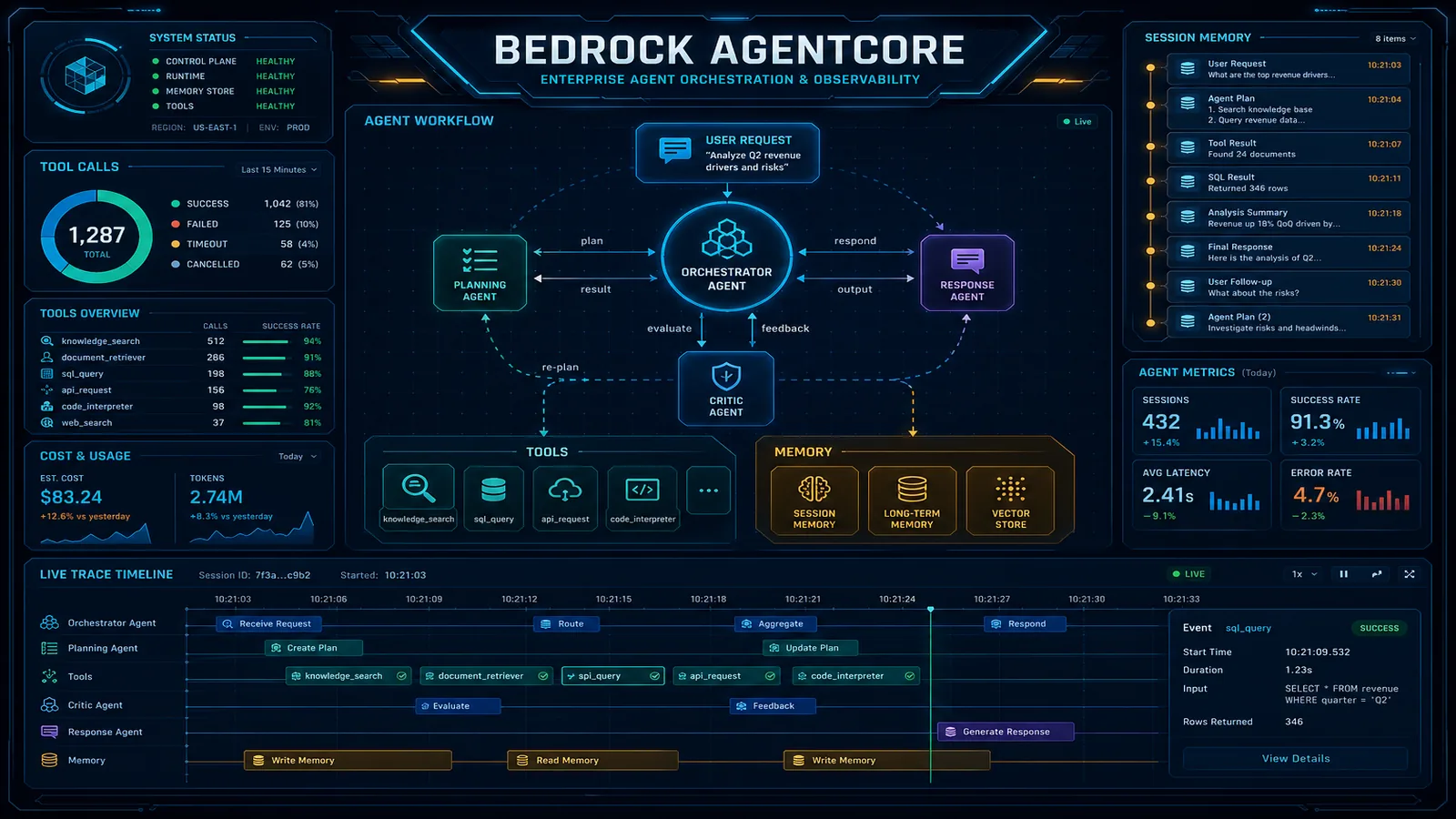

S3 Vectors vs. OpenSearch Serverless vs. MemoryDB

The right vector store depends on three primary axes: latency requirement, query volume, and whether you need hybrid search (vector + keyword BM25).

| Dimension | S3 Vectors | OpenSearch Serverless | MemoryDB with Vector Search |

|---|---|---|---|

| Query latency (P50) | 50–200ms | 5–20ms | under 5ms (in-memory) |

| Query latency (P99) | 200–800ms | 20–50ms | 10–20ms |

| Minimum monthly cost | ~$0 (pay per use) | ~$700 (2 OCU minimum) | ~$200 (1 node) |

| Cost at 10M vectors, 1K QPM | ~$15–30/month | ~$700–1,000/month | ~$400–600/month |

| Hybrid search (vector + BM25) | No | Yes (k-NN + BM25 together) | No |

| Real-time vector updates | Yes (eventual consistency) | Yes (near-real-time) | Yes (immediate, in-memory) |

| Metadata filtering | Yes (pre-filter) | Yes (pre and post-filter) | Yes (pre-filter) |

| Bedrock Knowledge Bases integration | Yes (native) | Yes (native) | Yes (native) |

| HNSW index tuning | Limited | Extensive | Limited |

| Cluster management | None | Minimal (serverless) | None (managed service) |

| Best fit | Cost-sensitive RAG, batch retrieval, moderate QPS | Hybrid search, high QPS, existing OpenSearch investment | Real-time RAG, session-scoped retrieval, under 5ms required |

The latency numbers deserve context. For a user interacting with a RAG chatbot, the end-to-end response time has three components: embedding generation (100–300ms for Bedrock Titan Embeddings v2), vector retrieval, and model generation (1–5 seconds for a Claude response). A 100ms vector retrieval time versus a 10ms retrieval time is a 90ms difference in a total response time of 1.5–6 seconds — perceptible but rarely the deciding factor for user experience.

The cost difference at low-to-moderate query volume is the deciding factor for most enterprises. OpenSearch Serverless’s 2-OCU minimum means you are paying ~$700/month before your first query. S3 Vectors scales to zero when idle.

Where OpenSearch Serverless wins: workloads that require hybrid search (combining dense vector similarity with sparse BM25 keyword matching for better recall on specific terminology), workloads with high sustained QPS (>500 queries/minute), and teams that already have OpenSearch Serverless in their stack for search use cases.

Where MemoryDB wins: real-time retrieval with sub-5ms requirement, session-scoped RAG where the vector index changes rapidly (live document editing, session-specific context injection), and workloads where the vector index must be durably consistent with transactional data in the same Redis keyspace.

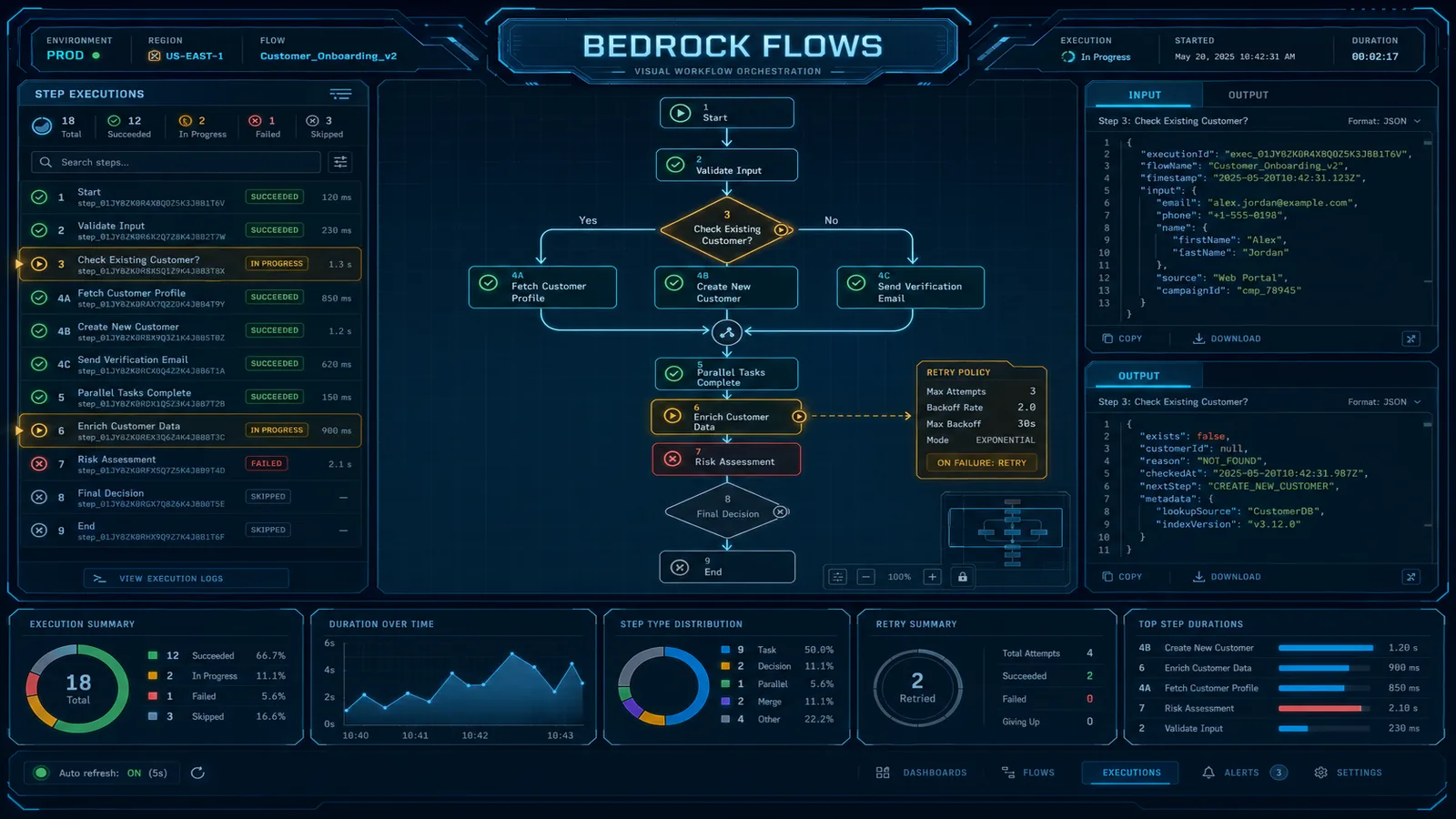

RAG Pipeline with S3 Vectors

End-to-end RAG pipeline using S3 Vectors as the knowledge store, Bedrock Titan Embeddings v2 for embedding generation, and Claude on Bedrock for generation:

import boto3

import json

from typing import Optional

# Initialize clients

bedrock_runtime = boto3.client('bedrock-runtime', region_name='us-east-1')

s3vectors = boto3.client('s3vectors', region_name='us-east-1')

VECTOR_BUCKET = 'enterprise-knowledge-vectors'

EMBEDDING_MODEL = 'amazon.titan-embed-text-v2:0'

GENERATION_MODEL = 'anthropic.claude-3-5-sonnet-20241022-v2:0'

def generate_embedding(text: str) -> list[float]:

"""Generate embedding using Bedrock Titan Embeddings v2."""

response = bedrock_runtime.invoke_model(

modelId=EMBEDDING_MODEL,

body=json.dumps({

'inputText': text,

'dimensions': 1024,

'normalize': True

})

)

body = json.loads(response['body'].read())

return body['embedding']

def retrieve_context(

query: str,

top_k: int = 5,

metadata_filter: Optional[dict] = None

) -> list[dict]:

"""Retrieve relevant document chunks from S3 Vectors."""

query_embedding = generate_embedding(query)

query_params = {

'vectorBucketName': VECTOR_BUCKET,

'queryVector': {'float32': query_embedding},

'topK': top_k,

'returnMetadata': True,

'returnData': True # Return the stored chunk text in payload

}

if metadata_filter:

query_params['filter'] = {'metadata': metadata_filter}

response = s3vectors.query_vectors(**query_params)

return [

{

'text': result['data']['string'], # Assuming text stored as payload

'source': result['metadata'].get('source', 'unknown'),

'score': result['score'],

'document_id': result['metadata'].get('document_id')

}

for result in response['vectors']

]

def rag_query(

user_question: str,

metadata_filter: Optional[dict] = None

) -> str:

"""Full RAG pipeline: retrieve context then generate answer."""

# Step 1: Retrieve relevant context

context_chunks = retrieve_context(

query=user_question,

top_k=5,

metadata_filter=metadata_filter

)

# Step 2: Build prompt with retrieved context

context_text = '\n\n'.join([

f"[Source: {chunk['source']}]\n{chunk['text']}"

for chunk in context_chunks

if chunk['score'] > 0.7 # Filter low-confidence retrievals

])

prompt = f"""You are an enterprise knowledge assistant. Answer the question based

on the provided context. If the context does not contain enough information to

answer confidently, say so explicitly.

Context:

{context_text}

Question: {user_question}

Answer:"""

# Step 3: Generate answer with Claude

response = bedrock_runtime.invoke_model(

modelId=GENERATION_MODEL,

body=json.dumps({

'anthropic_version': 'bedrock-2023-05-31',

'max_tokens': 1024,

'messages': [{'role': 'user', 'content': prompt}]

})

)

body = json.loads(response['body'].read())

return body['content'][0]['text']

# Usage example

answer = rag_query(

user_question='What is the approval process for expenses over $5,000?',

metadata_filter={

'document_type': {'$eq': 'policy'},

'department': {'$in': ['finance', 'hr']}

}

)The metadata filter {'document_type': {'$eq': 'policy'}} runs before the ANN search, limiting the search space to policy documents only. This both improves result relevance and reduces query latency by reducing the number of vectors the HNSW algorithm must traverse.

For the ingestion side — chunking documents, generating embeddings, and writing to S3 Vectors — the standard pattern uses a Lambda function triggered by S3 Object Created events, chunking documents into 512–1024 token segments with 10% overlap, generating embeddings via Bedrock Titan, and calling PutVectors in batches of 500.

Performance Characteristics

The 50–200ms query latency figure for S3 Vectors breaks down into two components: network round-trip time (~10–20ms for an in-region call) and index search time (40–180ms depending on corpus size and HNSW parameters).

At 10M vectors with default HNSW parameters (ef_construction=512, m=16), expect P50 query latency around 80ms and P99 around 250ms. At 100M vectors, expect P50 around 130ms and P99 around 400ms. These are approximate — actual latency depends on your HNSW configuration and the distribution of your vectors.

Metadata filter impact: Pre-filters that eliminate >90% of vectors before ANN search can paradoxically increase latency for HNSW, because the reduced candidate set forces HNSW to explore more of the graph to find k neighbors. This is a known property of HNSW with aggressive pre-filtering. If your workload uses aggressive metadata filters on a large corpus, add a buffer to your top_k value (request 15 when you need 5) and filter post-retrieval by score threshold.

Throughput: S3 Vectors supports up to 500 PutVectors calls per second per bucket for writes and 200 QueryVectors calls per second per bucket for reads, with burst capacity above these limits. For workloads approaching these limits, the standard pattern is bucket sharding: distribute vectors across multiple buckets by a hash of the document ID, query all buckets in parallel, and merge results client-side.

Eventual consistency for writes: PutVectors is eventually consistent — a vector written may not be immediately visible to subsequent queries. The typical propagation delay is 1–5 seconds. For most RAG workloads processing ingested documents that were created minutes or hours ago, this is not a concern. For real-time use cases where a document is ingested and queried within seconds (live document annotation, real-time knowledge injection), use MemoryDB instead.

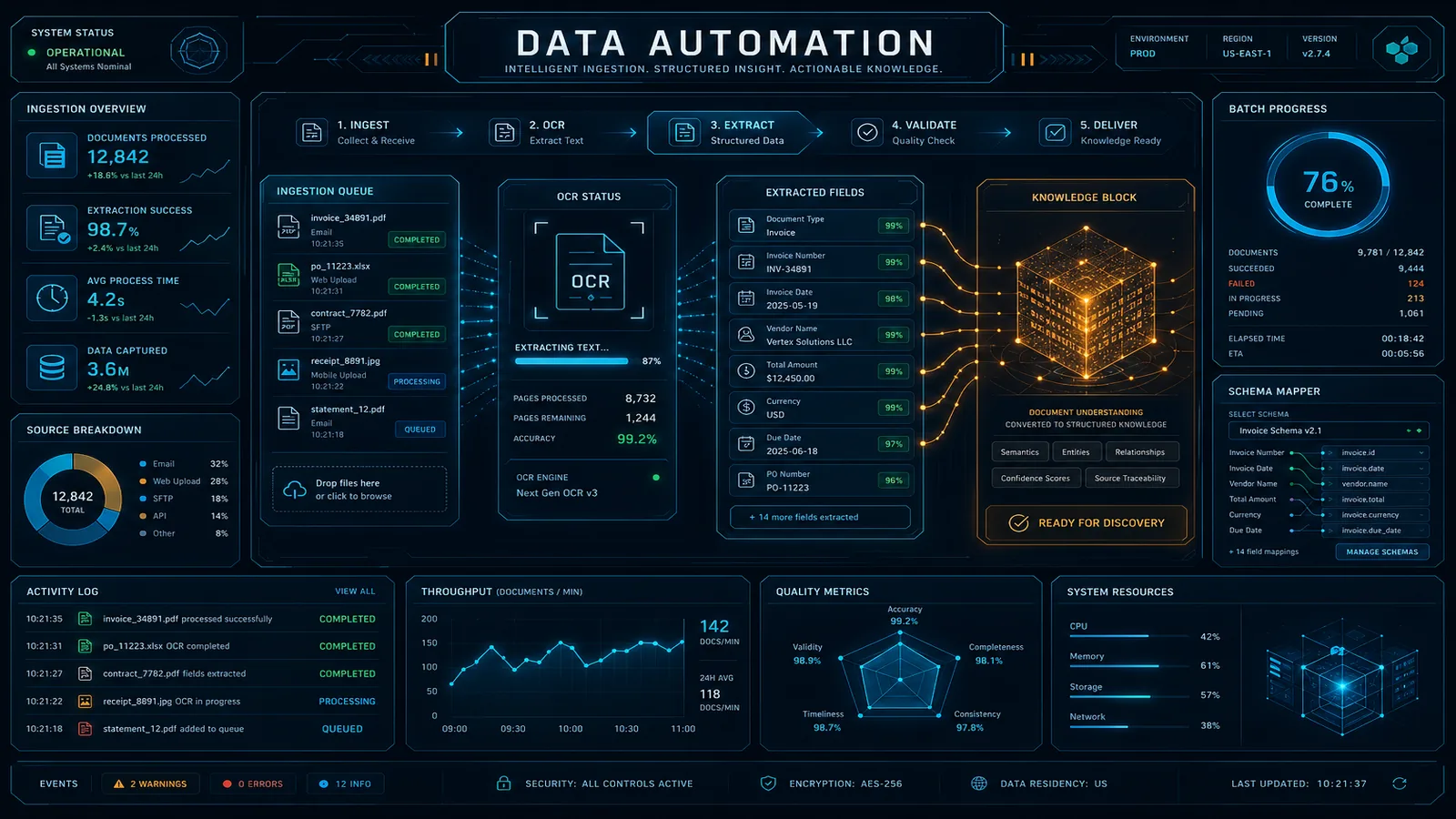

Cost Comparison at Scale

S3 Vectors pricing has two components: storage (per GB/month for vectors + metadata) and query (per QueryVectors API call). A 1024-dimension float32 vector occupies approximately 4KB including metadata and overhead.

Cost at 10M vectors, 100K queries/month:

| Service | Storage Cost | Query Cost | Total/Month |

|---|---|---|---|

| S3 Vectors | ~$40 (10M × 4KB = 40GB) | ~$5 (100K queries) | ~$45 |

| OpenSearch Serverless | ~$700 (2 OCU minimum) | Included in OCU | ~$700 |

| MemoryDB (r6g.large) | ~$200 (instance) | Included | ~$200 |

Cost at 100M vectors, 1M queries/month:

| Service | Storage Cost | Query Cost | Total/Month |

|---|---|---|---|

| S3 Vectors | ~$400 (400GB storage) | ~$50 (1M queries) | ~$450 |

| OpenSearch Serverless | ~$1,400 (4 OCUs for index size) | Included | ~$1,400 |

| MemoryDB (r6g.2xlarge ×2) | ~$900 (2 nodes for 100M vectors) | Included | ~$900 |

Cost at 1B vectors, 10M queries/month:

| Service | Storage Cost | Query Cost | Total/Month |

|---|---|---|---|

| S3 Vectors | ~$4,000 (4TB storage) | ~$500 (10M queries) | ~$4,500 |

| OpenSearch Serverless | ~$8,000+ (20+ OCUs) | Included | ~$8,000+ |

| MemoryDB | Not practical (memory cost too high) | — | ~$15,000+ |

The crossover point where OpenSearch Serverless becomes cost-competitive with S3 Vectors is at high sustained QPS with hybrid search requirements — typically 500+ queries per minute with keyword+vector mixed queries. Below that threshold, S3 Vectors is materially cheaper for any corpus size.

For existing Bedrock Knowledge Base RAG patterns, see How to Build a RAG Pipeline with Amazon Bedrock Knowledge Bases. For OpenSearch architecture patterns and when OpenSearch Serverless is the right choice, see Amazon OpenSearch Service Architecture Patterns and Cost Optimization. For MemoryDB with Vector Search (the right choice for real-time retrieval), see Amazon MemoryDB Vector Search for AI Workloads. For where S3 Vectors fits in the broader 2026 AWS AI service landscape, see the Top 20 AWS AI Services guide.

Need help choosing between S3 Vectors, OpenSearch Serverless, and MemoryDB for your RAG architecture? FactualMinds has helped enterprise teams design vector storage strategies that balance latency, cost, and operational complexity — and we can run the cost model against your actual corpus size and query volume to give you a recommendation grounded in your specific workload rather than generic guidance. Reach out to scope an architecture review.

AWS Cloud Architect & AI Expert

AWS-certified cloud architect and AI expert with deep expertise in cloud migrations, cost optimization, and generative AI on AWS.