Amazon Bedrock Automated Reasoning Checks: Production Hallucination Prevention with Math-Validated Factuality

Quick summary: Bedrock Automated Reasoning checks ground LLM outputs against formal logic policies you encode and mathematically validate that the response is consistent with the policy. This guide covers when to use Automated Reasoning vs contextual grounding, how to author the policy in production, the integration with Bedrock Guardrails, and the regulated use cases (HR, insurance, eligibility, regulatory determinations) where the difference matters.

Key Takeaways

- Bedrock Automated Reasoning checks ground LLM outputs against formal logic policies you encode and mathematically validate that the response is consistent with the policy

Amazon Bedrock Automated Reasoning checks mathematically validate that an LLM response is consistent with a formal logic policy you encode. Where contextual grounding asks “did you cite something I gave you?”, Automated Reasoning asks “is your answer logically valid given my rules?” — and proves it with the same kind of formal-methods reasoning AWS uses for Provable Security on its own infrastructure.

The feature was previewed at re:Invent 2024, reached general availability in 2025, and has been adopted across HR, insurance, healthcare, legal, and regulatory deployments where a hallucinated but correct-sounding answer creates real liability. AWS reports up to 99% verification accuracy.

This guide is for AI engineers, product managers, and compliance leads building regulated AI applications on Bedrock. It covers when to use Automated Reasoning vs contextual grounding (or both), how to author the policy in production, the integration with Bedrock Guardrails, and the production patterns for HR / insurance / eligibility / regulatory determination workloads.

Need help deploying Bedrock Automated Reasoning in a regulated workload? FactualMinds runs AI compliance engagements that combine Guardrails, contextual grounding, Automated Reasoning, and human-in-the-loop review into a single defense-in-depth stack. See our Cyber-Led AI service or talk to our team.

Why “math-validated” matters

LLMs hallucinate. Most of the time the hallucinations are merely embarrassing; in regulated workflows they create liability. The standard mitigations:

- Prompt engineering — better instructions, few-shot examples, structured outputs. Reduces hallucination rate but does not eliminate it.

- Retrieval-augmented generation (RAG) — retrieve source documents, ask the LLM to answer from them. Grounded — but the LLM can still misread or paraphrase the source incorrectly.

- Contextual grounding checks (Bedrock Guardrails feature) — validate that the LLM output is corroborated by the retrieved source. Catches obvious “made it up” failures but does not catch logical errors that are corroborated yet wrong.

- Automated Reasoning checks — validate the LLM output against a formal logic policy. Catches logical errors that all the above miss, including correct-sounding answers that are wrong because they misapply a rule.

The unique value of Automated Reasoning: it gives you a soundness guarantee. When the policy validates a response, the response is provably consistent with the policy. Not "probably correct based on the training data" but provably consistent with the rules you encoded, which also means the guarantee is only as good as the encoding. That is the standard regulators and risk teams want from determinations that affect benefits, eligibility, coverage, or rights.

When to use Automated Reasoning

The bright lines:

- Use Automated Reasoning when: the answer is a determination bounded by explicit rules, the rules can be formalized (eligibility matrices, policy documents, regulations, contracts), and a wrong answer carries liability.

- Use contextual grounding when: the answer is a paraphrase or summary of a source document, the question is answerable by retrieval, and the failure mode is “made it up.”

- Use both when: the workflow does both retrieval-augmented Q&A and rule-bounded determinations. Many regulated chatbots blend both.

- Use neither when: the workflow is creative or open-ended (writing, brainstorming, image generation) and does not need rule-validation.
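The bright lines above can be read as a small decision function. The sketch below is illustrative only: the `Workload` fields and the `choose_checks` name are assumptions made for this example, not part of any Bedrock API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a workload, mirroring the criteria above."""
    rule_bounded: bool            # the answer is a determination bounded by explicit rules
    rules_formalizable: bool      # the rules can be encoded as formal logic
    wrong_answer_liability: bool  # a wrong answer carries liability
    retrieval_answerable: bool    # the answer is a paraphrase or summary of a source


def choose_checks(w: Workload) -> set[str]:
    """Return the set of checks the bright lines suggest for this workload."""
    checks: set[str] = set()
    if w.rule_bounded and w.rules_formalizable and w.wrong_answer_liability:
        checks.add("automated_reasoning")
    if w.retrieval_answerable:
        checks.add("contextual_grounding")
    return checks  # an empty set corresponds to the "use neither" case
```

A regulated chatbot that both retrieves policy text and issues determinations would get both checks; a brainstorming assistant would get an empty set.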

Concrete use cases that win on Automated Reasoning:

- HR policy chatbots (parental leave, PTO accrual, severance, disability accommodation).

- Insurance underwriting Q&A (coverage decisions against policy terms).

- Healthcare benefits chatbots (eligibility for specific procedures, prior-authorization rules).

- Legal contract Q&A (clause applicability, party obligations, dispute interpretations).

- Tax and accounting Q&A (deductibility, transaction treatment, jurisdictional rules).

- Regulatory chatbots (product certification, disclosure requirements, license rules).

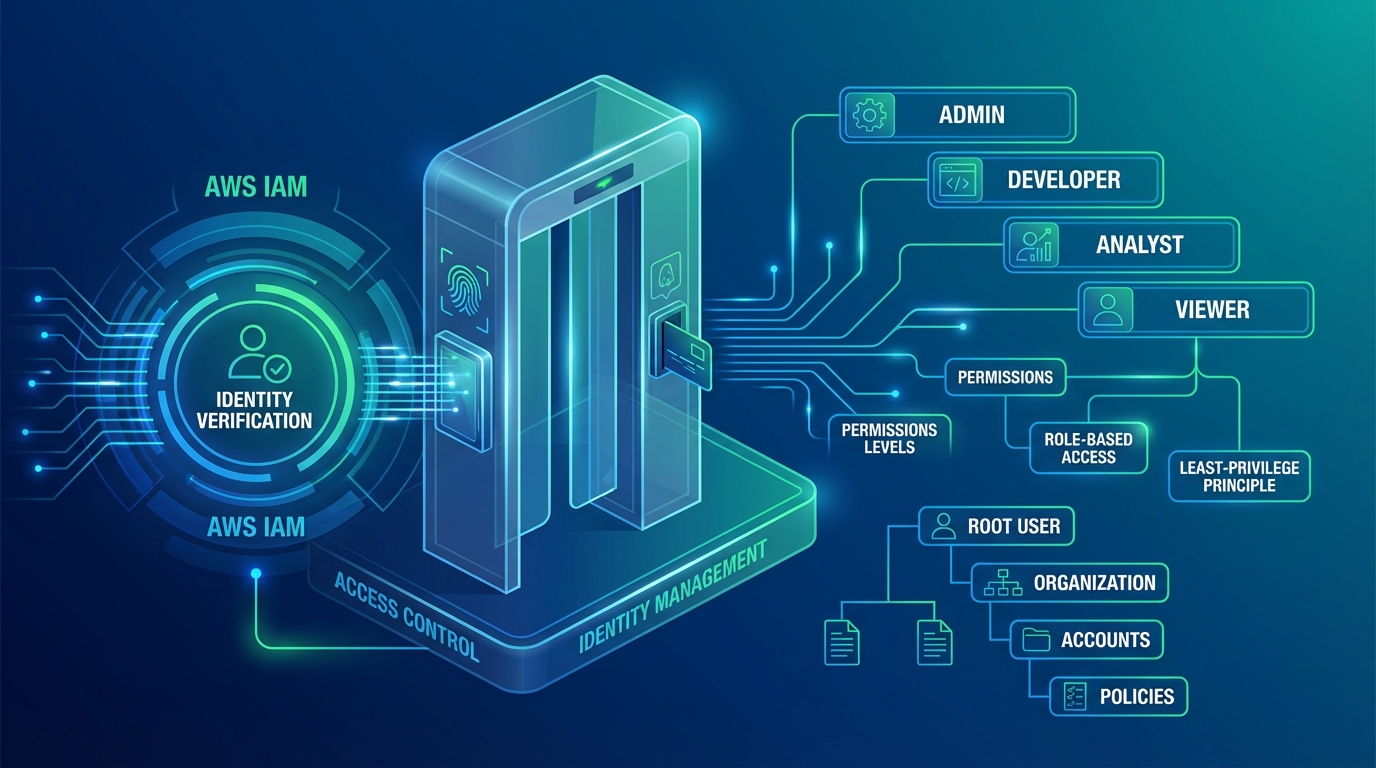

- Internal access-policy chatbots (resource authorization against the access matrix).

How the policy works

Automated Reasoning policies are formal logic representations of your rules. Authored components:

- Predicates: atomic facts (is_employee_full_time, has_completed_six_months_service, region == "EU").

- Axioms: established truths (region == "EU" => GDPR_applies).

- Rules: conditional statements (is_employee_full_time AND has_completed_six_months_service AND region == "EU" => parental_leave_eligible).

- Variables and types: typed inputs (employee record, request context, time).
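To make the components concrete, here is a minimal sketch that models the example predicates, axiom, and rule in plain Python. It is not the Bedrock policy format; the `Facts` class and the function names are assumptions for illustration. Note that the rule is an implication, so it only contradicts a claim when its conditions hold and the claim denies the conclusion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Facts:
    """Typed inputs: the predicates the example rule depends on."""
    is_employee_full_time: bool
    has_completed_six_months_service: bool
    region: str


def gdpr_applies(f: Facts) -> bool:
    # Axiom from the text: region == "EU" => GDPR_applies
    return f.region == "EU"


def rule_conditions_hold(f: Facts) -> bool:
    # Left-hand side of the example rule
    return (
        f.is_employee_full_time
        and f.has_completed_six_months_service
        and f.region == "EU"
    )


def contradicts_rule(f: Facts, claimed_eligible: bool) -> bool:
    """True when a claimed answer contradicts the implication
    conditions => parental_leave_eligible."""
    return rule_conditions_hold(f) and not claimed_eligible
```

With only this one rule encoded, a claim of eligibility for a part-time employee is not contradicted, because the implication says nothing when its conditions fail. Closing that gap means encoding the remaining cases as additional rules.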

Authoring workflow:

- Source the rules — identify the canonical rules document (HR policy PDF, insurance contract, regulatory text). Make sure it is the latest version your legal/compliance team will stand behind.

- Use the Bedrock guided authoring — Bedrock Automated Reasoning provides a workflow that ingests the natural-language rules document and proposes a formal representation. A domain expert reviews and refines.

- Encode the predicates — what facts about the user, their record, and the question does the rule require?

- Encode the rules — translate “if-then” statements into formal logic.

- Test — run the policy against a corpus of known-correct and known-incorrect Q&A pairs. The policy should accept all known-correct and reject all known-incorrect.

- Edge-case sweep — generate adversarial variants of the questions (“what if the employee is part-time and the region is EU but service is 5 months?”) and verify the policy gives the right answer.

- Deploy — publish the policy version to Bedrock; reference it from the inference call.

Authoring effort: hours-to-days for a typical HR or insurance policy. The engineering effort is in the integration and the test corpus, not the encoding.
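The Test and Edge-case sweep steps can be sketched as a small regression harness. Everything here is a stand-in: `policy_says_eligible` is a hypothetical local function that reads the example rule as the only route to eligibility, the corpus entries are invented, and the "US" region is an assumed second value for the sweep. A real harness would call the deployed policy instead.

```python
from itertools import product


def policy_says_eligible(full_time: bool, months_of_service: int, region: str) -> bool:
    """Hypothetical stand-in for the encoded policy."""
    return full_time and months_of_service >= 6 and region == "EU"


# Each entry: (facts, claimed answer, whether that claim is known to be correct)
CORPUS = [
    ((True, 12, "EU"), True, True),
    ((True, 12, "EU"), False, False),
    ((False, 12, "EU"), True, False),
    ((True, 5, "EU"), False, True),
]


def run_corpus(corpus):
    """Return the entries where the policy's accept/reject decision is wrong."""
    failures = []
    for facts, claimed, known_correct in corpus:
        accepted = policy_says_eligible(*facts) == claimed
        if accepted != known_correct:
            failures.append((facts, claimed))
    return failures


def edge_case_sweep():
    """Enumerate predicate combinations around the rule's boundaries."""
    grid = product([True, False], [5, 6, 7], ["EU", "US"])
    return {facts: policy_says_eligible(*facts) for facts in grid}
```

An empty list from `run_corpus(CORPUS)` means the policy accepts every known-correct pair and rejects every known-incorrect one. The sweep output is meant for review by a domain expert, who confirms that each combination, including the part-time, EU, 5-month case, gets the intended answer.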

Integration pattern: defense in depth

A regulated production stack typically layers:

    User prompt
      ↓
    [Input Guardrail: PII redaction, denied topics, prompt injection check]
      ↓
    LLM inference (Bedrock model)
      ↓
    [Contextual grounding check]: is the response corroborated by the retrieved source?
      ↓
    [Automated Reasoning check]: is the response consistent with the formal policy?
      ↓
    [Output Guardrail: PII redaction, content filter, word filter]
      ↓
    Response (or rejection / human escalation)

Each layer catches a different failure mode:

- Input Guardrail catches malicious or out-of-scope input before inference.

- LLM produces a response.

- Contextual grounding catches “you made up a source citation.”

- Automated Reasoning catches “your answer contradicts rule X.”

- Output Guardrail catches “you produced PII or harmful content.”

When any layer rejects, the workflow either (a) returns a structured rejection (“I cannot determine that from policy”), (b) escalates to a human reviewer, or (c) re-prompts the LLM with the validation feedback to produce a corrected response. The choice depends on the workload — chat tends toward re-prompt; back-office determinations tend toward human escalation.
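The layering and the three rejection outcomes can be sketched as follows. This is a local illustration under stated assumptions: `CheckResult`, `first_rejection`, `handle_rejection`, the workload labels, and the toy checks are hypothetical names for this example, and in a real deployment the checks run inside Bedrock Guardrails rather than as Python callables.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    layer: str
    passed: bool
    reason: str = ""


Check = Callable[[str], CheckResult]


def first_rejection(text: str, checks: list[Check]) -> Optional[CheckResult]:
    """Run the layers in order and stop at the first one that rejects."""
    for check in checks:
        result = check(text)
        if not result.passed:
            return result
    return None


def handle_rejection(result: CheckResult, workload: str, retries_left: int) -> dict:
    """Pick one of the three outcomes described above."""
    if workload == "chat" and retries_left > 0:
        # (c) re-prompt the LLM with the validation feedback
        return {"action": "reprompt", "feedback": result.reason}
    if workload == "back_office":
        # (b) escalate to a human reviewer
        return {"action": "escalate", "layer": result.layer, "reason": result.reason}
    # (a) structured rejection
    return {"action": "reject", "message": "I cannot determine that from policy"}


# Toy checks standing in for the real guardrail layers
def grounding_check(text: str) -> CheckResult:
    return CheckResult("contextual_grounding", True)


def reasoning_check(text: str) -> CheckResult:
    if "eligible" in text:
        return CheckResult("automated_reasoning", False, "contradicts rule X")
    return CheckResult("automated_reasoning", True)
```

Capping re-prompts with `retries_left` keeps a chat workload from looping indefinitely on a question the policy will never validate; once retries run out it falls back to the structured rejection.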

Code example: HR policy chatbot

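    import json

    import boto3

    bedrock = boto3.client('bedrock-runtime', region_name='us-east-1')


    def hr_policy_query(question: str, employee: dict) -> dict:
        """Ask an HR policy question; return the answer only if the guardrail accepts it."""
        response = bedrock.invoke_model_with_response_stream(
            modelId='anthropic.claude-sonnet-4-6',
            # Automated Reasoning policy applied via Guardrail configuration
            guardrailIdentifier='gr-hr-policy-v3',
            guardrailVersion='14',
            body=json.dumps({
                "anthropic_version": "bedrock-2023-05-31",
                "max_tokens": 1024,
                "system": "You are an HR policy assistant. Answer the user's question using ONLY the policy document and known facts about the employee.",
                "messages": [{"role": "user", "content": [
                    {"type": "text", "text": f"Employee record: {json.dumps(employee)}\n\nQuestion: {question}"}
                ]}]
            }),
        )

        # Buffer the streamed text so nothing reaches the user before validation.
        # A generator cannot hand a rejection dict back to its caller via `return`,
        # so the function collects the text and returns a single dict.
        parts = []
        for event in response['body']:
            if 'chunk' not in event:
                continue  # skip non-chunk stream events
            chunk = json.loads(event['chunk']['bytes'])
            # NOTE: 'guardrail_validation', 'validation_result', and 'rejection_reason'
            # are illustrative names for the validation event, not confirmed Bedrock
            # response fields; adapt them to the event shape your guardrail returns.
            if chunk.get('type') == 'guardrail_validation':
                if chunk['validation_result'] == 'rejected':
                    return {
                        'response': None,
                        'reason': chunk['rejection_reason'],
                        'escalate_to_human': True,
                    }
            elif chunk.get('type') == 'content_block_delta':
                parts.append(chunk['delta'].get('text', ''))

        return {'response': ''.join(parts), 'reason': None, 'escalate_to_human': False}

The Automated Reasoning policy is configured as part of the Bedrock Guardrail definition. In this sketch, the function buffers the streamed text and, once the stream completes and the guardrail has evaluated the full response against the policy, returns either the validated answer or a rejection flagged for human escalation. Treat the validation event fields as placeholders: confirm the real response shape, and whether your guardrail's Automated Reasoning policy is evaluated on the streaming API at all, against the current Bedrock documentation.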

Production patterns

- Test corpus. Build a corpus of 200-500 known Q&A pairs (with correct, incorrect, and edge-case answers). Run regression tests on the policy on every change.

- Policy versioning. Treat the Automated Reasoning policy like code: version controlled, reviewed before deployment, and rolled back on regression. Bedrock supports policy versions natively.

- Human-in-the-loop for rejections. When Automated Reasoning rejects, route to a human reviewer with the rejection reason. The reviewer’s decision improves the next policy version.

- Observability. Log every check result. Track validation rates, rejection reasons, and policy-coverage gaps. Most policies have blind spots that show up only in production traffic.

- Document for audit. EU AI Act, NIST AI RMF, and HIPAA all want documented evidence that the AI system is risk-managed. The Automated Reasoning policy + the test corpus + the rejection logs are exactly that evidence.

- Update on rule changes. When the underlying rule (HR policy, insurance contract, regulation) changes, update the Automated Reasoning policy. Auditors will check that policy version aligns with the rule effective date.
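The observability and rule-change items above lend themselves to two small helpers. This is a sketch under assumptions: the log entry shape (`result`, `reason`) and both function names are invented for illustration and do not correspond to any Bedrock log format.

```python
from collections import Counter
from datetime import date


def summarize(check_log: list[dict]) -> dict:
    """Aggregate logged check results into a rejection rate and top reasons."""
    total = len(check_log)
    rejected = [entry for entry in check_log if entry["result"] == "rejected"]
    return {
        "total": total,
        "rejection_rate": len(rejected) / total if total else 0.0,
        "top_reasons": Counter(entry["reason"] for entry in rejected).most_common(3),
    }


def policy_matches_rule(policy_encodes_rule_dated: date, current_rule_effective: date) -> bool:
    """True when the deployed policy version encodes the rule document
    that is currently in effect."""
    return policy_encodes_rule_dated == current_rule_effective
```

A recurring rejection reason in `top_reasons` is a cue to review the policy or the prompt for that rule, and a failing `policy_matches_rule` check in CI or a scheduled job flags that the rule document changed without a matching policy update.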

Common pitfalls

- Encoding incomplete rules. A policy that does not cover all the cases the LLM might be asked about gives false confidence — the LLM answers, the policy validates, but the policy does not actually cover the case. Test against the full Q&A space, not just the easy cases.

- Treating Automated Reasoning as a Guardrail replacement. Guardrails block harmful output; Automated Reasoning validates rule-correctness. Run both.

- Skipping the test corpus. Policy correctness is measured against a corpus, not by inspection. Build the corpus before you deploy.

- Forgetting policy versioning at the rule-change boundary. The HR policy updates in October; the Automated Reasoning policy must update too. Auditors will check.

- Ignoring latency impact in real-time chat. The validation step adds time to every response: 200-500 ms is fine for back-office workflows but possibly noticeable in real-time chat. Verify with the actual user-experience flow.
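One way to verify the latency pitfall is to time the end-to-end call with and without the guardrail attached and compare percentiles. The helper below is a minimal sketch; `measure_latency_ms` is a hypothetical name, and `call` stands for whatever zero-argument function wraps your request.

```python
import time
from statistics import quantiles


def measure_latency_ms(call, runs: int = 20) -> dict:
    """Time `call` repeatedly and report median and 95th-percentile latency in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    # quantiles(n=20) yields 19 cut points at 5% steps:
    # index 9 is the 50th percentile, index 18 is the 95th.
    cuts = quantiles(samples, n=20)
    return {"p50": cuts[9], "p95": cuts[18]}
```

Comparing the p95 of the guarded and unguarded paths, rather than the averages, shows what the slowest chat turns will feel like to users.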

Where to go next

- Read the Amazon Bedrock Automated Reasoning checks documentation.

- Pair with Bedrock Guardrails in production for the full safety stack.

- Pair with GenAI guardrails secure AI governance for the broader AI governance pattern.

- Browse the AWS Security & Compliance hub and the AI Security subtopic.

Automated Reasoning is the missing layer for regulated AI deployments — the answer to “how do you know the model is right?” with mathematical proof rather than statistical confidence. Most teams discover the value when their first contextual-grounding-only chatbot produces a confident wrong answer that becomes a liability case. Build it in from day one when the answer is a determination that matters.

AWS Cloud Architect & AI Expert

AWS-certified cloud architect and AI expert with deep expertise in cloud migrations, cost optimization, and generative AI on AWS.