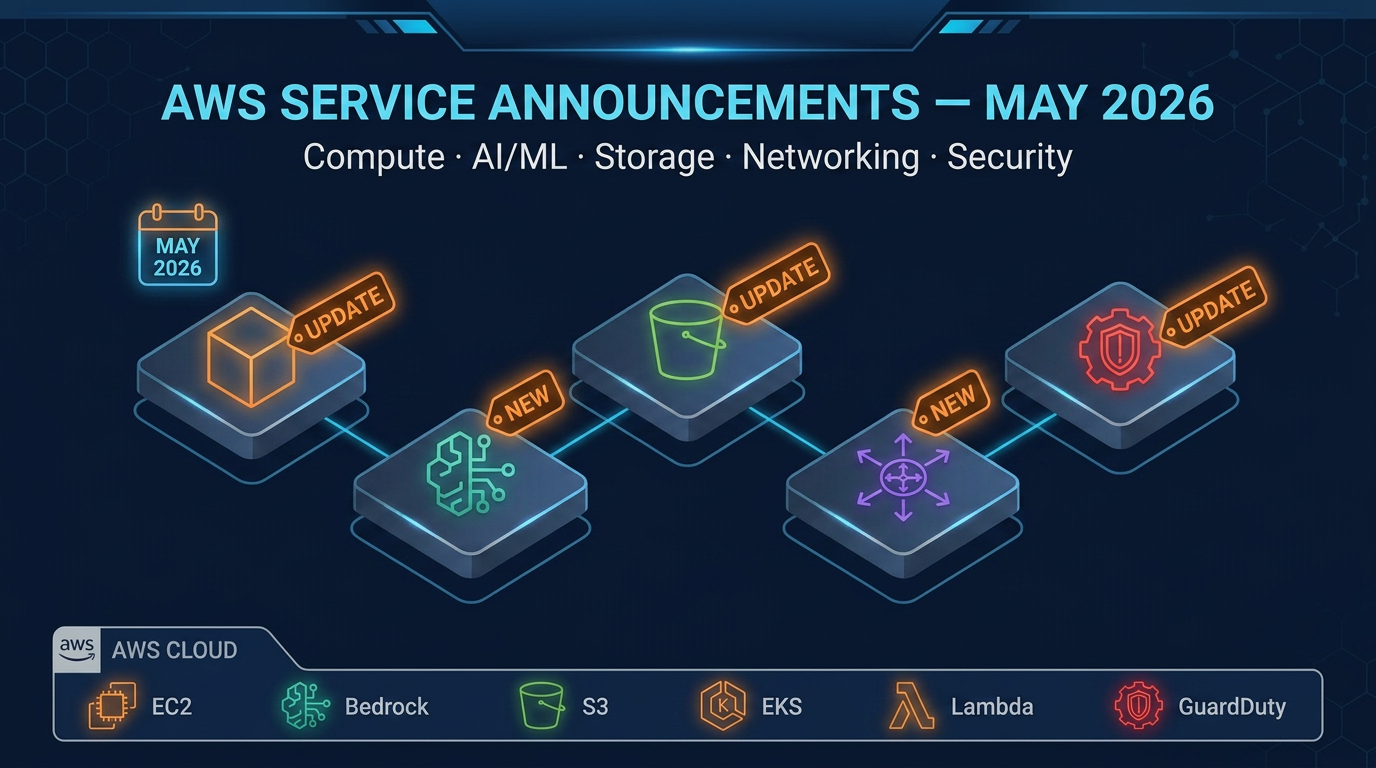

AWS Service Announcements: May 2026 Roundup

Quick summary: The most important AWS service announcements from May 2026 — covering compute, AI/ML, storage, networking, and security updates that affect production workloads.

AWS released a substantial set of updates in May 2026 across compute, AI/ML, containers, storage, networking, and security. Not every announcement warrants architectural changes, but several have direct implications for production costs and operational posture. This roundup covers the eight announcements your team needs to evaluate.

1. Compute — New EC2 Graviton4-Based Instance Families Now Generally Available

What changed: AWS expanded Graviton4-based instance availability with two new families targeting memory-optimized and high-performance computing use cases. The new memory-optimized variant offers larger instance sizes than previously available, and pricing follows the standard Graviton discount pattern relative to equivalent Intel-based instances.

Why it matters: Graviton4 workloads have consistently shown 20–40% better price-performance for many server-side applications compared to x86 equivalents. Teams running large Java, Go, or containerized Python workloads on r6i or r6a instances should benchmark against the new family. The larger sizes remove a previous constraint that forced some workloads onto non-Graviton memory-optimized options.

What this means for your workloads: Run a Cost Explorer analysis on your current r6i/r6a spend. If memory-optimized instances account for more than 15% of your EC2 bill, a migration evaluation is warranted. Test with a single instance type change in a staging Auto Scaling group before fleet-wide rollout.
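The 15% check above can be sketched as a small calculation. This is a minimal sketch, assuming you have already exported monthly EC2 spend grouped by instance family (for example, from a Cost Explorer report). The function names, the family tuple, and the sample figures are hypothetical and are not part of any AWS API.

```python
from typing import Mapping

# Assumption: the x86 memory-optimized families named in this section.
MEMORY_OPTIMIZED_X86 = ("r6i", "r6a")


def memory_optimized_share(spend_by_family: Mapping[str, float]) -> float:
    """Return the fraction of total EC2 spend that goes to r6i/r6a families."""
    total = sum(spend_by_family.values())
    if total <= 0:
        return 0.0
    candidate = sum(
        cost
        for family, cost in spend_by_family.items()
        if family in MEMORY_OPTIMIZED_X86
    )
    return candidate / total


def migration_evaluation_warranted(
    spend_by_family: Mapping[str, float], threshold: float = 0.15
) -> bool:
    """Apply the 15% rule of thumb from the text (threshold is adjustable)."""
    return memory_optimized_share(spend_by_family) > threshold


# Hypothetical monthly spend in USD, keyed by instance family.
example_spend = {"r6i": 1200.0, "r6a": 800.0, "m6i": 6000.0, "c6i": 2000.0}
```

Calling `migration_evaluation_warranted(example_spend)` with these made-up figures flags the account for evaluation, because r6i and r6a together account for a fifth of the total.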

2. Compute — Lambda Increased Default Concurrency Limits and Faster Cold Starts

What changed: AWS raised default Lambda concurrent execution limits for new accounts, and announced improvements to cold start initialization times for runtimes including Python 3.12, Node.js 22, and Java 21 with SnapStart. The improvements are automatic — no configuration changes required.

Why it matters: Teams using Lambda for latency-sensitive workloads such as API backends or real-time event processing have historically provisioned concurrency to avoid cold starts, adding cost. The cold start improvements reduce the need for provisioned concurrency in many cases. The higher default limits reduce friction for teams scaling event-driven architectures without having to submit service limit increase requests.

What this means for your workloads: Review your provisioned concurrency settings for functions where you enabled it primarily for cold start mitigation rather than guaranteed throughput. You may be able to reduce provisioned concurrency and rely on standard on-demand invocations, cutting costs without sacrificing user-facing latency.
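One way to shortlist functions for this review is to look for provisioned concurrency that is rarely used at peak. The sketch below assumes you have already collected each function's peak provisioned concurrency utilization (for example, from CloudWatch) into plain records. The `FunctionUsage` type and the 0.5 cutoff are hypothetical choices for illustration, not AWS guidance.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class FunctionUsage:
    name: str
    provisioned_concurrency: int  # configured provisioned concurrency
    peak_utilization: float       # 0.0-1.0, peak share of it actually in use


def review_candidates(
    functions: Iterable[FunctionUsage], max_peak_utilization: float = 0.5
) -> List[str]:
    """Return names of functions whose provisioned concurrency is mostly idle.

    Low peak utilization suggests the setting exists for cold start
    mitigation rather than guaranteed throughput (an assumption to verify).
    """
    return [
        f.name
        for f in functions
        if f.provisioned_concurrency > 0
        and f.peak_utilization < max_peak_utilization
    ]
```

Functions on the resulting list are only candidates. Measure cold start latency after the platform improvements before lowering any setting.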

3. AI/ML — Amazon Bedrock Adds Cross-Region Inference Routing with Automatic Failover

What changed: Bedrock now supports cross-region inference routing, allowing applications to automatically route inference requests to a secondary region when a model is throttled or unavailable in the primary region. This is configured via a new inference profile construct without requiring application-level retry logic.

Why it matters: Bedrock throttling has been a real production pain point for teams with spiky inference demand. Previously, hitting a quota limit required either waiting or building complex retry and fallback logic in application code. Cross-region inference routing moves this complexity to the platform layer, improving application reliability and simplifying code.

What this means for your workloads: If you run production workloads on Bedrock and have experienced throttling errors during peak periods, configure cross-region inference profiles for your critical inference paths. Be aware of data residency requirements — ensure that your secondary region is compliant with any data sovereignty obligations before enabling cross-region routing.
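The data residency check can be expressed as a simple pre-flight validation. This is a minimal sketch, assuming you know which regions an inference profile may route to and which regions your compliance policy allows. The function name and the region lists are illustrative, and the sketch does not call any Bedrock API.

```python
from typing import Iterable, List


def noncompliant_regions(
    profile_regions: Iterable[str], allowed_regions: Iterable[str]
) -> List[str]:
    """Return regions the profile may route to that policy does not allow."""
    allowed = set(allowed_regions)
    return sorted({region for region in profile_regions if region not in allowed})


# Hypothetical example: an EU-only residency policy.
allowed = {"eu-west-1", "eu-central-1"}
profile = ["eu-west-1", "eu-central-1", "us-east-1"]
violations = noncompliant_regions(profile, allowed)
```

An empty result means every destination region is within the allowed set. Anything else should block enabling the profile until reviewed.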

4. AI/ML — SageMaker Unified Studio Adds Integrated MLflow Experiment Tracking

What changed: SageMaker Unified Studio now includes native MLflow experiment tracking, removing the need to self-host or integrate an external MLflow instance. Teams can log parameters, metrics, and model artifacts directly from training jobs into a managed MLflow tracking server provisioned within the Studio environment.

Why it matters: MLflow has become a de facto standard for ML experiment tracking, but running it in production requires infrastructure management — database backend, artifact store, networking, and upgrades. Managed MLflow within SageMaker eliminates that overhead. Teams already using SageMaker for training and deployment gain a complete experiment lifecycle in a single environment.

What this means for your workloads: If your data science team runs a self-managed MLflow server on EC2, evaluate migration to managed MLflow within SageMaker Unified Studio. Calculate the EC2 costs plus engineering time spent on MLflow maintenance. For most teams under 20 data scientists, the managed option is cheaper and simpler.
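The comparison described above is simple arithmetic. The sketch below shows one way to frame it, with every input supplied by you: the function names are hypothetical, and no AWS pricing is assumed or embedded.

```python
def self_managed_monthly_cost(
    ec2_monthly_usd: float,
    maintenance_hours_per_month: float,
    engineer_hourly_rate_usd: float,
) -> float:
    """EC2 cost plus the engineering time spent maintaining MLflow."""
    return ec2_monthly_usd + maintenance_hours_per_month * engineer_hourly_rate_usd


def managed_is_cheaper(
    self_managed_usd: float, managed_monthly_usd: float
) -> bool:
    """True when the managed option costs less per month than self-hosting."""
    return managed_monthly_usd < self_managed_usd
```

Take the managed figure from your own quote or billing estimate. The point of the sketch is that maintenance hours belong in the total, not only the instance bill.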

5. Containers — Amazon EKS Improves Node Provisioning Speed and Adds Karpenter v1 as Default

What changed: EKS now ships Karpenter v1 as the recommended default node provisioner for new clusters, replacing the older Cluster Autoscaler recommendation. AWS also announced improvements to EC2 node provisioning latency, reducing the time from pod scheduling to node ready state.

Why it matters: Karpenter v1 brings a stable, production-ready API surface after a long period in beta. It provides more flexible node provisioning, bin-packing across multiple instance types, and faster scale-out compared to Cluster Autoscaler. Faster node provisioning directly affects time-to-scale for burst workloads — critical for event-driven systems and ML training jobs that need nodes quickly.

What this means for your workloads: Existing clusters running Cluster Autoscaler should evaluate migrating to Karpenter v1; the migration path is well documented. New cluster deployments should use Karpenter v1 from day one. Review the Karpenter NodePool and EC2NodeClass CRDs to understand how the v1 configuration differs from earlier beta versions.
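For orientation, a v1 NodePool references its EC2NodeClass through a `nodeClassRef` with `group`, `kind`, and `name` fields. The sketch below builds a minimal manifest as a Python dictionary and serializes it to JSON, which `kubectl apply -f` also accepts. The helper name and the example values are hypothetical, and a real NodePool would usually also set limits and disruption settings, so check the Karpenter documentation for your version.

```python
import json
from typing import Iterable


def node_pool_manifest(
    name: str, node_class_name: str, architectures: Iterable[str]
) -> dict:
    """Build a minimal Karpenter v1 NodePool manifest (illustrative only)."""
    return {
        "apiVersion": "karpenter.sh/v1",
        "kind": "NodePool",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "spec": {
                    # v1 references the node class by group, kind, and name.
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": node_class_name,
                    },
                    # Restrict which CPU architectures may be provisioned.
                    "requirements": [
                        {
                            "key": "kubernetes.io/arch",
                            "operator": "In",
                            "values": list(architectures),
                        }
                    ],
                }
            }
        },
    }


manifest_json = json.dumps(
    node_pool_manifest("default", "default", ["arm64", "amd64"]), indent=2
)
```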

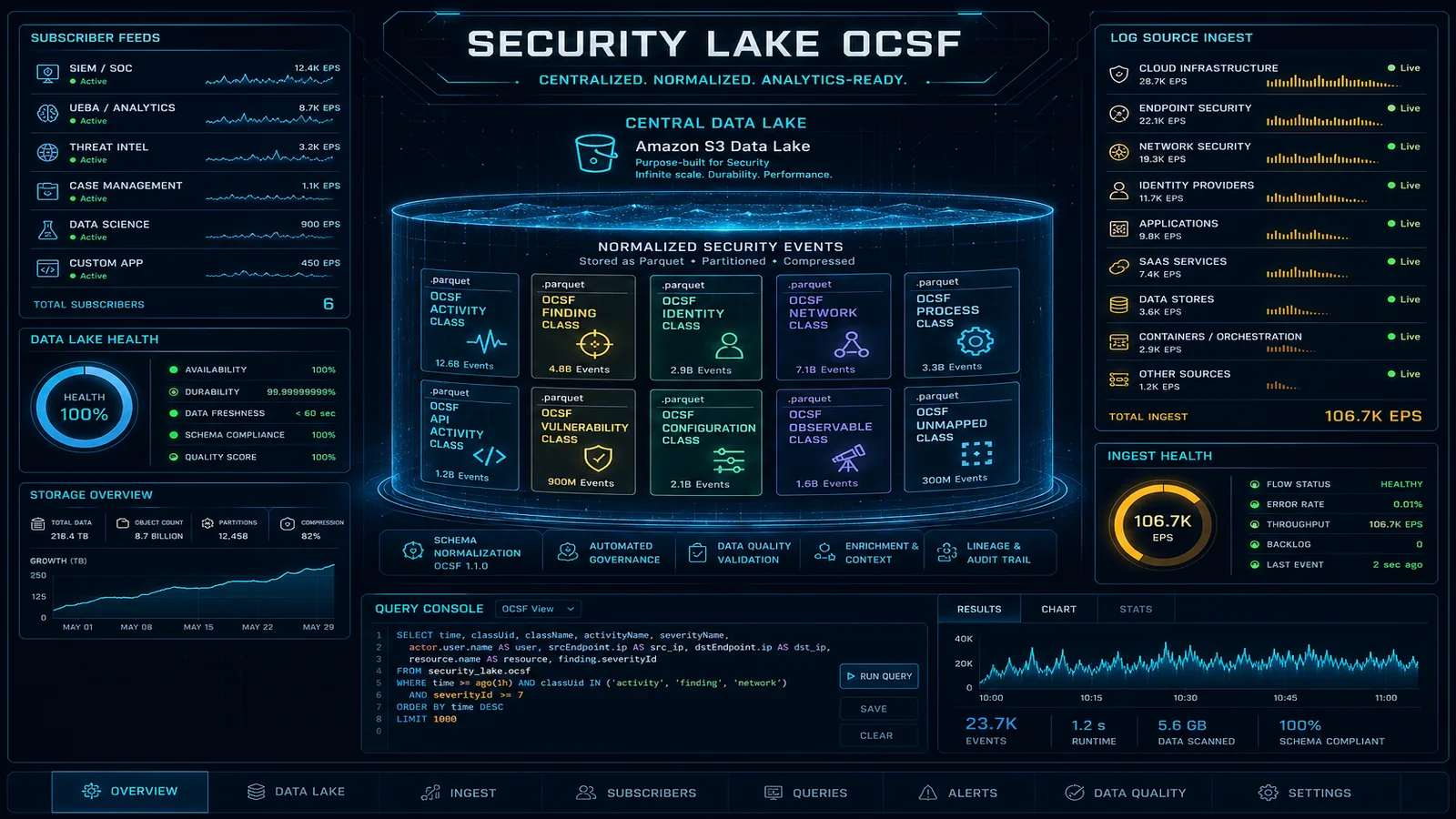

6. Storage — S3 Tables Generally Available with Iceberg-Native Support

What changed: Amazon S3 Tables reached general availability. S3 Tables provides purpose-built S3 buckets optimized for Apache Iceberg table storage, with automatic compaction, snapshot management, and metadata layer maintenance handled by AWS. Integration with Athena, Redshift, EMR, and Glue is native.

Why it matters: Managing Iceberg tables on standard S3 requires running compaction jobs, managing expired snapshots, and handling metadata layer scaling — operational overhead that often falls on data engineering teams as an afterthought. S3 Tables offloads this to AWS, reducing the engineering time required to maintain a performant Iceberg data lake. Query performance improves because compaction runs automatically rather than only when someone remembers to trigger it.

What this means for your workloads: If you have Iceberg tables on standard S3 where compaction runs are inconsistent or skipped, migrating to S3 Tables removes that maintenance burden. Evaluate tables where query latency has been increasing over time due to small file accumulation — these are the best candidates for migration.
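Small file accumulation can be estimated from a listing of data file sizes. This is a minimal sketch, assuming you have already collected the sizes in bytes for a table's data files (for example, from an S3 listing or the table's metadata). The 32 MiB cutoff and the function name are hypothetical and should be tuned to your own target file size.

```python
from typing import Iterable

MIB = 1024 * 1024


def small_file_ratio(
    file_sizes_bytes: Iterable[int], small_threshold_bytes: int = 32 * MIB
) -> float:
    """Return the fraction of data files smaller than the threshold."""
    sizes = list(file_sizes_bytes)
    if not sizes:
        return 0.0
    small = sum(1 for size in sizes if size < small_threshold_bytes)
    return small / len(sizes)
```

Tables with a high ratio that also show rising query latency are the candidates this section describes. The ratio alone is a hint, not a migration decision.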

7. Networking — AWS Network Firewall Adds TLS Inspection for Egress Traffic

What changed: AWS Network Firewall now supports TLS inspection for egress traffic, allowing you to decrypt, inspect, and re-encrypt outbound HTTPS connections. This enables detection of data exfiltration, malware command-and-control communication, and policy violations over encrypted channels.

Why it matters: Without TLS inspection, encrypted outbound traffic is opaque to network security controls. Attackers can and do exfiltrate data over HTTPS to evade detection. For organizations in regulated industries or with strict data loss prevention requirements, egress TLS inspection closes a significant visibility gap. Previously, achieving this required deploying a third-party inspection appliance.

What this means for your workloads: Review your compliance and security requirements. If your workloads handle sensitive data and you have no visibility into encrypted outbound traffic, enabling egress TLS inspection is a meaningful security improvement. Note that TLS inspection adds latency and requires certificate management, so plan the PKI configuration carefully before enabling it in production.

8. Security — IAM Access Analyzer Adds Automated Remediation Suggestions for Overly Permissive Policies

What changed: IAM Access Analyzer now generates specific, actionable remediation suggestions for overly permissive IAM policies identified during analysis. Rather than only flagging a policy as overly broad, it generates a replacement policy scoped to the actions and resources actually used in the past 90 days, based on CloudTrail data.

Why it matters: Least-privilege IAM has long been a best practice but is difficult to implement retroactively. Generating a correctly scoped replacement policy manually requires analyzing CloudTrail logs and cross-referencing IAM actions, which is time-consuming work that rarely gets prioritized. Automated suggestions make least-privilege remediation actionable for teams that have been deferring it.

What this means for your workloads: Run Access Analyzer on your accounts with the new remediation suggestion feature enabled. Prioritize reviewing suggestions for roles attached to EC2 instances, Lambda functions, and ECS tasks — these are the highest-risk surfaces if compromised. Treat the suggested policies as a starting point and verify them in a staging environment before applying to production.
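To build intuition for what a scoped replacement policy looks like, the idea can be sketched in a few lines. This is not how Access Analyzer works internally. It is a simplified illustration that assumes you already have the list of actions a policy grants and the list of actions observed in CloudTrail, and the function names are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List


def scope_policy_actions(
    granted_actions: Iterable[str], used_actions: Iterable[str]
) -> List[str]:
    """Keep only observed actions that the original policy actually granted.

    Wildcards such as "s3:*" in the granted list are replaced by the
    specific actions seen in use. Matching is case-insensitive.
    """
    patterns = [pattern.lower() for pattern in granted_actions]
    kept = {
        action
        for action in used_actions
        if any(fnmatchcase(action.lower(), pattern) for pattern in patterns)
    }
    return sorted(kept)


def scoped_policy_document(actions: List[str], resources: List[str]) -> dict:
    """Wrap the scoped actions in a standard IAM policy document."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": actions, "Resource": resources}
        ],
    }
```

An action that was used but never granted is excluded, because the scoped policy should not widen access. Rarely used actions, such as those needed only during incident response, may be missing from a 90-day window, which is one reason to verify suggestions in staging first.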

Staying Current with AWS Releases

AWS publishes updates across dozens of services continuously. For teams managing production infrastructure, the challenge is not finding announcements — it is triaging them to identify what actually requires action.

The highest-leverage filter: focus on services you are actively using and services that affect security posture. Cost-impacting changes (new instance types, pricing tier changes) are worth evaluating quarterly. Security changes (new IAM capabilities, encryption options, compliance scope) warrant faster review.

If your team lacks the bandwidth to stay current with AWS releases and evaluate their impact on your infrastructure, that is a signal worth paying attention to. FactualMinds helps organizations track relevant AWS changes and translate them into infrastructure improvements. Reach out through our AWS Managed Services page to discuss ongoing support options.

AWS Cloud Engineering

The FactualMinds engineering and consulting team — AWS-certified architects and engineers building production cloud infrastructure for organizations across industries. As an AWS Select Tier Consulting Partner, we bring hands-on expertise in serverless, security, data analytics, cost optimization, and cloud migration.